Giving objects to humans and taking objects from them are fundamental capabilities for collaborative robots in a variety of applications. NVIDIA researchers hope to improve the second half of that exchange, human-to-robot handovers, by treating it as a hand grasp classification problem.

In a paper titled “Human Grasp Classification for Reactive Human-to-Robot Handovers,” researchers at NVIDIA’s Seattle AI Robotics Research Lab describe a proof of concept that they say produces more fluent human-to-robot handovers than previous approaches. The system classifies how a human is grasping an object and plans the robot’s trajectory to take the object from the human’s hand.

To do this, the researchers developed a perception system that can accurately identify a hand and an object in a variety of poses. This was no easy task, they said, because the hand and the object often occlude each other.

How the system works

To solve the problem, the team broke the approach into several phases. First, the researchers defined a set of grasp types describing how the human hand holds the object: “on-open-palm,” “pinch-bottom,” “pinch-top,” “pinch-side,” and “lifting.”

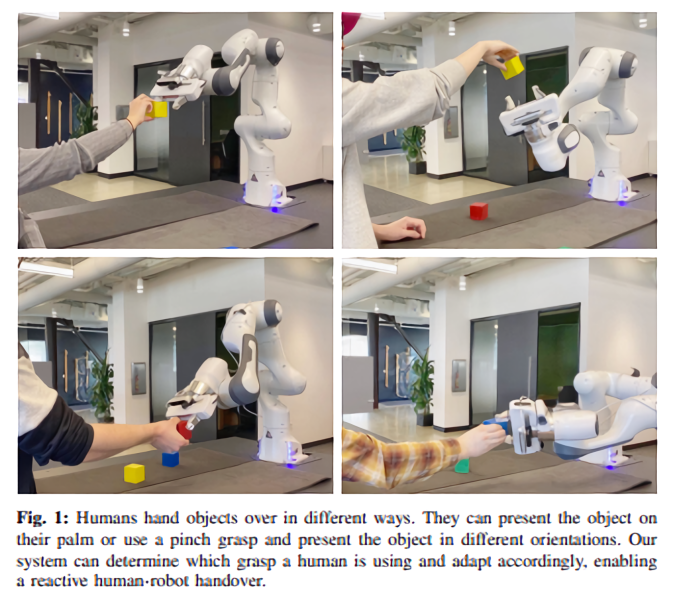

“Humans hand objects over in different ways,” the researchers write. “They can present the object on their palm or use a pinch grasp and present the object in different orientations. Our system can determine which grasp a human is using and adapt accordingly, enabling a reactive human-robot handover. If the human hand isn’t holding anything, it could be either waiting for the robot to handover an object or just doing nothing specific.”
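The label set the classifier chooses from can be written down as a small enumeration. This is a sketch: the five grasp names come from the paper, while the two empty-hand labels (`EMPTY_WAITING` for a hand waiting for the robot, `EMPTY_IDLE` for a hand doing nothing specific) are hypothetical names chosen for illustration.

```python
from enum import Enum


class HumanGrasp(Enum):
    """Grasp categories; the five grasp names are from the paper."""
    ON_OPEN_PALM = "on-open-palm"
    PINCH_BOTTOM = "pinch-bottom"
    PINCH_TOP = "pinch-top"
    PINCH_SIDE = "pinch-side"
    LIFTING = "lifting"
    # Empty-hand categories: these two names are hypothetical.
    EMPTY_WAITING = "empty-waiting"
    EMPTY_IDLE = "empty-idle"


def is_holding_object(grasp: HumanGrasp) -> bool:
    """True for the five grasp types, False for the two empty-hand types."""
    return grasp not in (HumanGrasp.EMPTY_WAITING, HumanGrasp.EMPTY_IDLE)


HOLDING = [g for g in HumanGrasp if is_holding_object(g)]
print(len(HumanGrasp), len(HOLDING))  # 7 5
```

Keeping the empty-hand cases as explicit classes, rather than as "no detection," lets the same classifier tell the robot when there is nothing to take.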

An overview of NVIDIA’s handover framework. The framework takes the point cloud centered on the detected hand and uses a model inspired by PointNet++ to classify it as one of several grasp types covering the ways humans tend to hold objects. The task model then plans the robot’s grasp adaptively.
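The first stage of that pipeline, cutting out the part of the depth point cloud around a detected hand, can be sketched in plain Python. The function name, the spherical crop and the 0.15 m radius are assumptions for illustration; the article does not say how the region around the hand is chosen.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def crop_around_hand(cloud: List[Point], hand_center: Point,
                     radius: float = 0.15) -> List[Point]:
    """Keep points within `radius` metres of the detected hand, re-centred on it.

    The 0.15 m default is an assumed value, not a published parameter.
    """
    cx, cy, cz = hand_center
    cropped = []
    for x, y, z in cloud:
        if math.dist((x, y, z), hand_center) <= radius:
            # Express each point relative to the hand so the classifier
            # sees a hand-centred cloud regardless of where the hand is.
            cropped.append((x - cx, y - cy, z - cz))
    return cropped


cloud = [(0.50, 0.00, 1.00), (0.55, 0.05, 1.02), (1.20, 0.40, 0.90)]
local = crop_around_hand(cloud, hand_center=(0.50, 0.00, 1.00))
print(len(local))  # 2
```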

Next, the researchers used an Azure Kinect RGB-D camera to build a dataset of 151,551 images covering eight subjects with various hand shapes and hand poses. As they describe it: “Specifically, we show an example image of a hand grasp to the subject, and record the subject performing similar poses from twenty to sixty seconds. The whole sequence of images are therefore labeled as the corresponding human grasp category. During the recording, the subject can move his/her body and hand to different positions to diversify the camera viewpoints. We record both left and right hands for each subject.”
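The sequence-level labeling described in that quote can be sketched as follows: every frame in a recording simply inherits the grasp category of the example image shown to the subject. The `Recording` fields and the tuple layout are hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Recording:
    """One 20-60 s capture of a subject imitating a shown grasp (fields are hypothetical)."""
    subject_id: int
    hand: str          # "left" or "right"; both hands are recorded per subject
    grasp_label: str   # category of the example image shown to the subject
    num_frames: int


def label_frames(recordings: List[Recording]) -> List[Tuple[int, str, int, str]]:
    """Assign every frame the label of the sequence it belongs to."""
    samples = []
    for rec in recordings:
        for frame_idx in range(rec.num_frames):
            samples.append((rec.subject_id, rec.hand, frame_idx, rec.grasp_label))
    return samples


recs = [
    Recording(subject_id=1, hand="left", grasp_label="pinch-top", num_frames=3),
    Recording(subject_id=1, hand="right", grasp_label="pinch-top", num_frames=2),
]
samples = label_frames(recs)
print(len(samples), {s[3] for s in samples})  # 5 {'pinch-top'}
```

Labeling whole sequences this way avoids per-frame annotation, at the cost of trusting that the subject holds the intended grasp for the entire recording.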


Finally, the robot adjusts its orientation and trajectory based on the human grasp. To recognize that grasp, NVIDIA trained a human grasp classification network based on PointNet++. Rather than running convolutional neural networks on depth images, the researchers chose PointNet++ for its efficiency and its success in robotics applications such as markerless teleoperation and grasp generation.
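PointNet++ works directly on unordered 3D points. Each of its set-abstraction layers first picks a well-spread subset of "centroid" points with farthest point sampling and then groups neighbors around them. The pure-Python sketch below shows only that sampling step; it is not NVIDIA's network, and practical implementations typically use a batched GPU kernel instead.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def farthest_point_sampling(points: List[Point], k: int, start: int = 0) -> List[int]:
    """Return indices of k points, each chosen to be farthest from those already picked."""
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    chosen = [start]
    # Distance from every point to its nearest already-chosen point.
    nearest = [math.dist(p, points[start]) for p in points]
    while len(chosen) < k:
        nxt = max(range(len(points)), key=lambda i: nearest[i])
        chosen.append(nxt)
        # A newly chosen point can only shrink each point's nearest distance.
        for i, p in enumerate(points):
            nearest[i] = min(nearest[i], math.dist(p, points[nxt]))
    return chosen


pts = [(0, 0, 0), (0.1, 0, 0), (1, 0, 0), (0.5, 0, 0)]
print(farthest_point_sampling(pts, 3))  # [0, 2, 3]
```

Because the result depends only on distances between points, not on their order in memory, the same hand produces similar centroids however the sensor happens to list the points.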

The handover task is modeled as a Robust Logical-Dynamical System, an existing approach that uses the human grasp classification to generate motion plans that avoid contact between the gripper and the human hand. The network was trained on one NVIDIA TITAN X GPU with CUDA 10.2 and the PyTorch framework, and testing was done on one NVIDIA RTX 2080 Ti GPU.
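The reactive behavior can be illustrated with a much simpler decision rule than a Robust Logical-Dynamical System. The sketch re-reads the classifier output on every tick, backs off when confidence is low (the behavior the researchers report for unfamiliar grasps), and otherwise picks an approach for the detected grasp. The approach table, the 0.6 threshold and the function name are all hypothetical; the actual system generates contact-free motion plans rather than looking up a fixed table.

```python
from typing import Dict

# Hypothetical mapping from grasp class to gripper approach; illustrative only.
APPROACH: Dict[str, str] = {
    "on-open-palm": "from above",
    "pinch-bottom": "from above",
    "pinch-top": "from below",
    "pinch-side": "from the opposite side",
    "lifting": "from the front",
}


def choose_action(probs: Dict[str, float], threshold: float = 0.6) -> str:
    """Pick the next action from classifier probabilities; call once per control tick.

    `threshold` is an assumed confidence cut-off below which the robot backs off.
    """
    label, confidence = max(probs.items(), key=lambda kv: kv[1])
    if confidence < threshold:
        return "back off and replan"
    if label not in APPROACH:
        return "wait"  # empty hand: nothing to take from the human
    return f"approach {APPROACH[label]}"


print(choose_action({"pinch-top": 0.9, "lifting": 0.1}))  # approach from below
print(choose_action({"pinch-top": 0.5, "lifting": 0.5}))  # back off and replan
```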

Future improvements of human-to-robot handovers

To test their system, the researchers used two Franka Emika cobot arms to take colored blocks from a human’s hand. The arms were set up on identical tables in different locations. According to the researchers, the system achieved a grasp success rate of 100% and a planning success rate of 64.3%. It took 17.34 seconds to plan and execute actions, versus 20.93 seconds for a comparable system.
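The timing comparison works out as follows, using only the two figures quoted above.

```python
ours_s = 17.34      # plan-and-execute time reported for NVIDIA's system
baseline_s = 20.93  # time reported for the comparable system

saved_s = baseline_s - ours_s
reduction_pct = 100 * saved_s / baseline_s
print(f"{saved_s:.2f} s faster, {reduction_pct:.1f}% less time")
# 3.59 s faster, 17.2% less time
```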

However, the researchers acknowledge that one limitation of their approach is that it applies only to a fixed set of grasp types. In future work, they aim to have the system learn different grasp types from data instead of relying on manually specified rules.

“In general, our definition of human grasps covers 77% of the user grasps even before they know the ways of grasps defined in our system,” wrote the researchers. “While our system can deal with most of the unseen human grasps, they tend to lead to higher uncertainty and sometimes would cause the robot to back off and replan. … This suggests directions for future research; ideally we would be able to handle a wider range of grasps that a human might want to use.”

Five human grasp types and two empty-hand types. These cover various ways humans tend to grasp objects. | Credit: NVIDIA
