NVIDIA CEO Jensen Huang (left) and Senior Director of Robotics Research Dieter Fox at NVIDIA’s robotics lab.

The Robot Report named NVIDIA one of its must-watch robotics companies for 2019 because of its new Jetson AGX Xavier module, which the company hopes will become the go-to brain for next-generation robots. Now there’s even more reason to keep an eye on NVIDIA’s robotics moves: the Santa Clara, Calif.-based chipmaker just opened its first full-blown robotics research lab.

Located in Seattle, a short walk from the University of Washington, NVIDIA’s robotics lab is tasked with driving breakthrough research that will enable next-generation collaborative robots to operate robustly and safely among people. The lab is led by Dieter Fox, senior director of robotics research at NVIDIA and a professor in UW’s Paul G. Allen School of Computer Science and Engineering.

“All of this is working toward enabling the next generation of smart manipulators that can also operate in open-ended environments where not everything is designed specifically for them,” said Fox. “By pulling together recent advances in perception, control, learning and simulation, we can help the research community solve some of the greatest challenges in robotics.”

The 13,000-square-foot lab will be home to 50 roboticists, consisting of 20 NVIDIA researchers plus visiting faculty and interns from around the world. NVIDIA wants robots to be able to naturally perform tasks alongside people in real-world, unstructured environments. To do that, the robots need to be able to understand what a person wants to do and figure out how to help achieve a goal.

The idea for NVIDIA’s robotics lab came about in the summer of 2017 in Hawaii, where Fox and NVIDIA CEO Jensen Huang met at CVPR, an annual computer vision conference, and discussed exciting research areas and difficult open problems in robotics.

“NVIDIA dedicates itself to solving the very difficult challenges that computing can solve. And robotics is unquestionably one of the final frontiers of artificial intelligence. It requires the convergence of so many types of technologies,” Huang told The Robot Report. “We wanted to dedicate ourselves to make a contribution to the field of robotics. Along the way it’s going to spin off all kinds of great computer science and AI knowledge. We really hope the technology that will be created will allow industries from healthcare to manufacturing to transportation and logistics to make a great advance.”

NVIDIA said about a dozen projects are currently underway and that it will make its research papers publicly available. Fox said NVIDIA is primarily interested, early on at least, in sharing its software developments with the robotics community. “Some of the core techniques you see in the kitchen demo will be wrapped up into really robust components,” Fox said.

We attended the official opening of NVIDIA’s robotics research lab. Here’s a peek inside.

Mobile manipulator in the kitchen

NVIDIA’s mobile manipulator includes a Franka Emika Panda cobot on a Segway RMP 210 UGV. (Credit: NVIDIA)

The main test area inside NVIDIA’s robotics lab is a kitchen the company purchased from IKEA. A mobile manipulator, consisting of a Franka Emika Panda cobot arm on a Segway RMP 210 UGV, will try its hand at increasingly difficult tasks, ranging from retrieving objects from cabinets to learning how to clean the dining table to helping a person cook a meal.

During the open house, the mobile manipulator consistently fetched objects and put them in a drawer, opening and closing the drawer with its gripper. Fox admitted this first task is relatively easy. The robot uses deep learning to detect specific objects; because its detector was trained solely in simulation, it doesn’t require any manual data labeling. The robot uses the NVIDIA Jetson platform for navigation and performs real-time inference for processing and manipulation on NVIDIA TITAN GPUs. The deep learning-based perception system was trained using the cuDNN-accelerated PyTorch deep learning framework.

Fox also explained why NVIDIA chose to test a mobile manipulator in a kitchen. “The idea to choose the kitchen was not because we think the kitchen is going to be the killer app in the home,” said Fox. “It was really just a stand-in for these other domains.” A kitchen is a structured environment, but Fox said it is easy to introduce new variables to the robot in the form of more complex tasks, such as dealing with unknown objects or assisting a person who is cooking a meal.

Deep Object Pose Estimation

NVIDIA Deep Object Pose Estimation (DOPE) system. (Credit: NVIDIA)

NVIDIA introduced its Deep Object Pose Estimation (DOPE) system in October 2018, and it was on display in Seattle. Using NVIDIA’s algorithm, a robot can infer the 3D pose of an object from a single image for the purpose of grasping and manipulation. DOPE was trained solely on synthetic data.

One of the key challenges of synthetic data is bridging the reality gap so that networks trained on synthetic data operate correctly on real-world data. NVIDIA said its one-shot deep neural network has accomplished that, albeit on a limited basis. The system estimates object poses in two steps. First, the deep neural network estimates belief maps of 2D keypoints for all the objects in the image coordinate system. Next, peaks from these belief maps are fed to a standard perspective-n-point (PnP) algorithm to estimate the 6-DoF pose of each object instance.
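To make the second step a little more concrete, here is a minimal, illustrative Python sketch of how peaks might be pulled out of a single belief map before they are handed to a PnP solver. It assumes a belief map is simply a 2D grid of scores and treats a "peak" as any cell above a score threshold that is at least as large as its (up to eight) neighbors. The function name `find_peaks`, the 0.1 threshold and the toy grid are hypothetical choices for illustration; this is not NVIDIA’s DOPE code, and DOPE’s actual post-processing may differ.

```python
from typing import List, Tuple

# Hypothetical helper for illustration only; not part of DOPE's released code.
def find_peaks(belief_map: List[List[float]],
               threshold: float = 0.1) -> List[Tuple[int, int, float]]:
    """Return (row, col, score) for each local maximum scoring above `threshold`.

    A cell counts as a peak if its score exceeds the threshold and is >= every
    neighbor in its 3x3 window (cells on the border have fewer neighbors).
    Peaks are returned strongest first.
    """
    rows = len(belief_map)
    cols = len(belief_map[0]) if rows else 0
    peaks = []
    for r in range(rows):
        for c in range(cols):
            score = belief_map[r][c]
            if score <= threshold:
                continue  # too weak to be a keypoint candidate
            neighbors = (
                belief_map[nr][nc]
                for nr in range(max(r - 1, 0), min(r + 2, rows))
                for nc in range(max(c - 1, 0), min(c + 2, cols))
                if (nr, nc) != (r, c)
            )
            if all(score >= n for n in neighbors):
                peaks.append((r, c, score))
    peaks.sort(key=lambda p: p[2], reverse=True)
    return peaks


if __name__ == "__main__":
    # Toy belief map with two bright spots (assumed data, not DOPE output).
    toy_map = [
        [0.0, 0.1, 0.0, 0.0, 0.0],
        [0.1, 0.9, 0.2, 0.0, 0.0],
        [0.0, 0.2, 0.1, 0.0, 0.3],
        [0.0, 0.0, 0.0, 0.4, 0.6],
    ]
    print(find_peaks(toy_map))  # [(1, 1, 0.9), (3, 4, 0.6)]
```

In a full pipeline, each peak’s (column, row) position would become an (x, y) pixel coordinate. Paired with the object’s known 3D keypoint locations and the camera intrinsics, those 2D points are what a standard PnP solver, such as OpenCV’s `cv2.solvePnP`, takes as input to recover a 6-DoF pose.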

Read our interview about the DOPE system with Stan Birchfield, a Principal Research Scientist at NVIDIA, here.

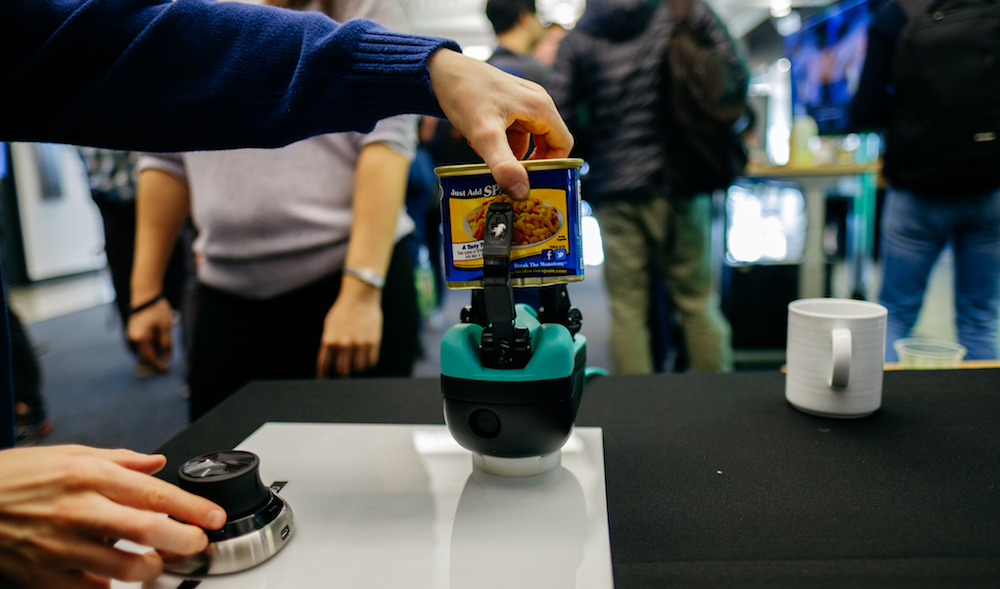

ReFlex TakkTile 2 gripper from RightHand Robotics.

Tactile sensing

NVIDIA had two demos showcasing tactile sensing, which most commercial robotic grippers still lack. One demo featured a ReFlex TakkTile 2 gripper from RightHand Robotics, which recently raised $23 million for its piece-picking technology. The ReFlex TakkTile 2 is a ROS-compatible robotic gripper with three fingers. The gripper has three bending DOFs and one coupled rotational DOF. Sensing capabilities include normal pressure sensors, rotational proximal joint encoders, and fingertip IMUs.

The other demo, run by NVIDIA senior robotics researcher Karl Van Wyk, featured SynTouch tactile sensors retrofitted onto an Allegro robotic hand from South Korea-based Wonik Robotics and a KUKA LBR iiwa cobot. “It almost feels like a pet!” said Huang as he gently touched the robotic fingers, causing them to pull back. “It’s surprisingly therapeutic. Can I have one?”

Van Wyk said tactile sensors are starting to trickle out of research labs and into the real world. “There is a lot of hardening and integration that needs to happen to get them to hold up in the real world, but we’re making a lot of progress there. The world we live in is designed for us, not robots.”

The KUKA LBR iiwa wasn’t using any vision to sense its environment. “The robot can’t see that we’re around it, but we want it to be constantly sensing and reacting to its environment. The arm has torque sensing in all of the joints, so it can feel that I’m pushing on it and react to that. It doesn’t need to see me to react to me.

“We have a 16-motor hand over here with three primary fingers and an opposable thumb, so it’s like our hands. The reason you want a more complicated gripper like this is you want to eventually be able to manipulate objects in your hands like we do on a daily basis. It is very useful and makes solving physical tasks more efficient. The SynTouch sensors measure what’s going on when we’re touching and manipulating something. Keying off those sensors is important for control. If we can feel the object, we can re-adjust the grip and the finger location.”

Human-robot interaction

Huang tests a control system that enables a robot to mimic human movements. (Credit: NVIDIA)

Another interesting demo was NVIDIA’s “Proprioception Robot,” the work of Dr. Madeline Gannon, a multidisciplinary designer nicknamed the “Robot Whisperer” who is inventing better ways to communicate with robots. The system, which uses a two-armed ABB YuMi and a Microsoft Kinect placed on the floor beneath the robot, mimics the movements of the person standing in front of it.

“With YuMi, you don’t need a roboticist to program a robot. Using NVIDIA’s motion generated algorithms, we can have engaging experiences with lifelike robots.”

You might have heard of Gannon’s recent work at the World Economic Forum in September 2018. She installed 10 industrial robot arms in a row and linked them through a single central controller. Using depth sensors at their bases, the robots tracked and responded to the movements of people passing by.

“There are so many interesting things that we could spin off in our pursuit of a general AI robot,” said Huang. “For example, it’s very likely that in the near future you’ll have ‘exo-vehicles’ around you, whether it’s an exoskeleton or an exo-something that helps people who are disabled, or helps us be stronger than we are.”
