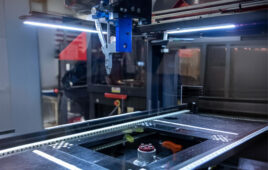

Assembly of deformable materials, such as those used in apparel, can be automated in ways other than duplicating manual sewing. Source: CreateMe

For more than 200 years, the sewing machine has defined how clothing is made. It mechanized the artisan’s hand, but it also anchored the industry around a single idea: thread pulled through fabric. Despite advances in robotics and automation, most garments still rely on that same logic, with human labor providing the dexterity, alignment, and exception handling for deformable materials that machines struggle to replicate.

The constraint is not a lack of effort. It is that most approaches are trying to automate a process that was never designed for machines.

Traditional automation excels at rigid, predictable work such as welding, assembly, and handling of stable materials. Fabric behaves differently. It stretches, wrinkles, collapses, and changes state throughout a task. When materials deform, robots struggle not because they cannot move precisely, but because they cannot reliably estimate material state or adjust to changing conditions.

That gap points to a broader challenge in manufacturing: building systems that can perceive, reason about contact, and adapt in real time rather than simply replaying pre-scripted motions. That is the promise of physical AI.

From deformable demos to production

Progress is real. Advances in vision, simulation, perception, and robot intelligence are moving dexterous manipulation from lab demonstrations toward deployment. But the bar for commercialization is not whether a robot can complete a task once. It is whether it can run continuously, across variation, with acceptable throughput, yield, and recovery.

These approaches are now being tested in production environments, where performance is measured in uptime, cycle time, and the engineering effort required to keep systems running. Deformable materials expose the gap between a good demo and a deployable system very quickly.
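To make those production measures concrete, here is a minimal sketch of how uptime, yield, and cycle time might be computed from a log of robot cycles. The `Cycle` record, the `production_metrics` function, and the metric definitions are illustrative assumptions, not a description of any particular factory system.

```python
from dataclasses import dataclass


@dataclass
class Cycle:
    # Hypothetical per-cycle record; field names are assumptions.
    duration_s: float  # seconds the cell spent on this attempt
    good: bool         # True if the finished part passed inspection


def production_metrics(cycles, downtime_s):
    """Summarize a run from per-cycle records plus total downtime in seconds."""
    if not cycles:
        raise ValueError("need at least one cycle")
    run_s = sum(c.duration_s for c in cycles)
    total_s = run_s + downtime_s
    good = sum(1 for c in cycles if c.good)
    return {
        # Assumed definition: share of scheduled time spent running cycles.
        "uptime": run_s / total_s,
        # Assumed definition: share of attempts that produced a good part.
        "yield": good / len(cycles),
        "mean_cycle_time_s": run_s / len(cycles),
        # Combines all three: good output per hour of scheduled time.
        "good_parts_per_hour": good / total_s * 3600,
    }


# Four 30-second cycles, one reject, and 60 seconds of downtime.
shift = [Cycle(30.0, True), Cycle(30.0, True), Cycle(30.0, False), Cycle(30.0, True)]
metrics = production_metrics(shift, downtime_s=60.0)
print(metrics)
```

The last figure is the one a demo tends to hide: a cell can show a respectable cycle time and still deliver few good parts per hour once rejects and stoppages are counted.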

Why apparel is a demanding testbed

Apparel is one of the hardest commercial testbeds for physical AI. Few manufacturing categories combine this much physical variability—fabric type, drape, stretch, silhouette, stack-up, and construction—with this level of global scale and cost pressure.

If a system can reliably perceive, predict, and control cloth, it develops a transferable foundation for handling flexible materials more broadly. Fabric handling is not a niche problem. It is a practical test of materially aware manipulation.

The difficulty is that many efforts start by trying to automate sewing itself—preserving the hardest parts of the problem instead of removing them.

Redesign the process, don’t just automate it

A more scalable approach is to redesign manufacturing around what robots can control.

Instead of replicating needle-and-thread workflows, garments can be treated as forms to be shaped and bonded rather than pierced and stitched. This changes the structure of the problem.

In practice, the challenge is less “teach the robot to handle fabric” and more “make fabric behave in a way a robot can learn from.”

Deformable materials are inherently unstable. Learning-based manipulation only becomes reliable when the system introduces constraints and a consistent reference geometry.

Single-sided access reduces occlusion and coordination complexity. Three-dimensional molds and fixtures stabilize geometry and improve observability. Purpose-built grippers provide finer control over soft, porous materials. Bonded assembly removes several constraints imposed by needles and thread.

Together, these choices create a more controlled environment in which perception, planning, and learning can generalize. This is the central point: for deformable assembly, process design and intelligence are inseparable.

These systems work not because AI is layered onto existing workflows, but because robotics, joining methods, and learning-based control are designed as a single, integrated system.

Bonding also introduces a different kind of flexibility. Adhesive patterns can encode stretch, durability, and performance directly into the joint. In effect, the joint becomes programmable, not just mechanical. With closed-loop feedback, placement and curing can adjust to the material in front of the system rather than an idealized baseline. Each operation becomes both a manufacturing step and a source of data.
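The closed-loop idea can be illustrated with a toy correction loop: measure how far a part sits from its target, apply a fraction of the correction, and measure again. This is a sketch under stated assumptions, not CreateMe's control method. The `SimulatedPanel` class, the `correct_placement` function, and the gain and tolerance values are all hypothetical.

```python
class SimulatedPanel:
    """Stand-in for a camera plus actuator; a real cell would replace both methods."""

    def __init__(self, offset_mm):
        self.offset_mm = offset_mm  # signed distance from the target position

    def measure_offset_mm(self):
        return self.offset_mm

    def move_mm(self, delta_mm):
        self.offset_mm += delta_mm


def correct_placement(measure, move, gain=0.5, tol_mm=0.1, max_steps=20):
    """Nudge the part until the measured offset is within tol_mm; return moves used."""
    for moves in range(max_steps):
        error_mm = measure()
        if abs(error_mm) <= tol_mm:
            return moves
        # Proportional step: correct only part of the error, then re-measure,
        # because the material may not respond exactly as commanded.
        move(-gain * error_mm)
    raise RuntimeError("offset did not converge within max_steps")


panel = SimulatedPanel(offset_mm=4.0)
moves_used = correct_placement(panel.measure_offset_mm, panel.move_mm)
print(moves_used, panel.offset_mm)
```

Each measurement taken inside the loop is also a logged observation of how the material responded, which is the sense in which an operation doubles as a source of data.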

When learning compounds in production

In this model, capability comes less from hard-coded motion and more from learned behavior. Skills such as alignment, flattening, and placement can transfer across products and materials. Over time, performance improves through data rather than repeated retooling.

This does not eliminate the need for hardware or process discipline. But it changes how systems adapt. Instead of rebuilding workflows for each variation, systems can generalize within defined constraints.

That shift has implications for manufacturing architecture. When improvement is software-driven, production can become more responsive to demand, with shorter lead times and less reliance on large, fixed production runs.

Robotic handling of deformables extends beyond apparel

Apparel is a useful proving ground, but the implications extend well beyond clothing. The same challenges appear in automotive interiors, medical textiles, furniture, and aerospace composites, where variable materials, complex geometries, and tight tolerances are common.

Deformable assembly is not a niche application. It is a foundational capability for industries working with soft goods, technical textiles, laminates, and other variable materials.

From demonstration to production reality

The field is now being evaluated on production terms: uptime, yield, cycle time, and the effort required to keep systems running. That transition is necessary. It is what turns physical AI from an experimental approach into a practical one.

The next phase of automation will be defined not only by faster machines but by systems that can estimate material state, adapt to variation, and improve with use.

The next wave of manufacturing will not be won by automating legacy processes, but by redesigning them for intelligence.

About the author

Cam Myers is founder, CEO, and a board member of CreateMe, which is building the infrastructure for automated manufacturing of soft materials, starting with apparel. The company replaces traditional sewing with digitally bonded construction powered by robotics, proprietary adhesives, and AI-driven manufacturing systems, built on the belief that the “future of fashion is bonded.”

Myers holds 25 patents in apparel automation technologies developed at CreateMe.

Prior to founding the company, he was on the founding executive team of Group Commerce, a venture-backed ecommerce platform ultimately acquired by Blackhawk Network. Myers previously held roles at DoubleClick and Allen & Co.
