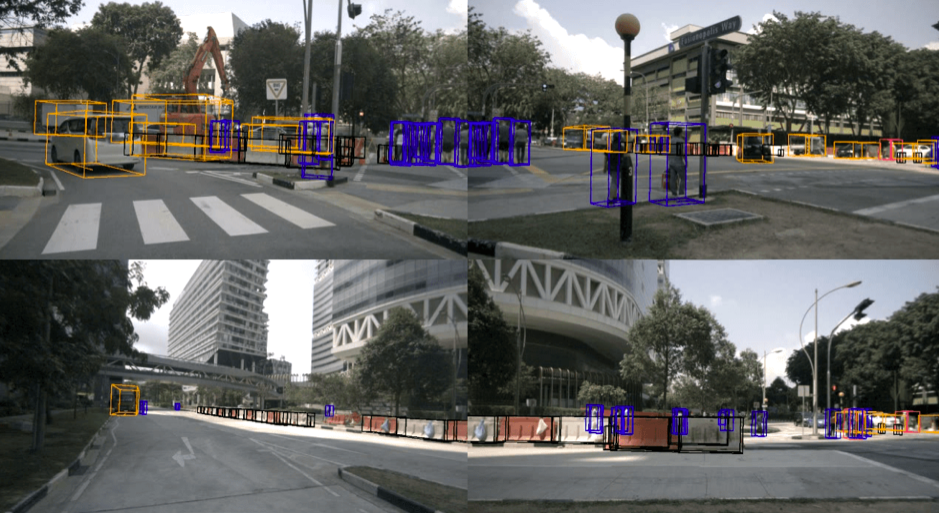

The nuScenes dataset was created by nuTonomy and Scale. (Credit: nuScenes)

Data is the key to developing reliable self-driving cars. But it’s about having the right data, not necessarily the most data. And nuTonomy, a Boston-based self-driving vehicle company recently purchased by Delphi for $450 million, believes it has the right data to allow researchers across the world to develop safe autonomous vehicles.

nuTonomy released a self-driving dataset called nuScenes that it claims is the “largest open-source, multi-sensor self-driving dataset available to public.” According to nuTonomy, other self-driving datasets such as Cityscapes, Mapillary Vistas, Apolloscapes, and Berkeley Deep Drive focused only on camera-based object detection.

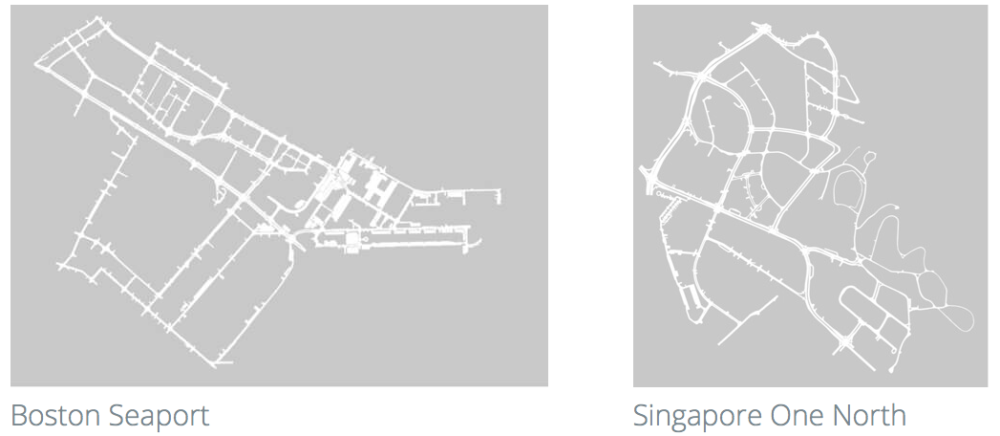

The nuScenes dataset comprises 1,000 scenes collected in Boston and Singapore, two cities with dense traffic and challenging driving environments where nuTonomy tests its self-driving cars. It includes 1.4 million camera images, 400,000 lidar sweeps, 1.3 million radar sweeps, and 1.1 million object bounding boxes in 40,000 keyframes.

All of this data has been meticulously labeled with Scale’s Sensor Fusion Annotation API, which taps AI and teams of humans for data annotation. Every object in the nuScenes dataset comes with a semantic category, as well as a 3D bounding box and attributes for each frame in which it occurs. Compared to 2D bounding boxes, 3D boxes allow nuTonomy to accurately infer an object’s position and orientation in space. Here’s the list of annotations available with the launch of nuScenes.

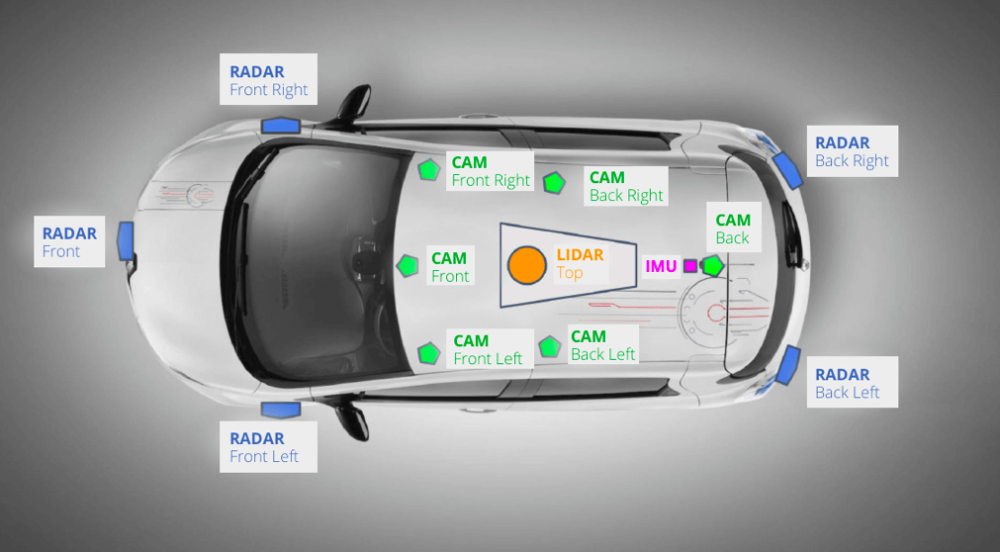

The nuScenes dataset was captured by two nuTonomy vehicles with this sensor suite. (Credit: nuScenes)

Related: UC Berkeley open-sources BDD100K self-driving dataset

The nuScenes data was captured using a combination of six cameras, one lidar, five radars, GPS, and an inertial measurement sensor. nuTonomy used two Renault Zoe cars with identical sensor layouts to drive in Boston and Singapore. The data was gathered from a research platform and is not indicative of the setup used in nuTonomy-Aptiv products.

nuTonomy says the driving routes in Boston and Singapore were carefully chosen to capture challenging scenarios. “We aim for a diverse set of locations, times and weather conditions. To balance the class frequency distribution, we include more scenes with rare classes (such as bicycles).” Below is an image of the respective driving routes. nuScenes also has an online tool with sample scenes that have been captured.

“We’re proud to provide the annotations … as the most robust open source multi-sensor self-driving dataset ever released,” said Scale CEO Alexandr Wang. “We believe this will be an invaluable resource for researchers developing autonomous vehicle systems, and one that will help to shape and accelerate their production for years to come.”

Related: MapLite enables autonomous vehicles to navigate unmapped roads

Starting in 2019, nuScenes will organize challenges on object detection and other computer vision tasks to provide a benchmark for measuring performance and advancing the state of the art. nuScenes can also be used for 2D object detection: using the known camera calibration parameters, the 3D object bounding boxes can be projected into any of the cameras, which yields boxes for 2D object detection as well.
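
To make the projection step concrete, here is a minimal sketch in plain Python. It is not the nuScenes tooling; the function names, the axis convention, and the calibration values are all assumptions made for illustration. It also assumes the box has already been transformed into the camera's coordinate frame, and it uses a simple pinhole camera model with no lens distortion and no clipping to the image borders.

```python
import math


def box_corners(center, size, yaw):
    """Return the 8 corners of a 3D box given in the camera frame.

    Assumed convention (illustrative only): x right, y down, z forward;
    size = (width along x, height along y, length along z);
    yaw is a rotation about the vertical y axis, in radians.
    """
    cx, cy, cz = center
    w, h, l = size
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (-w / 2, w / 2):
        for dy in (-h / 2, h / 2):
            for dz in (-l / 2, l / 2):
                # Rotate the corner offset about the y axis, then translate.
                x = cx + c * dx + s * dz
                z = cz - s * dx + c * dz
                corners.append((x, cy + dy, z))
    return corners


def project_to_2d_box(corners, fx, fy, cx, cy):
    """Project corners with a pinhole model and return (xmin, ymin, xmax, ymax).

    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    Returns None if any corner lies at or behind the image plane,
    a case this sketch does not try to handle.
    """
    if any(z <= 0 for _, _, z in corners):
        return None
    us = [fx * x / z + cx for x, _, z in corners]
    vs = [fy * y / z + cy for _, y, z in corners]
    return (min(us), min(vs), max(us), max(vs))


if __name__ == "__main__":
    # Hypothetical car-sized box 10 m in front of a hypothetical camera.
    corners = box_corners(center=(0.0, 0.0, 10.0), size=(2.0, 2.0, 4.0), yaw=0.0)
    print(project_to_2d_box(corners, fx=1000.0, fy=1000.0, cx=800.0, cy=450.0))
```

The 2D box is simply the tightest axis-aligned rectangle around the eight projected corners, so it tends to be slightly looser than a hand-drawn 2D label. A fuller pipeline would also apply the sensor and vehicle poses to bring each box into the camera frame and would clip the result to the image size.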

Scale, whose autonomous vehicle customers also include Lyft, General Motors (through its Cruise business unit), Zoox, and Nuro, has raised a total of $22.7 million to date. Since its founding in 2016, it has labeled more than 200,000 miles of autonomous driving data for clients including Lyft, Voyage, General Motors, Zoox, and Embark.
