As robots are deployed in increasingly complex and dynamic environments and applications, programming them has become equally challenging and time-consuming. Natural tasking is an approach to robot control that can reduce the difficulty of programming robotic systems.

An application might require the coordination of multiple robots in a single workspace, the integration of moving parts tracked by vision systems, or the completion of complex tasks such as welding. The more complex the application, the harder it is to program using conventional methods of direct motion control. This can delay the launch of innovative tools and reduce the potential productivity, quality, and safety benefits of using robots.

Natural tasking allows the user to specify what the robot should do rather than how to do it. Jeff Sprenger, director of business development at Bedford, Mass.-based Energid Technologies, spoke with The Robot Report about what natural tasking is and how it can be used to simplify programming and enable more sophisticated robotics applications.

Why do you call it “natural tasking”?

Consider how humans move their arms, wrists, and hands to grasp objects and then place them. Depending on the object, such as a cup, pen, ball, or block, there are multiple ways to grasp, but we do it in a way that feels natural and comfortable. We do this to minimize movement and avoid the over-rotation of our wrist or awkward placement of our elbow, as well as to take advantage of the symmetry of the object.

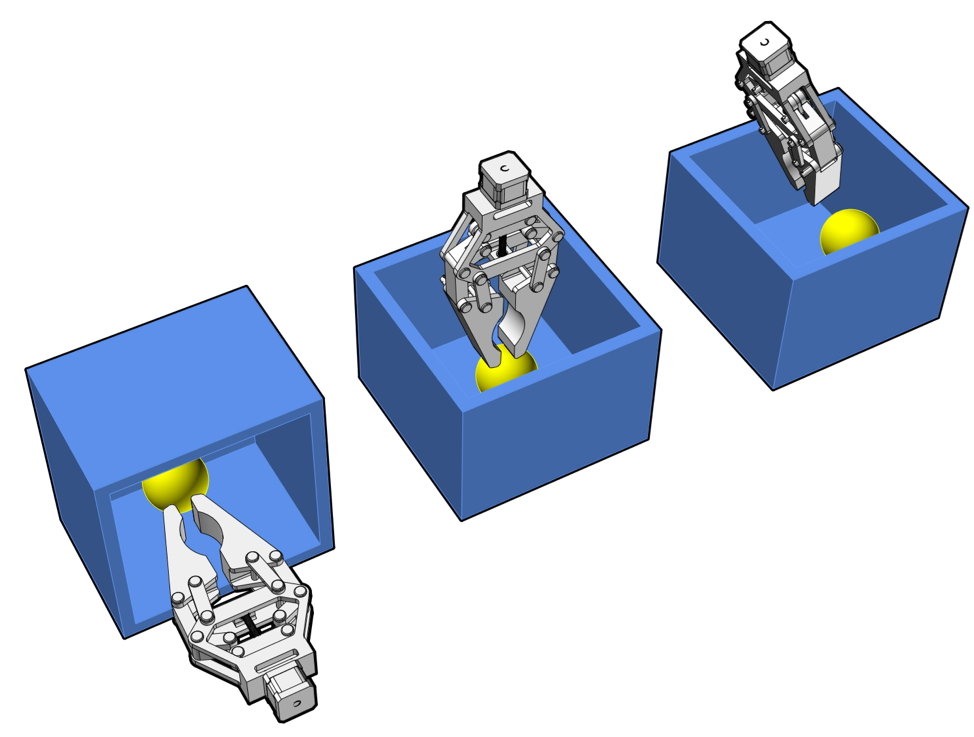

For instance, a glass can be grasped from different angles around its outer surface. Each grasp results in a different set of joint angles or poses. Natural tasking uses the multiple possible pose solutions and chooses one that minimizes metrics such as kinetic energy and avoids joint limits and obstacles. The result is a natural motion that is optimized mathematically for efficiency.

There are three approaches to grasping this sphere. The control system uses a 3-DOF constraint (position only) and optimizes the approach by automatically rotating the gripper to avoid collision with the box. Source: Energid
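The selection among candidate grasps described above can be sketched in a few lines. This is only an illustration, not Energid's method: the `Candidate` type, the joint limits, the weights, and the three sample poses are all hypothetical, and a weighted squared joint displacement stands in for a kinetic-energy metric.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    joints: list  # joint angles in radians (hypothetical values)


def within_limits(joints, limits):
    """True if every joint angle lies inside its (low, high) limit."""
    return all(lo <= q <= hi for q, (lo, hi) in zip(joints, limits))


def motion_cost(current, target, weights):
    """Weighted squared joint displacement, a crude stand-in for kinetic energy."""
    return sum(w * (t - c) ** 2 for w, c, t in zip(weights, current, target))


def choose_grasp(current, candidates, limits, weights):
    """Drop candidates that violate joint limits, then pick the cheapest one."""
    feasible = [c for c in candidates if within_limits(c.joints, limits)]
    if not feasible:
        return None
    return min(feasible, key=lambda c: motion_cost(current, c.joints, weights))


# Assumed example data: a three-joint arm and three ways to reach the object.
current = [0.0, 0.0, 0.0]
limits = [(-3.0, 3.0), (-2.0, 2.0), (-2.0, 2.0)]
weights = [3.0, 2.0, 1.0]  # heavier proximal joints cost more to move
candidates = [
    Candidate("top", [0.2, 0.5, 2.5]),    # third joint exceeds its limit
    Candidate("side", [1.0, 0.3, -0.4]),
    Candidate("front", [0.4, 0.6, 0.5]),
]
best = choose_grasp(current, candidates, limits, weights)
```

With these numbers the "top" grasp is rejected for exceeding a joint limit, and "front" is selected because it needs less weighted joint travel than "side". A real controller would also fold obstacle clearance into the cost.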

Natural tasking sounds simple, but how does it work?

The idea is to reduce complexity through abstraction, which reduces a high-dimension problem to an easier-to-solve, lower-dimension problem. If you try to move your arm by concentrating on the individual movements and joint angles of the shoulder, elbow, and wrist, the problem becomes complex.

Instead, we guide the hand using visual or tactile feedback and depend on our motor and sensory systems to adjust our reach and grasp using a comfortable pose. We use “proprioception” — the body’s ability to sense its location, movements, and actions — along with pain sensors to guide our movements and keep us from over-extending or over-rotating.

If you consciously attempt to consider every possible change in every available degree of freedom [DOF], the problem becomes much more complex. There are simply too many changes that need to be made.

Alternatively, you can break the problem down into different layers of abstraction: position and velocity control at the joint level; object recognition for location and orientation; end-effector guidance to the target; and obstacle avoidance for the arm, the end effector, and any attached objects.

For robotic control, the separation of the task and motion-control layers allows the engineer to abstract the position and orientation of a part or any other object in the environment. If the task is specified by a moving reference frame attached to the part, then the robot can grasp that part anywhere in the workspace. A computer vision tracking system can update the position of the part in real time, and the natural task is just to grasp the part.
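As a rough illustration of a task defined in a moving reference frame, the sketch below works in the plane, where a pose is `(x, y, theta)`. The grasp offset `GRASP_IN_PART` and the function names are assumptions made for this example. The grasp is written once, relative to the part, and its world-frame target is recomputed whenever a tracker reports a new part pose.

```python
import math


def compose(parent, child):
    """Express a planar pose given in the parent frame in the world frame.

    Poses are (x, y, theta) with theta in radians.
    """
    px, py, pt = parent
    cx, cy, ct = child
    return (
        px + math.cos(pt) * cx - math.sin(pt) * cy,
        py + math.sin(pt) * cx + math.cos(pt) * cy,
        pt + ct,
    )


# Hypothetical grasp pose, fixed relative to the part: 10 cm along the
# part's y axis, gripper turned a quarter turn clockwise.
GRASP_IN_PART = (0.0, 0.10, -math.pi / 2)


def grasp_target(part_pose_world):
    """World-frame gripper target for the latest tracked part pose."""
    return compose(part_pose_world, GRASP_IN_PART)


# The same task definition follows the part as the tracker updates its pose.
target_a = grasp_target((1.0, 2.0, 0.0))
target_b = grasp_target((1.0, 2.0, math.pi / 2))
```

The task itself never changes; only the part pose fed into `grasp_target` does, which is what lets the robot grasp the part anywhere in the workspace.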

That makes sense, but how is it accomplished?

Natural tasking exploits a robot’s kinematic redundancy by matching its task space with the constraint space. For example, if we need a six-axis robot to apply adhesive or perform a weld, the application only requires five degrees of constraint, because the tool is free to rotate about its long axis, like a pen. If we over-constrain the robot by forcing all six DOF, the result can be unnatural and inefficient motion.

By matching the task and constraint space, we instead have one DOF that can be exploited to find the optimal path and avoid collision. Natural tasking uses software, sensors, and tracked markers in the environment to automatically calculate the robot’s movement, independent of its position and orientation.
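The spare degree of freedom can be illustrated with a smaller planar analog: a three-joint arm whose task constrains only the two position coordinates of the tip, leaving one DOF free. The sketch below is an assumption-laden toy, not a six-axis solver. It builds the 2x3 position Jacobian and finds the joint-velocity direction that, to first order, leaves the tip where it is. For a 2x3 matrix that direction is the cross product of the two rows.

```python
import math


def jacobian(q, lengths):
    """2x3 tip-position Jacobian of a planar three-link arm (rows jx, jy)."""
    l1, l2, l3 = lengths
    a1 = q[0]
    a2 = q[0] + q[1]
    a3 = q[0] + q[1] + q[2]
    jx = [
        -l1 * math.sin(a1) - l2 * math.sin(a2) - l3 * math.sin(a3),
        -l2 * math.sin(a2) - l3 * math.sin(a3),
        -l3 * math.sin(a3),
    ]
    jy = [
        l1 * math.cos(a1) + l2 * math.cos(a2) + l3 * math.cos(a3),
        l2 * math.cos(a2) + l3 * math.cos(a3),
        l3 * math.cos(a3),
    ]
    return jx, jy


def null_direction(q, lengths):
    """Joint-velocity direction that does not move the tip (first order).

    The cross product of the Jacobian rows is orthogonal to both rows,
    so the Jacobian maps it to zero tip velocity.
    """
    jx, jy = jacobian(q, lengths)
    return [
        jx[1] * jy[2] - jx[2] * jy[1],
        jx[2] * jy[0] - jx[0] * jy[2],
        jx[0] * jy[1] - jx[1] * jy[0],
    ]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# Assumed configuration (radians) and link lengths (metres).
q = [0.3, 0.6, -0.4]
lengths = (0.4, 0.3, 0.2)
n = null_direction(q, lengths)
jx, jy = jacobian(q, lengths)
```

An optimizer can move the joints along `n` to stay away from joint limits or obstacles while the tip constraint remains satisfied. The six-axis, five-constraint case works the same way in higher dimensions.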

The motion layer computes the inverse kinematics, defining the set of joint angles that locates the end effector in the desired position and orientation. To move the end effector from Point A to Point B, the motion control computes a viable path, continually checking for collisions, both with the robot itself and with other objects in the environment, along the path while in motion.
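To make "computing the inverse kinematics" concrete, here is a minimal closed-form sketch for a two-link planar arm, which is far simpler than the arms discussed in this interview. The link lengths and target are assumed values. Even this small case returns more than one joint solution (elbow on either side), which is the kind of choice the motion layer has to resolve.

```python
import math


def forward(q1, q2, l1, l2):
    """Tip position of a planar two-link arm for joint angles q1, q2."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def inverse(x, y, l1, l2):
    """Return all (q1, q2) pairs that place the tip at (x, y).

    Uses the law of cosines; returns an empty list if the target is
    out of reach.
    """
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0:
        return []  # target is outside the reachable workspace
    s2_mag = math.sqrt(1.0 - c2 * c2)
    solutions = []
    for s2 in (s2_mag, -s2_mag):  # the two elbow configurations
        q2 = math.atan2(s2, c2)
        q1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
        solutions.append((q1, q2))
    return solutions


# Assumed link lengths (metres) and target point.
L1, L2 = 0.4, 0.3
solutions = inverse(0.5, 0.2, L1, L2)
unreachable = inverse(1.0, 0.0, L1, L2)
```

Feeding each returned pair back through `forward` reproduces the target, which is a quick sanity check. Collision checking along the path would be layered on top of this and is not shown.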

By leaving the automated collision avoidance to the motion controller, the engineer can focus on higher-level tasks.

What are some common automation challenges that natural tasking can solve?

Nearly any application that involves a high number of DOF is a good candidate for natural tasking. That could be the coordination of multiple six-axis robotic arms working on a single task, or a six-axis robot on a mobile platform such as a rail or motorized car.

Robotic welding is a good example of a task that can be performed using multiple robot arms, with one arm controlling the welding tool while the others hold the parts to be welded. If each robot has seven DOF, the kinematic solver has to determine how best to use the 21 DOF across all three robots to adjust the part and tool orientation and position to meet the motion constraints of the tool along the weld path.

Another example is a sophisticated production line in which multiple robot arms work in coordination to combine a set of parts into a single assembly, which is defined using a CAD system. The problem is stated at a higher level, with the control system left to figure out how to position and move the arm to manipulate objects in the environment.

The robot must be able to recognize the parts in arbitrary order and orientation on multiple trays, attach the appropriate grippers, grasp and hold parts together during assembly, test the fit with visual inspection, and then continue to the next steps in assembly. Assembling multiple parts can include insertion of one part into another using force feedback to ensure a correct fit.

What are the advantages of natural tasking for this application?

Programming the coordination of these robots can take weeks if it is described using motion primitives that move a set of joints on the robot arm. If the company later needs to automate a similar assembly with somewhat different parts, then that long, complex programming task has to be repeated.

With natural tasking, the assembly is described in high-level terms that move and combine parts regardless of location or orientation. It handles real-time object collision avoidance along the way. Changing the assembly to use a different but similar part takes a few hours: update the task, retest in simulation, and then test directly on the physical robot hardware.

How can a robot programmer take this approach?

The natural tasking approach relies on implementation of the following layers of control:

- Motor/servo control, which ensures that the robot actuators are moving to the desired position at the right time by controlling the current supplied to the motor using position encoding feedback.

- Motion control, which moves a combination of robot joints to achieve a required pose at a specified tool offset at a specified time.

- Task control, which decomposes the task into a series of motion primitives. The tasks can be programmed using a scripting language rather than C++. Motion primitives include coordinated joint motions, end-effector motions — linear and circular, based on the world coordinate frame or link frame — and tool paths, as well as path planning in the known environment. These motions can be defined relative to some object, which allows the system to handle moving parts or targets.
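The task layer described above might be sketched as follows. Every name here (`Primitive`, `pick_and_place`, the frame names, the primitive kinds) is hypothetical and chosen only for illustration; none of it is taken from Actin or any other product. The point is that each motion target is expressed relative to a named object frame, so the same task can be retargeted by swapping the frame.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Primitive:
    kind: str      # hypothetical kinds: "linear_move" or "grip"
    frame: str     # name of the object frame the target is relative to
    target: tuple  # offset in that frame (metres) or a gripper command


def pick_and_place(part_frame, place_frame, approach=0.05):
    """Decompose a pick-and-place task into frame-relative motion primitives."""
    above = (0.0, 0.0, approach)
    at = (0.0, 0.0, 0.0)
    return [
        Primitive("linear_move", part_frame, above),   # hover over the part
        Primitive("linear_move", part_frame, at),      # descend to the grasp
        Primitive("grip", part_frame, ("close",)),
        Primitive("linear_move", part_frame, above),   # lift clear
        Primitive("linear_move", place_frame, above),  # travel to the target
        Primitive("linear_move", place_frame, at),     # lower into place
        Primitive("grip", place_frame, ("open",)),
    ]


# Retargeting the task to a different part only changes the frame name.
plan_a = pick_and_place("part_A", "fixture_1")
plan_b = pick_and_place("part_B", "fixture_1")
```

The motion layer would resolve each frame name to a live pose at execution time, so neither plan contains absolute coordinates.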

Natural tasking allows the programmer to describe the desired start and end points of the end effector relative to objects in the environment. Meanwhile, the automated control system uses optimization to find the best path choosing from multiple solutions.

The human describes the task, the motion layer breaks that down into motion primitives with adjustments in real time according to environment changes, and the motor layer drives the robotic actuators to their desired position and velocity.

Each layer operates at a different update rate, such as 10 kHz for the motor, 500 Hz for the motion, and 0.5 to 10 Hz for each task, allowing each layer to react to different types of disturbances to the system.
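One simple way to picture the layered update rates is a single base clock with each layer firing on every Nth tick. The sketch below only counts updates and does no control. The function name and the single-clock scheduling scheme are assumptions for this example, and the task layer is taken at 10 Hz, the top of the quoted range.

```python
def run_layers(duration_s, rates_hz, base_hz=10_000):
    """Count how often each layer updates when driven from one base clock.

    Each layer fires every base_hz / rate ticks; rates are assumed to
    divide the base rate evenly.
    """
    periods = {name: round(base_hz / hz) for name, hz in rates_hz.items()}
    counts = {name: 0 for name in rates_hz}
    for tick in range(int(duration_s * base_hz)):
        for name, period in periods.items():
            if tick % period == 0:
                counts[name] += 1
    return counts


# Rates quoted in the interview, with the task layer at 10 Hz.
counts = run_layers(1, {"motor": 10_000, "motion": 500, "task": 10})
```

Over one simulated second the motor layer updates 10,000 times, the motion layer 500 times, and the task layer 10 times, which shows why fast disturbances have to be absorbed by the lower layers.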

Is this something robotics developers can do on their own?

A robotics system developer can do this if the correct abstractions are provided in the control software. Abstractions for the robot and its bounding volumes, as well as geometric representations for objects entering and leaving the workspace, are important. Recognizing objects and tracking their position and orientation while modeling their 3D spatial bounds is also key.

Motion-control software such as Energid’s Actin SDK specializes in providing these abstractions that facilitate natural tasking. An important quality of such a system is that it allows one robot to be replaced with another that has a different kinematic representation and can still generate a solution without recoding. Each robot, depending on degrees of freedom and kinematic redundancy, has its own natural tasking representation.

Taking advantage of that results in optimized solutions, smoother movement, and the ability to abstract the motion control problem to allow programming at the problem level rather than the control level.
