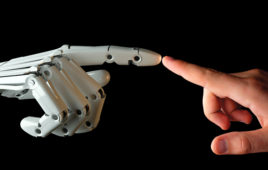

During his keynote speech at NIWeek 2014, National Instruments co-founder Jeff Kodosky posed some interesting challenges to our assumptions about programming languages. In his talk about the future of system design, he raised the problem of how computers think versus how humans think.

Computers are binary. Therein lies the problem. From the early days of relay-operated logic and giant vacuum tube computers like ENIAC, computing has been about modeling simple 0’s and 1’s in order to solve real-world problems.

Initially, most problems presented for solution on a computer were math problems. These problems were posed by loading a program written in a compiled language, presenting some data to be processed, and waiting for the answer to be returned. For those who remember running code written on “punch cards”, the deck would be dropped off for processing at a computer center and the program printout would be picked up the next day.

Characteristically, all early computing problems were of the “batch process” type. ENIAC processed the equations of motion to create artillery firing tables for the U.S. Army. Turing’s team in the UK simulated complex mechanisms to break the encryption codes created by the German military to obscure their messages.

More recently, in the 1990s, batch processing of oil field exploration data involved applying Fast Fourier Transform (FFT) calculations to understand the geological formations under the Earth’s surface. These processes ran on unique hardware and software and took many hours to complete. Today FFTs are implemented either in dedicated chips or in software, and they perform these calculations on the fly in near real time.

In many applications, unique hardware systems had to be crafted to provide the platform of execution to solve a specific problem, such as Computer Numerical Control (CNC) machining. A specific descriptive language, G-code, was created based on manual machining conventions in order to facilitate use by machinists.

This has been the evolution of machine control as a subset of general computing. There needs to be a major distinction in our thinking about machine control. Machines are time bounded: they must have a repeatable, cyclic program with a limited list of inputs, outputs, variables and mathematical processes. It is the essence of machine control to be reliable and repeatable. If it were not, manufacturing would become subject to variations and defects that are unacceptable.
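As a rough illustration of what “repeatable, with a limited list of inputs and outputs” can mean in practice, here is a minimal Python sketch of a control cycle. Everything in it is hypothetical: the signal names (`start_button`, `guard_closed`, `part_present`, `clamp`, `spindle`) and the interlock logic are invented for the example and do not come from any real controller. Timing is also left out, so the sketch shows only the repeatability property, not the time bound.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Inputs:
    """Hypothetical fixed input list for one machine."""
    start_button: bool
    guard_closed: bool
    part_present: bool


@dataclass(frozen=True)
class Outputs:
    """Hypothetical fixed output list for the same machine."""
    clamp: bool
    spindle: bool


def scan(inputs: Inputs, running: bool) -> tuple[Outputs, bool]:
    """One control cycle: depends only on the inputs and one state variable."""
    # Latch the run state on start; drop it whenever the guard opens.
    running = (running or inputs.start_button) and inputs.guard_closed
    # Only clamp and spin while running with a part in place.
    active = running and inputs.part_present
    return Outputs(clamp=active, spindle=active), running


def run(sequence: list[Inputs]) -> list[Outputs]:
    """Execute the same cycle once per input sample, in order."""
    running = False
    results = []
    for inputs in sequence:
        outputs, running = scan(inputs, running)
        results.append(outputs)
    return results


# Assumed input sequence: idle, start pressed, start released, guard opened.
SEQUENCE = [
    Inputs(start_button=False, guard_closed=True, part_present=True),
    Inputs(start_button=True, guard_closed=True, part_present=True),
    Inputs(start_button=False, guard_closed=True, part_present=True),
    Inputs(start_button=False, guard_closed=False, part_present=True),
]

if __name__ == "__main__":
    for cycle, outputs in enumerate(run(SEQUENCE), start=1):
        print(cycle, outputs)
```

Because `scan` reads nothing except its arguments, replaying the same input sequence always yields the same outputs, which is the property the paragraph above describes. A real controller would also have to guarantee that each cycle finishes within a fixed time.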

Computers began to make a gradual transition from batch processing to “real time” as solid-state electronics replaced relays and vacuum tubes with faster, more efficient devices. Since the true “digital revolution” began to take hold 25 years ago, processor gate densities and speeds have evolved rapidly from the 25 megahertz 486 processor running DOS and Windows 3.1.

This progress makes hardware systems relatively transparent with respect to executing machine control tasks, which in turn places much more emphasis on operating systems and software for efficiency and stability.