Formant says it uses NVIDIA’s Jetson with its cloud platform for next-generation robotics controls. Source: Formant

Robotics began at the edge. Early robots were massive, immobile machines operating on factory floors, with plenty of space for storing what little data they required locally. Over recent years, however, robots have left the factory floor and are moving around in an increasing number and variety of environments. These robots are no longer refrigerator-sized automata punching out widgets.

Now, rather than worrying about workers bumping into robots, we have to worry about robots bumping into workers. The new unstructured environments that autonomous systems are venturing into are invariably fraught with obstacles and challenges. Humans can assist, but we need data, lots of it, and in real time. Companies like Formant have used cloud technology to meet those needs. We’ve enabled companies to observe, operate, and analyze this new wave of robotic fleets remotely and intuitively through the use of our cloud platform.

The notion of pushing everything to the cloud all of the time, however, is beginning to come into question. Using the integrated GPU cores in NVIDIA’s Jetson platform, we can swing the pendulum back in the direction of the edge and reap its advantages. The combination of GPU-optimized edge data processing and Formant’s observability and teleoperation platform creates an efficient command and control center right out of the box.

When using Jetson devices, Formant users can now enable real-time video and image analytics in the cloud and perform PII scrubbing at the edge, ultimately sustaining more reliable connections and better privacy protections. This also allows for much greater cloud/edge portability, as the very same algorithms can run in both places. This hybridized model allows one to do away with “one-size-fits-all” solutions and opt for balance between one’s in-cloud and on-device operations.

Find balance with cutting-edge encoding

An optimal teleoperation experience requires the proper balance between latency, quality, and computational availability. In the past, striking such a balance wasn’t an easy task. In essence, prioritizing any one of these qualities came at the expense of the other two. By combining Formant’s tooling with the portability of NVIDIA’s DeepStream SDK, users can readjust that balance as needed to optimize data management for their specific use case.

Source: Formant

The most immediately useful capability we gain by using Jetson is hardware-accelerated video encoding. When Formant detects that you are using a Jetson or compatible device, it unlocks the option to automatically perform H.264 encoding at the edge. This enables high-quality transmission of full-motion video with substantially diminished bandwidth requirements, lower latency, and lower storage requirements if buffering the data for later retrieval.
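To make the "detect the device, then unlock hardware encoding" idea concrete, here is a minimal sketch in Python. It is not Formant's implementation. It assumes the board model can be read from `/proc/device-tree/model`, that a model string containing "Jetson" indicates a hardware encoder is present, and that the GStreamer elements `nvvidconv` and `nvv4l2h264enc` (hardware path) or `x264enc` (software fallback) are installed. The function names, the camera device path, and the `fakesink` placeholder are all hypothetical.

```python
from pathlib import Path

# Assumption: the board reports its model name in the Linux device tree.
MODEL_PATH = Path("/proc/device-tree/model")


def read_model(path: Path = MODEL_PATH) -> str:
    """Return the board model string, or an empty string when unavailable."""
    try:
        return path.read_text(errors="ignore").strip("\x00\n ")
    except OSError:
        return ""


def is_jetson(model: str) -> bool:
    """Heuristic only: any model string that mentions Jetson counts."""
    return "jetson" in model.lower()


def h264_pipeline(model: str, device: str = "/dev/video0") -> str:
    """Build a GStreamer launch description that encodes camera video as H.264."""
    if is_jetson(model):
        # Hardware path: convert into NVMM memory, then use the Jetson encoder.
        encode = (
            "nvvidconv ! video/x-raw(memory:NVMM),format=NV12 ! nvv4l2h264enc"
        )
    else:
        # Software fallback: CPU-bound x264 encoder tuned for low latency.
        encode = "videoconvert ! x264enc tune=zerolatency"
    # fakesink is a placeholder; a real system would send the stream onward.
    return f"v4l2src device={device} ! {encode} ! h264parse ! fakesink"


if __name__ == "__main__":
    print(h264_pipeline(read_model()))
```

Keeping detection separate from pipeline construction means the encoder choice can be exercised on any machine by passing in a model string, without Jetson hardware attached.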

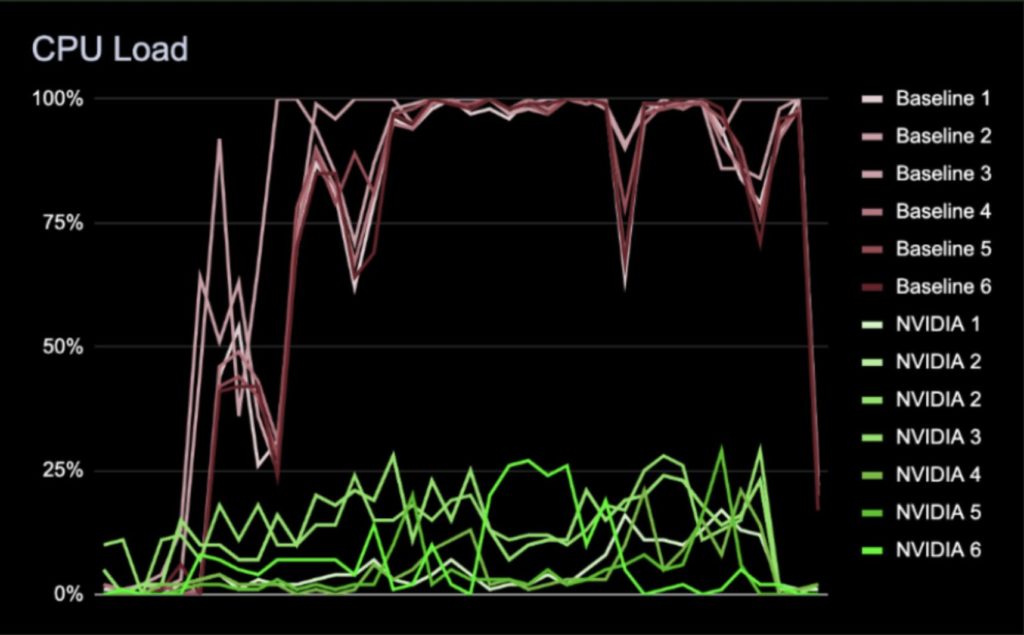

When it comes to measuring the performance of a teleoperation system, latency is one of the most important criteria to consider. This is even more so the case when dealing with video encoding, an exceedingly resource-intensive process that can easily introduce latency if pushed to the limit.

In our tests, encoding 1080p video at 30 frames per second pegged all six of our CPU cores at 100% utilization when hardware acceleration was not in use, which introduced a fair amount of latency into the pipeline. With our Jetson implementation activated, average CPU utilization for the system dropped below 25%. This not only improved latency significantly, it also freed up the CPU for other activities.

Source: NVIDIA, Formant

Jetson could lead to the future of hybrid robotics

With Formant, one has the ability to fine-tune and balance which operations occur at the edge and which occur in the cloud. We think this flexibility is a huge milestone in the business of robotics. Just imagine your chief financial officer saying that your LTE bill is way too high, your engineering team deciding that they need to use cheaper devices and smaller batteries, or regulators passing new data transmission and sovereignty rules. With the ability to determine what is done at the edge and what is done in the cloud, these otherwise heavy lifts become as simple as checking a box and swinging the pendulum.

At the moment, these are crucial decisions, and engineers must strike the edge/cloud balance themselves. Formant provides the interfaces to let you tune the system easily. Looking ahead, we envision automated, dynamic “load balancing” between the edge and the cloud. You will essentially define rules and budgets to optimize around.

For example, when your robot is idle and connected to both Wi-Fi and power, you could automatically use this spare time and power to leverage the GPU for semantic labeling and data enrichment, then upload the data to the cloud while the bandwidth is cheap.
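A rule of this kind could be sketched as follows. This is purely illustrative and does not describe an existing Formant feature: `RobotState`, `Budget`, `plan`, the task names, and the metered-data budget are all hypothetical, chosen only to show how rules and budgets might drive the edge/cloud decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RobotState:
    on_wifi: bool
    on_external_power: bool
    idle: bool


@dataclass(frozen=True)
class Budget:
    # Hypothetical budget: megabytes still allowed on the metered (LTE) link.
    metered_mb_remaining: float


def plan(state: RobotState, budget: Budget, upload_mb: float) -> list[str]:
    """Return the ordered background tasks permitted right now."""
    tasks = []
    # Rule 1: only spend GPU time on enrichment when idle and plugged in.
    if state.idle and state.on_external_power:
        tasks.append("enrich_on_gpu")
    # Rule 2: upload freely on Wi-Fi; otherwise only within the metered budget.
    if state.on_wifi:
        tasks.append("upload")
    elif upload_mb <= budget.metered_mb_remaining:
        tasks.append("upload_metered")
    return tasks


if __name__ == "__main__":
    docked = RobotState(on_wifi=True, on_external_power=True, idle=True)
    print(plan(docked, Budget(metered_mb_remaining=0.0), upload_mb=500.0))
```

Expressing the policy as a pure function of state and budget keeps the rules easy to audit and to change when, say, the LTE budget shrinks.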

There are clear reasons to choose either the cloud or the edge for your computation. It’s equally evident that this line will continue to shift and evolve.


About the author:

Jeff Linnell is founder and CEO of Formant. He was previously head of product-robotics at Google and director of robotics at X, “the moonshot factory.”
