A Tesla car demonstrates self-driving mode. Source: Tesla

In Musk, Walter Isaacson’s biography of Elon Musk, we learned how Tesla planned to use artificial intelligence in its vehicles to offer its long-awaited full self-driving mode. Year after year, the company has promised its owners Full Self Driving, but FSD remains in beta. That doesn’t stop Tesla from charging $199 per month for it, though.

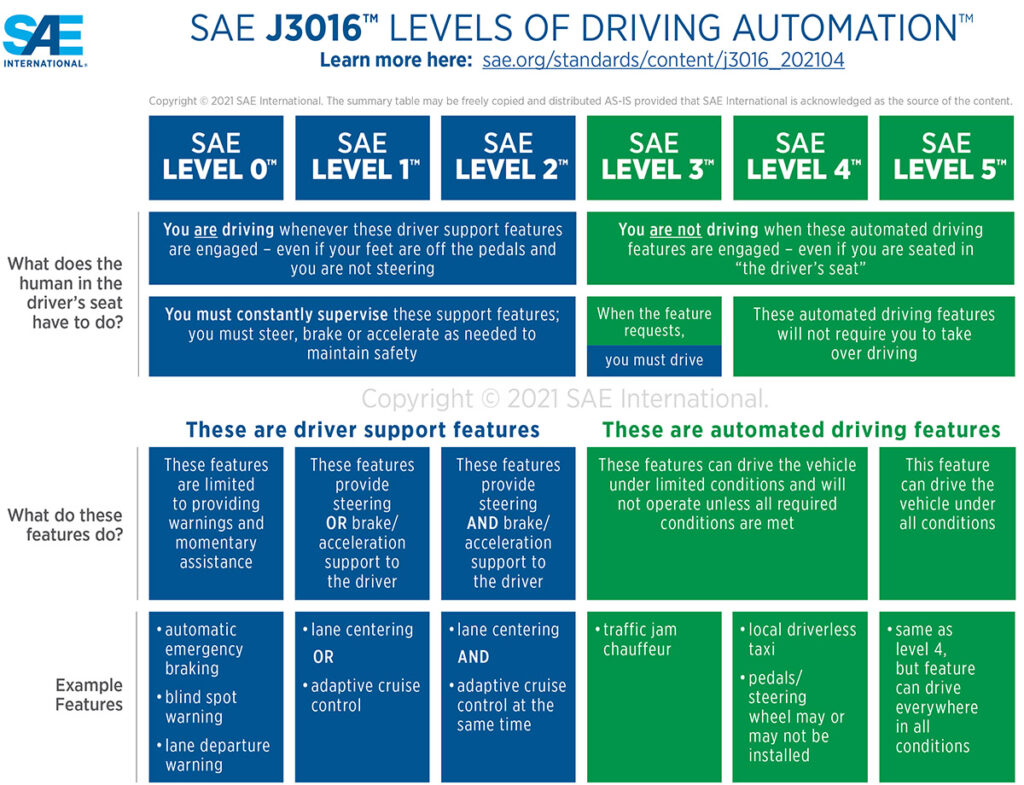

SAE International (formerly the Society of Automotive Engineers) defines Level 4 autonomy as a hands-off-the-steering-wheel mode in which a vehicle drives itself from Point A to Point B.

The only thing more magical, L5, is no steering wheel. No gas or brake pedal, either. L5 has been achieved by several companies but only for shuttles. One example was Olli, a 3D-printed electric vehicle (EV) that was a highlight of IMTS 2016, the biggest manufacturing show in the U.S.

However, Olli’s manufacturer, Local Motors, ran out of money and closed its doors in January 2022, a month after one of its vehicles that was being tested in Toronto ran into a tree.

SAE J3016 levels of autonomous driving. Source: SAE International

Edge cases uncover challenges

The traditional approach to L4 self-driving cars has been to program for every imaginable traffic situation with an “If this, then that” nested algorithm. For example, if a car turns in front of the vehicle, then drive around—if the speeds allow it. If not, stop.
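To make the situation/response idea concrete, here is a minimal sketch of the single rule described above. The `Situation` fields and action names are hypothetical, chosen only for illustration; no real AV stack is claimed to look like this.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    # Hypothetical inputs a perception system might supply.
    car_turning_ahead: bool
    can_steer_around: bool  # assumed to mean "the speeds allow it"


def respond(s: Situation) -> str:
    """One hand-written rule from a situation/response library."""
    if s.car_turning_ahead:
        if s.can_steer_around:
            return "drive_around"
        return "stop"
    # Anything not covered by a rule falls through to a default.
    return "continue"


print(respond(Situation(car_turning_ahead=True, can_steer_around=False)))
```

A real library would need thousands of such rules, and every situation that no rule anticipates lands in the default branch.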

Programmers have created libraries of thousands upon thousands of situations/responses … only to have “edge cases,” unfortunate and sometimes disastrous events, keep maddeningly coming up.

Teslas and other self-driving vehicles, notably robotaxis, have come under increasing scrutiny. The National Highway Traffic Safety Administration (NHTSA) investigated Tesla for its role in 16 crashes with safety vehicles when the company’s vehicles were in Autopilot or Full Self Driving mode.

In March 2018, an Uber robotaxi with an inattentive human behind the wheel ran into a person walking their bike across a street in Tempe, Ariz., and killed her. Recently, a Cruise robotaxi ran into a pedestrian and dragged her 20 feet.

Self-driving car companies have tried to downplay such incidents, suggesting that they are few and far between and that autonomous vehicles (AVs) are a safe alternative to human drivers, who kill 40,000 people every year in the U.S. alone. Those attempts have been unsuccessful. It’s not fair, say the technologists. It’s zero tolerance, says the public.

Musk claims to have a better way

Elon Musk is hardly one to accept a conventional approach, such as the situation/response library. A creator of the “move fast and break things” movement, now the mantra of every wannabe disruptor startup, he said he had a better way.

The better way was learning how the best drivers drove and then using AI to apply their behavior in the Tesla’s Full Self Driving mode. For this, Tesla had a clear advantage over its competitors. Since the first Tesla rolled into use, the vehicles have been sending videos to the company.

In “The Radical Scope of Tesla’s Data Hoard,” IEEE Spectrum reported on the data Tesla vehicles have been collecting. While many modern vehicles are sold with black boxes that record pre-crash data, Tesla vehicles go the extra mile, collecting and keeping extended route data.

This came to light when Tesla used the extended data to exonerate itself in a civil lawsuit. Tesla was also suspected of storing millions of hours of video—petabytes of data. This was revealed in Musk’s biography, which said he realized that the video could serve as a learning library for Tesla’s AI, specifically its neural networks.

From this massive data lake, Tesla employees identified the best drivers. From there, it was simple: Train the AI to drive like the good drivers. Like a good human driver, Teslas would then be able to handle any situation, not just those in the situation/response libraries.
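The idea of copying good drivers is often called imitation learning. The toy sketch below is an assumption-laden illustration, not Tesla’s method: it replaces a neural network with a nearest-neighbor lookup, and the demonstration data, state features, and action names are all invented.

```python
import math

# Hypothetical demonstrations from "good" drivers:
# (gap to vehicle ahead in meters, own speed in m/s) -> action taken
DEMOS = [
    ((5.0, 15.0), "brake"),
    ((10.0, 25.0), "brake"),
    ((40.0, 15.0), "hold"),
    ((80.0, 10.0), "accelerate"),
]


def imitate(state):
    """Return the action of the closest demonstrated state (1-nearest-neighbor)."""
    _, action = min(DEMOS, key=lambda demo: math.dist(demo[0], state))
    return action


# A state that never appears in the demonstrations still gets an answer.
print(imitate((6.0, 14.0)))
```

Unlike a rule library, the policy produces a response for states nobody wrote a rule for, though the quality of that response depends entirely on how well the demonstrations cover the situation.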

Is Tesla’s self-driving mission possible?

Whether AI can replace a human behind the wheel remains to be seen. Tesla still charges thousands a year for Full Self Driving but has failed to deliver the technology.

Tesla has been passed by Mercedes, which attained Level 3 autonomy with its fully electric EQS vehicles last year.

Meanwhile, opponents of AVs and AI grow stronger and louder. In San Francisco, Cruise was practically drummed out of town after an October incident in which it allegedly failed to show the video of one of its vehicles dragging a pedestrian, who was pinned underneath the car, for 20 feet.

Even some stalwart technologists have crossed to the side of safety. Musk himself, despite his use of AI in Tesla, has condemned AI publicly and forcefully, saying it will lead to the destruction of civilization. That might be expected from a sci-fi fan, as Musk admits to being, but from a respected engineering publication?

In IEEE Spectrum, former fighter pilot turned AI watchdog Mary L. “Missy” Cummings warned of the dangers of using AI in self-driving vehicles. In “What Self-Driving Cars Tell Us About AI Risks,” she recommended guidelines for AI development, using examples of AVs.

Whether situation/response programming constitutes AI in the way the term is used can be debated, so let us give Cummings a little room.

Self-driving vehicles have a hard stop

Whatever your interpretation of AI, autonomous vehicles serve as examples of machines under the influence of software that can behave badly—badly enough to cause damage or hurt people. The examples range from understandable to inexcusable.

An example of inexcusable is an autonomous vehicle running into anything ahead of it. That should never happen. No matter if the system misidentifies a threat or obstruction, or fails to identify it altogether and, therefore, cannot predict its behavior, if a mass is detected ahead and the vehicle’s present speed would cause a collision, it must slam on the brakes.
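The hard-stop rule needs no object classification, only a distance and a speed. The sketch below assumes a stationary obstacle, constant deceleration, and illustrative values for braking capability, system latency, and safety margin; none of these numbers come from any manufacturer.

```python
def stopping_distance_m(speed_mps, max_decel_mps2=7.0, latency_s=0.2):
    """Distance covered during system latency plus braking distance v^2 / (2a)."""
    return speed_mps * latency_s + speed_mps ** 2 / (2 * max_decel_mps2)


def must_brake(gap_m, speed_mps, margin_m=2.0):
    """True when the gap to a detected mass is no longer comfortably stoppable.

    Deliberately ignores what the object is: bus, bike, or unknown.
    """
    return gap_m <= stopping_distance_m(speed_mps) + margin_m


# At 20 m/s (about 45 mph) with a 30 m gap, the check fires.
print(must_brake(gap_m=30.0, speed_mps=20.0))
```

Because the check depends only on the gap and the speed, misidentifying an articulated bus as a shorter one would not change the outcome, provided the sensed gap to the nearest surface is accurate.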

No brakes were slammed on when one AV ran into the back of an articulated bus because the system had identified it as a “normal” — that is, shorter — bus.

Phantom braking, however, is totally understandable—and a perfect example of how AI not only fails to protect us but also actually throws the occupants of AVs into harm’s way, argued Cummings.

“One failure mode not previously anticipated is phantom braking,” she wrote. “For no obvious reason, a self-driving car will suddenly brake hard, perhaps causing a rear-end collision with the vehicle just behind it and other vehicles further back. Phantom braking has been seen in the self-driving cars of many different manufacturers and in ADAS [advanced driver-assistance systems]-equipped cars as well.”

To back up her claim, Cummings cited an NHTSA report that said rear-end collisions happen exactly twice as often with autonomous vehicles (56%) as with all vehicles (28%).

“The cause of such events is still a mystery,” she said. “Experts initially attributed it to human drivers following the self-driving car too closely (often accompanying their assessments by citing the misleading 94% statistic about driver error).”

“However, an increasing number of these crashes have been reported to NHTSA,” noted Cummings. “In May 2022, for instance, the NHTSA sent a letter to Tesla noting that the agency had received 758 complaints about phantom braking in Model 3 and Y cars. This past May, the German publication Handelsblatt reported on 1,500 complaints of braking issues with Tesla vehicles, as well as 2,400 complaints of sudden acceleration.”

Mercedes did not pass up Tesla. A fair and honest comparison of these two systems shows a vast gap between the capabilities of each one, and Tesla is the clear leader. The Level 3 achieved by Mercedes is smoke and mirrors with a bunch of marketing BS.