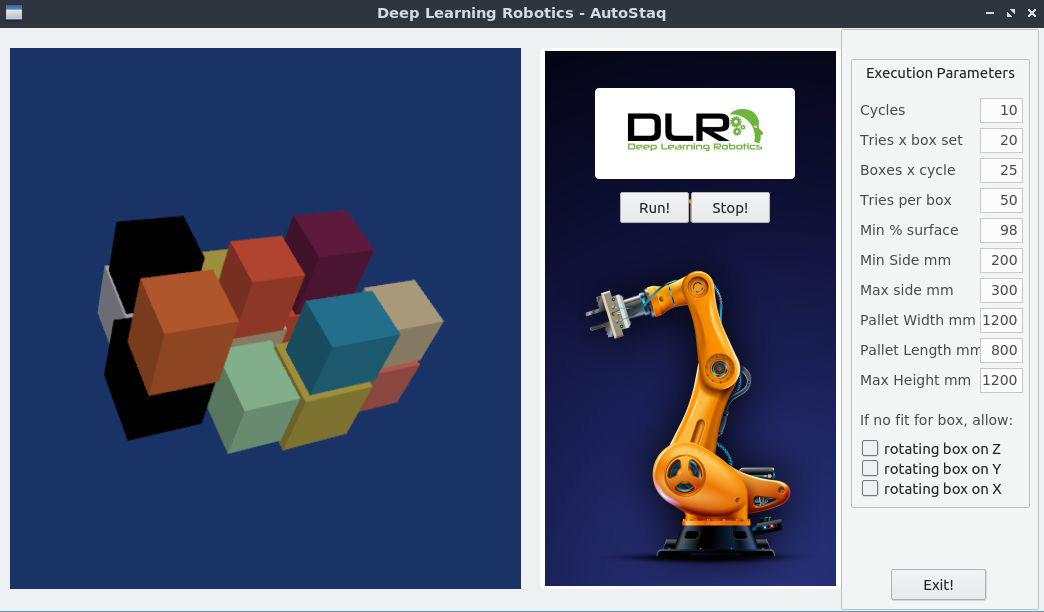

DLRob’s Autostaq allows robots to pack and stack a wide range of objects autonomously. | Source: DLRob

Deep Learning Robotics (DLRob), an AI and robotics technology company, has announced a new feature for its vision-based controller, which was released earlier this year. The feature, called Autostaq, allows robots to autonomously pack and stack a wide range of objects with little setup time.

DLRob’s AI controller already enables robots to learn from human demonstrations. Now, with the latest feature, the controller can train itself using a combination of generated synthetic data and real performance data.

By generating synthetic data and merging it with its own real-world performance data, the controller achieves remarkable adaptability and accuracy in handling diverse objects and placing them in optimal locations with little or no setup time. This means no user demonstration is needed.

“We are thrilled to introduce this new feature of our vision-based robot controller, which marks a major milestone in the field of AI-powered robots and automation,” Deep Learning Robotics’ CEO Carlos Benaim said. “By leveraging our self-training approach, the controller gains an unprecedented level of proficiency, enabling robots to pack and stack virtually anything by finding optimal locations for each of the objects identified in the scene. This breakthrough has the potential to transform various industries, from logistics and warehousing to manufacturing and beyond.”

The robot controller’s software uses machine learning algorithms to allow robots to learn by observing and mimicking human actions. The software is designed with a user-friendly interface so that people with any level of robotics knowledge can teach the robots new tasks.

The software can handle a wide range of robots and applications, including industrial manufacturing, home automation and more. It uses plug-and-play technology, which DLRob hopes will decrease implementation time.

DLRob was founded in 2015 and is based in Ashdod, HaDaron, Israel. It aims to change how robots are programmed and operated in both structured and unstructured environments.

This advancement sounds great. Is the vision system using point cloud modeling to generate the robot offset coordinates for pick positions?