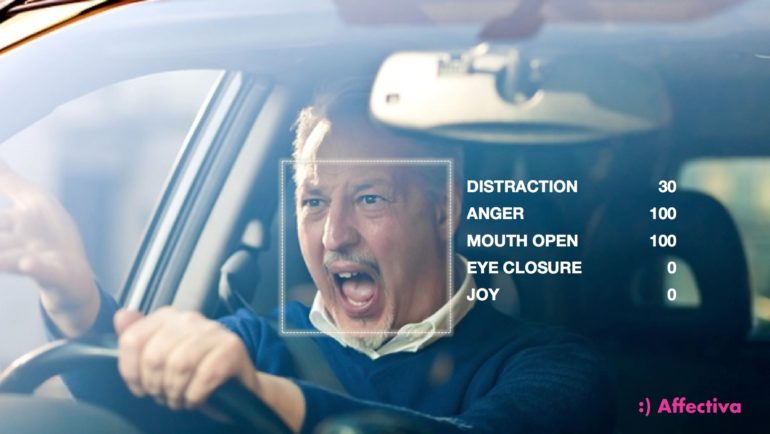

Affectiva Automotive AI hopes to improve driver safety. (Credit: Affectiva)

Artificial intelligence (AI), to date, has helped autonomous vehicles mainly by monitoring the world around them. As we learned from the fatal Uber self-driving car crash, unfortunately, the technology is not perfect.

Affectiva, a Boston-based developer of emotional AI, is now turning to AI to help monitor what’s happening on the inside of cars. The Affectiva Automotive AI monitors in real time the emotional and cognitive state of a vehicle’s occupants.

To do so, the Affectiva Automotive AI uses a combination of RGB cameras and near-IR cameras to track facial expressions and head movements. The system can also monitor one’s voice. It measures facial expressions and emotions such as joy, anger and surprise, as well as vocal expressions of anger, arousal and laughter. The Affectiva Automotive AI also indicates when a driver is drowsy by tracking yawns, eye closure and blink rates.
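
Affectiva has not published how it combines these signals, so the following is only a rough Python sketch of how yawns, eye closure and blink rate could be turned into a drowsiness flag. The `Frame` type, the function names and the thresholds are all hypothetical and do not come from Affectiva's software; the sketch assumes some upstream face tracker already labels each camera frame.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass
class Frame:
    """Hypothetical per-frame output of a face tracker (not Affectiva's API)."""
    eyes_closed: bool
    yawning: bool


def count_onsets(flags: Sequence[bool]) -> int:
    """Count False-to-True transitions, i.e. how many separate events began."""
    onsets = 0
    previous = False
    for current in flags:
        if current and not previous:
            onsets += 1
        previous = current
    return onsets


def drowsiness_indicators(frames: Sequence[Frame], fps: float) -> Dict[str, float]:
    """Summarize eye closure, blink rate and yawn rate over a window of frames."""
    if not frames or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(frames) / fps / 60.0
    closed = [f.eyes_closed for f in frames]
    yawns = [f.yawning for f in frames]
    return {
        "eye_closure_fraction": sum(closed) / len(frames),
        "blinks_per_minute": count_onsets(closed) / minutes,
        "yawns_per_minute": count_onsets(yawns) / minutes,
    }


def looks_drowsy(
    indicators: Dict[str, float],
    max_closure: float = 0.15,          # illustrative threshold, an assumption
    max_yawns_per_minute: float = 2.0,  # illustrative threshold, an assumption
) -> bool:
    """Flag drowsiness if the eyes are closed too often or yawns are too frequent."""
    return (
        indicators["eye_closure_fraction"] > max_closure
        or indicators["yawns_per_minute"] > max_yawns_per_minute
    )
```

A production system would presumably rely on learned models and combine far more signals, including voice; the fixed thresholds here only illustrate the idea of mapping tracked facial events to a driver state.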

The Affectiva Automotive AI compiles all this data and compares it to AI models the company built that indicate how a driver or passenger feels. According to Affectiva, this allows OEMs and Tier 1 suppliers to build advanced driver monitoring systems (DMS).

For semi-autonomous vehicles, the Affectiva Automotive AI will focus on monitoring drivers to increase safety and facilitate the handoff of controls. More than 1,000 injuries and nine fatalities are caused every day by distracted driving in the U.S. And up to 6,000 fatal crashes each year may be caused by drowsy drivers.

If and when fully autonomous vehicles become a reality, Affectiva could shift its focus to personalizing the in-cabin experience.

“We have built industry-leading Emotion AI, using our database of more than 6 million faces analyzed in 87 countries,” said Dr. Rana el Kaliouby, CEO and co-founder, Affectiva. “We are now training on large amounts of naturalistic driver and passenger data that Affectiva has collected, to ensure our models perform accurately in real-world automotive environments. With a deep understanding of the emotional and cognitive states of people in a vehicle, our technology will not only help save lives and improve the overall transportation experience, but accelerate the commercial use of semi-autonomous and autonomous vehicles.”

Affectiva Automotive AI Partners

Affectiva already has partnerships with Autoliv, a Swedish supplier of automotive safety products, and Renovo, a California-based self-driving taxi startup. Affectiva said it has partnerships with other unnamed companies, too.

“Our Learning Intelligent Vehicle (LIV) was developed to shape consumer acceptance of autonomous vehicles by building two-way trust and confidence between human and machine,” said Ola Boström, Vice President of Research, Autoliv, Inc. “Supported by Affectiva’s AI, LIV is able to sense driver and passenger moods, and interact with human occupants accordingly. As the adoption and development of autonomous vehicles continues, the need for humans to trust that they’re safe at the hands of their vehicle will be critical. AI systems like Affectiva’s that allow vehicles to really understand occupants, will have a huge role to play, not only in driver safety but in the future of autonomy.”

Affectiva’s emotional AI is built on computer vision, speech science and deep learning. Affectiva launched a voice analysis tool in 2017 for AI assistants and social robots. There are other companies building emotional AI, of course, including EMOSpeech, Vokaturi, and Eyeris. Affectiva hopes, however, that its entry into the automotive industry will be a differentiator.

“Imagine how much better your robo-taxi experience would be if the vehicle taking you from Point A to Point B understood your moods and emotions,” said Christopher Heiser, co-founder and CEO, Renovo. “Affectiva Automotive AI is integrated into AWare, our OS for automated mobility, and running on our fleet in California today. It is a powerful addition to the AWare ecosystem by providing a feedback loop between a highly automated vehicle and its occupants. Companies building automated driving systems on AWare can use this to tune and potentially provide real-time customization of their algorithms. We believe this real-time passenger feedback capability, combined with aggregate analytics on the occupants’ emotional and cognitive states, will be fundamental to technology developers and operators of automated mobility services.”
