As artificial intelligence achieves greater importance as a competitive advantage and efficiency driver, the race to usable data is heating up. Yet according to Dimensional Research, 8 out of 10 AI projects fail, and 96% of those failures are due to the lack of data quality.

Data quality is clearly paramount for a successful AI project, but how difficult is it to achieve?

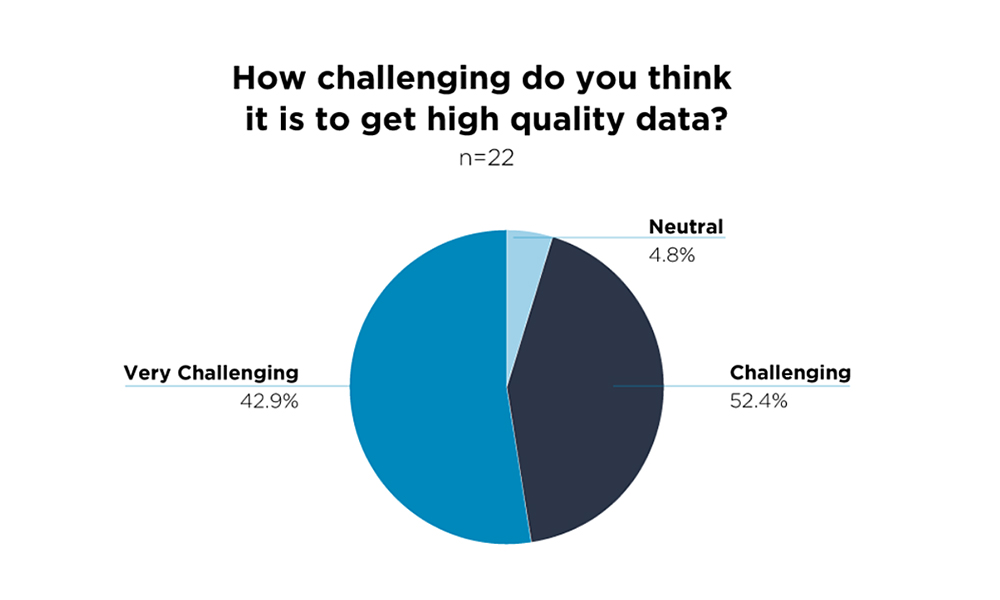

Over 95% of those we surveyed during a recent webinar said they believed it was challenging or very challenging to get high-quality data.

If it’s truly that difficult to get good data, what’s the most effective path to the highest quality possible?

Weighing Your Options

If you’re conducting data labeling work in-house and (more often than not) having it done by the same data science team that creates the AI algorithms, you can certainly control quality metrics directly. However, this approach has its own drawbacks. You can’t move quickly with an internal team that’s spending more time labeling data than developing and sharpening your AI algorithms and forward-thinking innovations.

On the flip side, an outsourced workforce can help you move faster, but at what cost? Can you get the same level of quality with an external workforce as you can with your in-house experts? And among the various external options, is there a formula for establishing the right team to execute the work? How do you even begin?

Predicting Quality

In truth, you can achieve the same level of quality as an in-house team with the right data labeling partner. No matter what approach you take to getting your data labeled, in-house or outsourced, the formula for quality has to rely on a strong combination of people, process, and tools.

People

A good AI project always begins with having the right people in place. For the best in data work, that means pulling together a team of individuals and leaders who have the right mix of experience, training, competency, and character to advance your most innovative business goals.

To achieve this balance, it’s important to find a partner whose approach accounts for the human impact on quality. The best way for any provider to do that is to combine a rigorous vetting and selection process with an in-depth, customized training program.

Vetting + Selection

As you begin speaking with vendors, ask them about their vetting process. Ideally, they offer a thorough process that incorporates skill and personality assessments, character interviews, and client-specific tasks that ensure a perfectly suited team is aligned and assigned based on your goals and tasks. These individuals should become your dedicated workforce, so great care must be taken to establish the perfect team.

If you’re new to working with an outsourcer, it’s important that you get clear on what you need and what will work well for your organization and project. A good outsourcing partner will ensure the vetting process is designed to easily identify those needs in your team.

Training

Once this premium team is assembled, bespoke training becomes vital to pulling together all the unique bits that make up your task. Training for complexity, nuance, or subjectivity is a critical factor in final data quality.

Your outsourcing partner should be open to you, as the client, taking an active role in the preparation of training. That means providing the context, customization, and guidance the team will require to execute every task down to the smallest detail. This extra effort can mean the difference between success and failure. Ask a provider how you can participate in that training, and make sure they are open to your involvement.

Process

Good process ensures a better outcome. It can promise consistency and empower the workforce. But a great process also builds for agility and factors in scalability.

Working with a partner that has the flexibility to incorporate your existing processes, build new ones, or modify as you grow is mandatory for the best in data quality.

So how should a good outsourcer go about achieving the optimal process? They should start by having the right levers in place to manage and improve quality throughout the entire customer experience.

Those levers include a comprehensive onboarding program that educates, informs, and motivates the team, a productivity focus that incorporates feedback and performance review, and an agile foundation that accommodates use case changes, project growth, and evolution.

Tools

Proper tools make any job easier. They can do this by increasing productivity, streamlining processes, improving efficiency, and reducing overall costs. But not every project will use the same tools.

Having the flexibility to use custom or commercial products means taking your needs into account first. A good partner will be tool agnostic and experienced with the best and most comprehensive set of tools to do the job efficiently and effectively.

A strong partner can also recommend tools that are best-in-class for annotating robust and complex tasks, that support built-in communication and collaboration, and that have solid features for reporting timely and accurate metrics on your most vital data requirements.

The race to usable data doesn’t have to feel like an ultramarathon. If you want the ability to scale and achieve greater throughput, seek a strong partner that puts quality at the forefront of every project. But make certain they have consistently approached quality through the lens of people, process, and tools that support your most innovative AI projects.

Sponsored content by CloudFactory