Toyota, Samsung, and iRobot were among the investors in Intuition Robotics, which has developed the ElliQ social robot and Q cognitive engine.

Intuition Robotics Ltd. today announced that it has raised $36 million in Series B funding. The company has been developing “digital companion” technologies using artificial intelligence to engage with older adults.

Ramat Gan, Israel-based Intuition Robotics was founded in 2016 and has offices in San Francisco and Greece. Its ElliQ robot is intended to help seniors stay mentally and physically active, as well as socially connected. The company’s “Q” cognitive AI product, which powers ElliQ, is available to third parties, starting with automakers.

“This investment will fuel the evolution of agents from utilitarian digital assistants to full-fledged digital companions that are at our side, anticipating our needs and seamlessly, proactively improving our lives by helping us achieve certain outcomes,” stated Dor Skuler, co-founder and CEO of Intuition Robotics. “Our cognitive AI technology has the potential to transform the way people and machines interact through empathetic relationships built on trust, exhibiting highly personalized and delightful experiences that amplify our customers’ brands.”

Notable investors

“Over the past year, we’ve been able to deliver on our mission of creating a machine capable of multimodal interactions with humans,” Skuler told Robotics Business Review. “We’re moving beyond utility and daily interaction to measure the quality of the relationship with users.”

SPARX Group and OurCrowd led the funding round, with participation from Toyota AI Ventures, iRobot Corp., Samsung Next, Sompo Holdings, Union Tech Ventures, Happiness Capital, Capital Point, and Bloomberg Beta. Intuition has raised a total of about $58 million to date.

“Intuition Robotics is creating disruptive technology that will inspire companies to re-imagine how machines might amplify the human experience,” said Jim Adler, founding managing partner at Toyota AI Ventures. “I’m proud to join the company’s board and partner with management on this exciting journey.”

“In the future, robots will provide a more proactive, empathetic, and personalized user experience,” said Colin Angle, CEO of iRobot. “Intuition Robotics is redefining what’s possible through cutting-edge technology and deep insights regarding how people and machines interact.”

Intuition Robotics said it will use the new funding to further develop its AI capabilities and tools. The company also plans to expand the availability of its Q digital companion beyond aging and automotive.

“In the past year, Intuition Robotics has grown from 35 people to 85 people, and most of that talent is in engineering and products,” Skuler said. The company is hiring.

ElliQ is now available. Source: Intuition Robotics

Intuition develops its digital companion

Intuition Robotics defines its digital companion as “the natural evolution of the digital assistant, replacing utilitarian voice command with a bi-directional relationship.” Unlike smart speakers, which respond only to direct commands, ElliQ is capable of understanding context and pursuing goals non-deterministically through conversation, explained Skuler.

“We’ve introduced memory cells into the digital companion, leading to a highly personalized experience,” he added. “For instance, ElliQ might say, ‘Hello,’ rather than ‘Good morning,’ and ask how you slept. That night, she might say, ‘I hope you sleep better,’ without prompting.”

Over the past year, ElliQ has spent over 10,000 days in older adults’ homes in the U.S., the largest experiment with a social robot to date, said Skuler. Most of the users were 80 to 90 years old, and they spent an average of at least 90 consecutive days with the digital companion.

“We saw a lot of interesting data,” Skuler said. “After three months, people were still using the product — a full order of magnitude over voice systems. They had an average of eight interactions per day — with at least two initiated by ElliQ — and six minutes of pure interaction, not counting content such as playing music.”

Intuition Robotics said its cognitive agent uniquely combines hierarchical reinforcement learning with state-driven policies defined by human experts. This allows it to be proactive as it studies its user and “drifts” towards a more personalized experience over time.

“Not only would people tell ElliQ that they were leaving, but they’d also talk about their holiday plans or what’s going on in school, creating true multi-turn conversations,” he recalled. “These data points are giving us a lot of confidence, as it learns about the users’ routines.”

“If the goal is to motivate people to change their behavior such as exercise more, you have to do it in a highly personalized way with lots of content,” said Skuler. “The digital companion can be funny, share facts, encourage mindfulness, check the weather — or not — doing things differently each time.”

“It can say, ‘Hey, you should go for a run,’ but only if it’s the first interaction of the day, and it’s before 10:00 a.m.,” he said. “The agent looks at states, goals, and the probability of success and makes decisions in real time about which plan best fits the user at a given time.”
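As a rough illustration of the decision process Skuler describes, the sketch below filters candidate plans by state-based policy rules and then picks the one with the highest estimated chance of success. It is not Intuition Robotics' implementation. The names State, Plan, choose_plan, and PLANS, along with the probability values, are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass
from datetime import time
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class State:
    """Hypothetical snapshot of what the agent knows right now."""
    now: time
    interactions_today: int


@dataclass(frozen=True)
class Plan:
    """Hypothetical plan: a policy rule plus an estimated success probability."""
    name: str
    allowed: Callable[[State], bool]  # expert-defined policy constraint
    success_probability: float        # assumed to come from a learned model


def choose_plan(state: State, plans: Sequence[Plan]) -> Optional[Plan]:
    """Return the allowed plan with the highest success probability, if any."""
    candidates = [p for p in plans if p.allowed(state)]
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.success_probability)


# Illustrative plans; the probabilities are made up for the example.
PLANS = [
    # Suggest a run only on the first interaction of the day, before 10:00 a.m.
    Plan(
        "suggest_run",
        lambda s: s.interactions_today == 0 and s.now < time(10, 0),
        0.6,
    ),
    # Sharing a fact is always permitted but assumed less likely to succeed.
    Plan("share_fact", lambda s: True, 0.4),
]

if __name__ == "__main__":
    morning = State(now=time(8, 30), interactions_today=0)
    later = State(now=time(11, 0), interactions_today=0)
    print(choose_plan(morning, PLANS).name)
    print(choose_plan(later, PLANS).name)
```

This sketch always takes the top-scoring plan. An agent meant to do "things differently each time" would more likely sample among the allowed plans in proportion to their scores.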

Tools to create behaviors

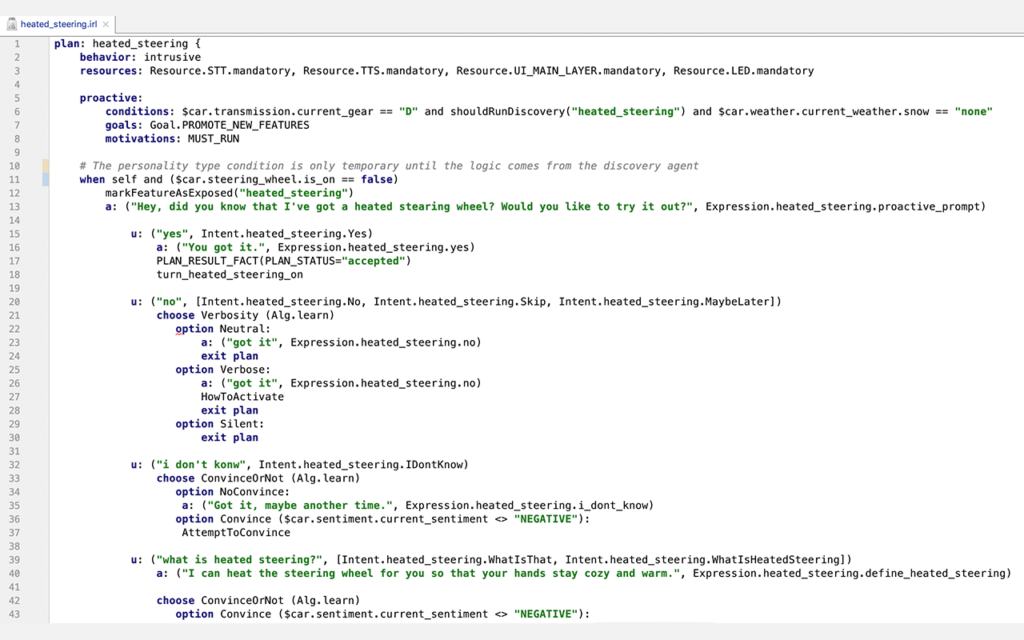

“Q is a runtime engine that can do sensor fusion, reasoning, and actuation of ElliQ’s or a car’s user interfaces,” said Skuler. Intuition Robotics is offering tools to third parties such as major automakers to enable nontechnical people to describe policies without having to program for every eventuality, he said.

“This enables them to imagine what’s possible and create a huge amount of agent behaviors,” noted Skuler. “Policies put constraints on the agent that change the motivation, but the behaviors are not linear.”

“We’re working with B2B customers so they can eventually do it themselves,” he said. “In the beginning, we’re working with customers on integration, such as recognizing the state of the sensors, the engine, or whether a user has entered the car.”

“We also provide a state dashboard, which provides a peek inside the brain of the agent,” Skuler said. “It shows the completion status of goals, memory cells, routines created, activities done already today. You can literally see that the digital companion was debating between three activities and the probabilities given. This allows you to understand the agent’s behavior and tune the context of facts.”

The Q studio tool for understanding the agent’s decision making. Source: Intuition Robotics

“When working with third parties across cultures, the interactions done across modalities need to be choreographed,” he added. “We play around with ElliQ’s expressions and lights, and we need to be able to do A/B testing with users, deploy over the air, and apply analytics. We’ve created a suite of tools and have teams working on content in English, Chinese, and Japanese.”

Digital companion to disrupt the automotive experience

While autonomous vehicles are getting a lot of investment and media attention, the future in-car experience is becoming another differentiator for technology companies and automakers.

“We’ve been working with automotive customers such as Toyota Research Institute for one and a half years now,” said Skuler. “The amount of changes in states is much higher in cars, with internal and external sensors knowing the speed, weather, charging state, infotainment, and status of multiple passengers.”

“Autonomy is the ultimate goal, but even now, you sit through a half-hour lecture at the dealership after buying a car and then forget everything. Users don’t remember how to use things like lane assist and adaptive cruise control,” he observed. “What if the car could explain it to you in a way based on your personality? You might be more of a techie or safety-driven, or you might prefer a more humorous approach or just a reminder.”

“Another use case is detecting drowsiness,” Skuler said. “Many cars can now detect it, but once you control the human-machine interaction on the multimodality side, a car could adjust the temperature or recommend stopping for coffee. This can also help the OEMs differentiate and amplify their brands, so a sports car would have a different definition than a family car or a pickup truck.”