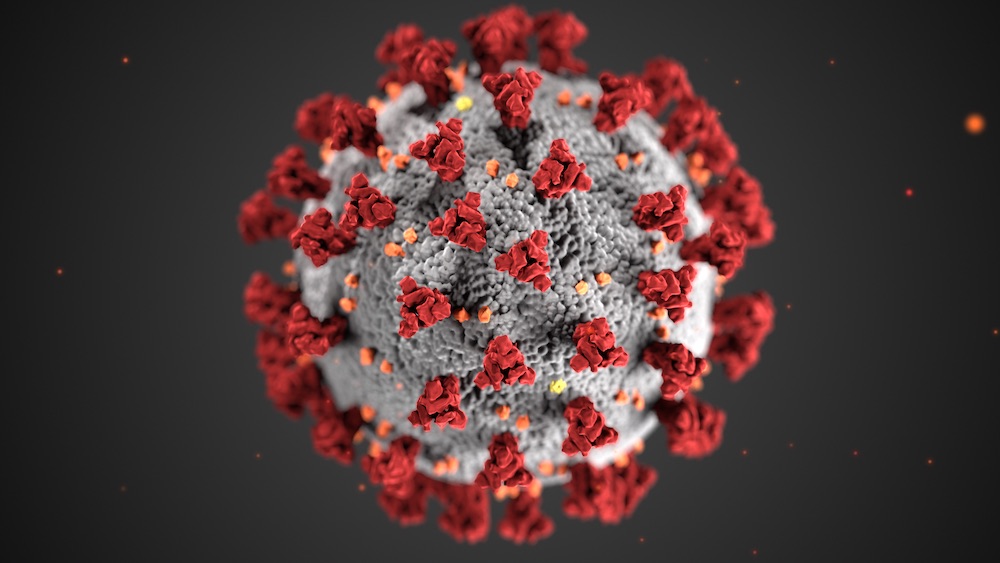

Millions of Americans have started to work from home amidst the current COVID-19 pandemic. Retailers have struggled with supply while nervous consumers are hoarding everything from toilet paper to hand soap. Across the globe, Chinese e-commerce giant JD began testing a level-4 autonomous delivery robot in Wuhan and running its automated warehouses 24 hours a day to cope with a surge in demand.

Suddenly, autonomous machines need to be more than proofs of concept. They can no longer depend on on-site engineering support for edge cases. They must be robust enough to work independently across a variety of real-life situations.

In some ways, the COVID-19 pandemic is accelerating an automated future that was already on its way. It has also exposed problems that have long existed in the artificial intelligence (AI) venture scene: buzzwords and hype cloud people’s judgment, making it difficult to see real progress.

The industry needs to take on much-needed reforms towards real-world autonomous systems in the following three areas.

1. Rethink metrics

As more autonomous machines are deployed in the real world, conventional metrics such as speed, cycle time, or success rate can no longer represent the full picture. We need to measure how reliable the system is under uncertainty, using robustness metrics such as the average number of human interventions.

We need more tools and industry standards to evaluate overall system performance across a wide range of scenarios because real life, unlike a controlled environment, is unpredictable.

If a delivery robot can reach a maximum speed of 4 mph but cannot complete a single delivery without human support, it is not creating much value for its users.
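To make the point about metrics concrete, here is a minimal sketch of how robustness numbers could sit alongside a conventional success rate. The `DeliveryRun` record, its fields, and the sample values are hypothetical and invented for illustration; they are not drawn from any real robot's logs.

```python
from dataclasses import dataclass


@dataclass
class DeliveryRun:
    """One attempted delivery (hypothetical log record)."""
    completed: bool
    human_interventions: int
    max_speed_mph: float


def robustness_summary(runs):
    """Summarize a batch of runs with conventional and robustness metrics."""
    total = len(runs)
    if total == 0:
        raise ValueError("no runs to summarize")
    interventions = sum(r.human_interventions for r in runs)
    # A run only counts as unassisted if it finished with zero interventions.
    unassisted = sum(
        1 for r in runs if r.completed and r.human_interventions == 0
    )
    return {
        "success_rate": sum(1 for r in runs if r.completed) / total,
        "interventions_per_run": interventions / total,
        "unassisted_completion_rate": unassisted / total,
    }


# Made-up sample data: a fast robot that still needs help most of the time.
runs = [
    DeliveryRun(completed=True, human_interventions=2, max_speed_mph=4.0),
    DeliveryRun(completed=True, human_interventions=1, max_speed_mph=4.0),
    DeliveryRun(completed=True, human_interventions=0, max_speed_mph=3.5),
    DeliveryRun(completed=False, human_interventions=3, max_speed_mph=4.0),
]
summary = robustness_summary(runs)
print(summary)
```

In this invented sample, the success rate looks respectable while the unassisted completion rate is low, which is the gap the conventional metric hides.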

DevOps emerged over the past decade to shorten the development cycle and continuously deliver high-quality software. In comparison to software engineering, AI or machine learning (ML) is much less mature. By one often-cited estimate, 87% of ML projects never go into production. Recently, however, we’ve started to see MLOps or AIOps appearing more and more.

This marks a crucial transition from AI/ML research to actual products that are used and tested every day. It requires a significant change in mindset to focus on quality assurance instead of state-of-the-art ML models. I’m not saying we can’t have both at the same time, but to date, we’ve seen much more emphasis on the latter.

Starsky Robotics, a once promising self-driving truck company founded in 2015, shut down in March. Founder Stefan Seltz-Axmacher said its biggest challenge was that “supervised machine learning doesn’t live up to the hype.” | Credit: Starsky Robotics

2. Redesign error handling and communication

The recent shutdown of Starsky Robotics reminds us that we are still years away from fully autonomous solutions. That doesn’t mean robotics cannot bring immediate value to humans. Even if humans need to handle edge cases 15% of the time, that still means companies can reduce significant labor and integration costs.

However, AI companies currently tend to spend far more resources on building autonomous systems and far less time thinking about error handling and seamless hand-off between machines and humans.

We need a better way of handling and communicating errors, especially for ML products because ML is more probabilistic and less transparent. Therefore, showing the confidence level of model predictions or framing your predictions as suggestions instead of decisions are ways to gain trust with users.
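As a rough sketch of what framing predictions as suggestions could look like, the snippet below routes a model output by its confidence. The `Prediction` type, the `present` function, and both threshold values are assumptions made for illustration; real thresholds would have to be calibrated for each product.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """A model output with its confidence (hypothetical structure)."""
    label: str
    confidence: float  # expected to lie in [0, 1]


def present(pred, act_threshold=0.95, suggest_threshold=0.60):
    """Decide how to surface a prediction to the user.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= pred.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    shown = f"{pred.label} (confidence {pred.confidence:.0%})"
    if pred.confidence >= act_threshold:
        return f"ACT: {shown}"          # confident enough to proceed
    if pred.confidence >= suggest_threshold:
        return f"SUGGEST: {shown}"      # offer it, let the human confirm
    return f"ASK HUMAN: {shown}"        # too uncertain, hand off


for p in [
    Prediction("pick item from bin A", 0.97),
    Prediction("pick item from bin B", 0.72),
    Prediction("pick item from bin C", 0.30),
]:
    print(present(p))
```

Either way, the user always sees the confidence figure, so a suggestion is never presented as if it were a certainty.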

We need to categorize errors into different levels, design different protocols accordingly, and prioritize minimizing fatal errors that stop the system and require human intervention. If fatal errors occur and the system isn’t working anymore, can we respond quickly and troubleshoot remotely?
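One way to picture error levels with matching protocols is the sketch below. The three severity names, the protocol strings, and the `triage` helper are all hypothetical, chosen only to illustrate the idea; an actual system would define its own levels and responses.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical error levels, ordered from least to most severe."""
    INFO = 1
    RECOVERABLE = 2
    FATAL = 3  # stops the system and requires human intervention


# Illustrative protocol for each level (names are assumptions).
PROTOCOLS = {
    Severity.INFO: "log_only",
    Severity.RECOVERABLE: "retry_then_notify",
    Severity.FATAL: "stop_and_page_remote_operator",
}


def triage(errors):
    """Return (severity, message, protocol) tuples, most severe first."""
    ordered = sorted(errors, key=lambda e: e[0], reverse=True)
    return [(sev, msg, PROTOCOLS[sev]) for sev, msg in ordered]


queue = triage([
    (Severity.INFO, "battery at 40%"),
    (Severity.FATAL, "gripper not responding"),
    (Severity.RECOVERABLE, "grasp failed, object slipped"),
])
for sev, msg, protocol in queue:
    print(sev.name, "|", msg, "|", protocol)
```

Sorting by severity puts fatal errors at the front of the queue, so a remote operator sees them before anything else.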

The most difficult part is to identify the unknown unknowns, errors that systems cannot detect. Therefore, it’s also crucial to have two-way communication and allow users to flag errors or choose to activate the previously agreed fallback plan.

3. Redefine human-machine interaction

The novel coronavirus forces companies to more rapidly adopt automation and shift to the cloud. As fewer people control a larger number of robots, do we have the right tools and technologies to pass all the relevant information to that decision-maker promptly? Are there enough sensors on each robot to provide a full picture?

Today, we rely on tactile input devices such as computers or tablets to control robots. Are these still the best interfaces as the amount of information soars and response times remain short? Should we consider human-machine interfaces that go beyond the tactile, such as voice, VR/AR, or brain-machine interfaces?

We also need to decide who should be in control. As machines get smarter, should humans always make the final call?

For example, who should be controlling an autonomous robotaxi? The car itself? The human safety driver? Someone who monitors a fleet of robotaxis remotely? The passengers? Under what circumstances? Or should it be a co-decision with weighted judgment by both humans and machines? What are the ethical implications? Can the interface support multi-step co-decision making?

Ultimately, how do we design human-centered AI to make sure autonomous machines make our lives better, not worse? How do we automate the right use cases to augment humans? How do we build a hybrid team that delivers better outcomes and allows humans and machines to learn from each other?

There are still a lot of questions that we need to answer. And the current COVID-19 pandemic is pushing us to answer them more quickly so that would-be autonomous systems can deliver on their promise. If the makers of these systems can focus on the three areas I’ve outlined above, they’ll be better positioned to reach key conclusions more quickly. And that will ensure we’re heading in the right direction.

About the Author

Bastiane Huang is a Product Manager at OSARO, a San Francisco-based startup building AI-defined robotics. She has worked for Amazon in its Alexa group and with Harvard Business Review, as well as the university’s Future of Work Initiative. She also writes about ML, robotics, and product management.

The need for autonomous vehicles is greater now than ever. Truck drivers are vulnerable to COVID-19, and without them the whole country would come to a standstill. I hope regulators recognize the necessity of autonomous vehicles and clear the roadblocks.

What we need is to stop overpaying CEOs and executives and to start paying workers a living wage. Make it possible for workers to actually cover healthcare costs and education costs for their children and themselves, own a home, and build a secure retirement with 20 years of work.

“Even if humans need to handle edge cases 15% of the time, that still means companies can reduce significant labor and integration costs.”

This is exactly the model we enable at Cognicept. Robots don’t have to be perfect to be very useful.

Yes, we need more reliability and operability in logistics and delivery robots. But COVID-19 should also put the emphasis on a more systemic way of thinking. Are reducing labor and integration costs really the key words for companies and organizations? What about the view from the users? Let’s try to get some benefit from this exceptional period, which stopped our current systems worldwide at a time when we needed, and still need, to reinvent our relationships to others, to work, and to nature, and to reinvent our systems. Let’s take a leap and profoundly reset our priorities, individually and collectively. Then we shall have a clearer view of how technologies, be it robotics, AI, AR/VR, and so on, can help us achieve our new goals. It is scary to see performance indicators still being the sole measure we think about. I have been advocating for 10 years now that we focus our R&D and technology projects on a sustainable humankind. Maybe COVID-19 is giving us the opportunity to profoundly change, not just alter performance criteria.

The integration of real AI (both narrow and general AI) will evidently become more crucial. It will no longer be enough to pop champagne for the success of narrow AI. Edge cases need to be handled in a more human-like way, and the collaborative model between human and machine will warrant deep attention. Singapore is looking seriously into HMC (Human-Machine Collaboration), and we believe this is a huge future market. Narrow AI beats humans hands down for various reasons, such as computing power and speed, among others. However, the redesign of human-machine interaction will call for a higher degree of quasi-consciousness, sentience, or perception, and a ‘mind’ that accumulates intelligence. The post-COVID-19 period will bring great talent to achieve this higher order; AI in robotics will pave the way forward.