Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the University of Toronto are developing a 3D simulator that could eventually teach robots how to complete household tasks like making coffee or setting the table.

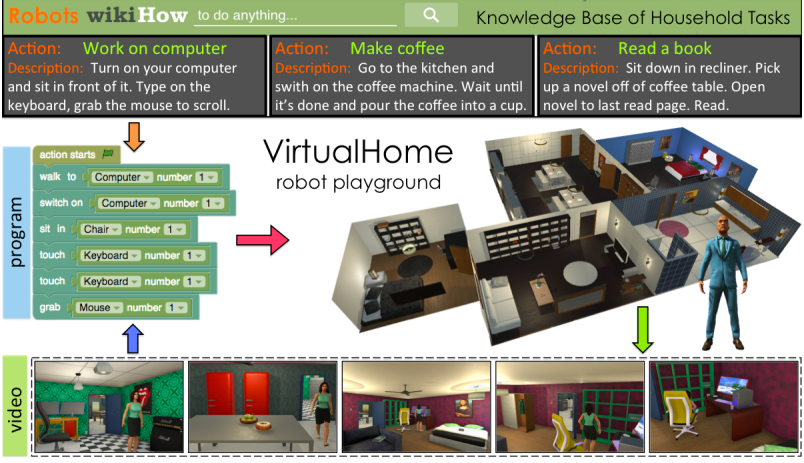

“VirtualHome” is a system that simulates detailed household tasks and has artificial “agents” execute them. Using crowdsourcing, the team tested VirtualHome on more than 3,000 programs of various activities that are further broken down into subtasks for the computer to understand.

[Paper: VirtualHome: Simulating Household Activities via Programs]

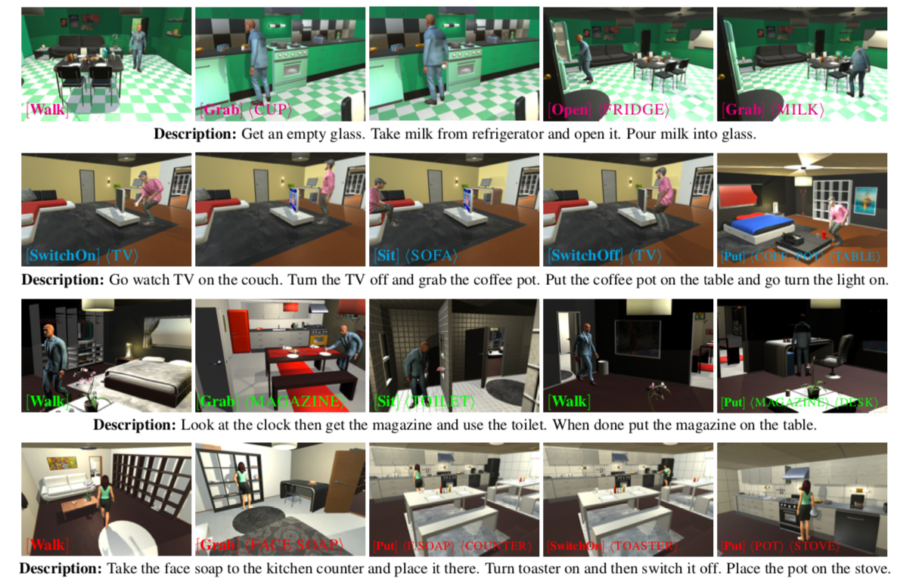

Unlike humans, robots need explicit instructions to complete tasks because they cannot simply infer the missing steps. A simple task like making coffee, for example, would include steps like “open the cabinet” and “grab a cup.” Similarly, a task like “watch TV” might include the steps “walk to the TV,” “switch on the TV,” “walk to the sofa,” and “sit on the sofa.”

VirtualHome creates a database of chores using natural language

To create detailed descriptions for each VirtualHome task, the researchers collected verbal descriptions of household activities and translated them into simple code. The team fed the programs to the VirtualHome 3D simulator to be turned into videos and executed by a virtual agent.
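As a rough illustration of what "translating a description into simple code" could look like, the sketch below encodes the article's "watch TV" example as an ordered list of steps. The `Step` class, the action names, and the `describe` and `execute` helpers are hypothetical and chosen for readability; they are not VirtualHome's actual program format or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One atomic instruction: an action applied to a target object."""
    action: str  # hypothetical action name, e.g. "walk_to"
    target: str  # object the action applies to, e.g. "tv"


# The "watch TV" task from the article, written as an ordered program.
WATCH_TV = [
    Step("walk_to", "tv"),
    Step("switch_on", "tv"),
    Step("walk_to", "sofa"),
    Step("sit_on", "sofa"),
]


def describe(program):
    """Render each step back into a short English instruction."""
    return [f"{s.action.replace('_', ' ')} the {s.target}" for s in program]


def execute(program, known_actions):
    """Stand-in for an agent: log each step, rejecting unknown actions."""
    log = []
    for index, step in enumerate(program, start=1):
        if step.action not in known_actions:
            raise ValueError(f"step {index}: unknown action {step.action!r}")
        log.append((index, step.action, step.target))
    return log


if __name__ == "__main__":
    for line in describe(WATCH_TV):
        print(line)
    print(execute(WATCH_TV, {"walk_to", "switch_on", "sit_on"}))
```

Writing the task this way makes every step explicit and ordered, so an agent that does not know an action fails at a specific step instead of guessing.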

“Describing actions as computer programs has the advantage of providing clear and unambiguous descriptions of all the steps needed to complete a task,” said PhD student Xavier Puig, who was lead author on the paper. “These programs can instruct a robot or a virtual character, and can also be used as a representation for complex tasks with simpler actions.”

The researchers said this opens up the possibility of one day teaching robots to do such tasks. For now, however, the project has essentially created a large database of household tasks described using natural language. Amazon, which is developing a consumer robot for homes, could potentially use data like this to train its models to do more complex tasks.

Training robots by watching YouTube videos

Of the 3,000 programs, the team’s AI agent can already execute 1,000 separate sets of actions in eight different scenes, which include a living room, kitchen, dining room, bedroom, and home office.

“This line of work could facilitate true robotic personal assistants in the future,” said Qiao Wang, a research assistant in arts, media, and engineering at Arizona State University who was not involved in the research. “Instead of each task programmed by the manufacturer, the robot can learn tasks just by listening to or watching the specific person it accompanies. This allows the robot to do tasks in a personalized way, or even some day invoke an emotional connection as a result of this personalized learning process.”

In the future, the team hopes to train the robots using actual videos instead of Sims-style simulation videos, which would enable a robot to learn simply by watching a YouTube video. The team is also working on implementing a reward-learning system in which the agent gets positive feedback when it does tasks correctly.

“You can imagine a setting where robots are assisting with chores at home and can eventually anticipate personalized wants and needs, or impending action,” said Puig. “This could be especially helpful as an assistive technology for the elderly, or those who may have limited mobility.”
