Sense Photonics was one of the few solid-state lidar vendors at MODEX 2020. Source: Sense Photonics

ATLANTA — Among the trends spotted around the MODEX supply chain show here last week was interest in widening automation applications beyond the interiors of warehouses and factories. But before robots can load and unload trucks or safely work alongside people in more dynamic environments, they need to see more clearly. Solid-state lidar is one way to do so.

Sense Photonics Inc. was among the exhibitors at MODEX 2020 and offered observations about solid-state lidar and logistics robotics.

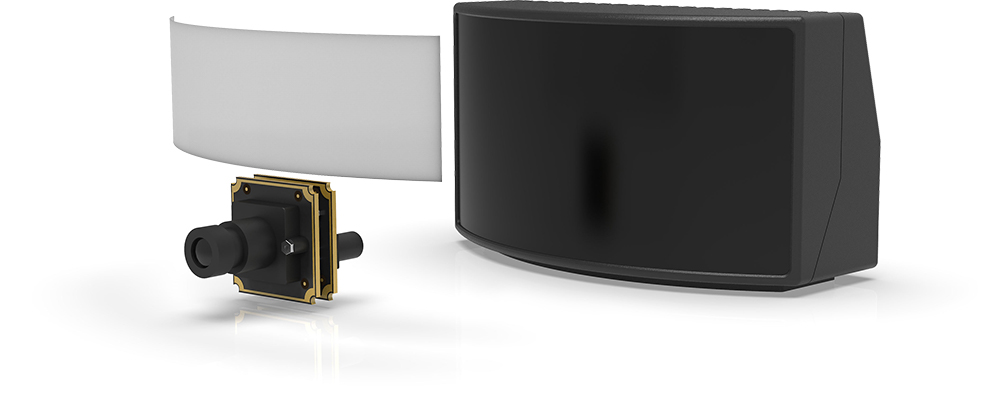

Durham, N.C.-based Sense Photonics came out of stealth and released its Solid-State Flash LiDAR sensor last fall. The 3D time-of-flight camera is intended to provide long-range sensing, can distinguish intensity, and works in sunlight.

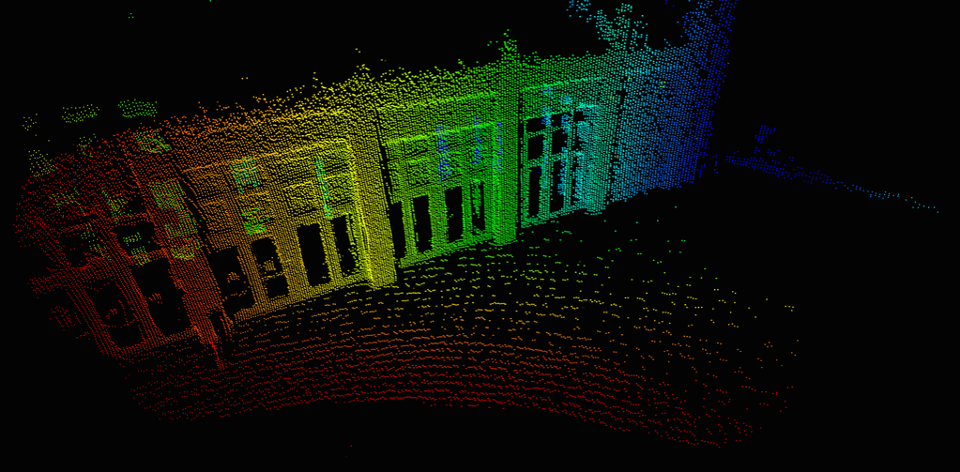

The ability to detect intensity is important when perceiving things like forks on a forklift truck, which are often black, reflective, and close to the plane of a floor. Forklifts are involved in 65,000 accidents and 85 fatalities per year, according to the Occupational Safety and Health Administration.

Developing better sensors for mobile robots

The founders of Sense Photonics previously worked together at a solar power company, where they learned the core processes for manufacturing semiconductors. That prior experience in photonics and silicon is applicable to designing laser emitters on a curved substrate for a wider field of view.

“I’ve been at robotics companies for 10 years — I was at Omron Adept,” recalled Erin Bishop, director of business development at Sense Photonics. “I’ve used [Microsoft’s] Kinect cameras for unloading trucks, but all these robots need better 3D cameras.”

“Intel’s RealSense is good for prototypes and indoors, but there are a number of different attributes that matter a lot,” she told The Robot Report. “Robots are especially useful for dull, dirty, and dangerous jobs outdoors, and you need mounted or mobile cameras that can withstand exposure to the elements.”

Bishop noted the challenge of seeing multiple rows of pallets or forklifts at 50m (164 ft.) indoors. “Forklift forks are usually caught in the noise from the floor,” she said. “In addition, for pallet detection, the conversation is starting about 3D camera data output and using training sets that are labeled RGB-D.”

“One benefit of a custom emitter, rather than MEMS [microelectromechanical systems] or purchasing lasers from a third party, is that we can control the laser output as necessary. For example, interference mitigation is very important for installs of more than one 3D camera or lidar, and our emitter has interference mitigation built in,” explained Bishop, who spoke at RoboBusiness 2019 and CES 2020. “Also, we use a 940nm wavelength, which works in bright sunlight.”

“With high dynamic range or HDR, we can accurately range targets with only 10% reflectivity in 100% sunlight,” she said. “We’re emitting a powerful amount of photons, cutting through ambient light, and we can get a return from a dull black object like a tire or a mixed-case pallet using HDR.”

“By separating the laser from the receiver, it’s easier to integrate and make our system disappear into last-mile delivery robots and consumer automobile designs,” she claimed. “Sense One and Osprey offer indirect time-of-flight [ITOF] sensing.”
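
For readers unfamiliar with the term, indirect time-of-flight sensors generally infer distance from the phase shift between emitted and returned modulated light, rather than timing a single pulse directly. The sketch below shows that textbook relationship only. The modulation frequency is a hypothetical value chosen for illustration, not a Sense Photonics specification, and the function names are made up for this example.

```python
import math

C = 299_792_458.0  # speed of light in metres per second


def itof_distance(phase_rad: float, mod_freq_hz: float) -> float:
    """Distance implied by a measured phase shift of modulated light.

    The light travels out and back, so a full 2*pi phase cycle
    corresponds to half a modulation wavelength of range.
    """
    return C * phase_rad / (4.0 * math.pi * mod_freq_hz)


def unambiguous_range(mod_freq_hz: float) -> float:
    """Largest distance measurable before the phase wraps past 2*pi."""
    return C / (2.0 * mod_freq_hz)


# Hypothetical modulation frequency, picked only so the numbers are easy to read.
MOD_FREQ_HZ = 3.0e6

if __name__ == "__main__":
    print(round(unambiguous_range(MOD_FREQ_HZ), 2))       # 49.97
    print(round(itof_distance(math.pi, MOD_FREQ_HZ), 2))  # 24.98
```

The trade-off this illustrates is general to the technique: a lower modulation frequency extends the unambiguous range, while a higher one typically improves range resolution.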

A modular lidar architecture allows for separation of the emitter from the receiver for use in vehicles. Source: Sense Photonics

From solid-state lidar to ‘cameras’

“The technology is mature, but it’s not 100% versatile,” Bishop observed. “The whole lidar industry is moving from ‘sensors’ to ‘cameras.’ ‘Lidar’ is a bad word, and robotics companies aren’t interested. They prefer ‘long-range 3D camera.’”

A lidar intensity image of a forklift. Source: Sense Photonics

“The detector collects 100,000 pixels per frame on a CMOS ITOF with intensity data. RGB camera fusion is really easy, and field-of-view overlay is promising,” she said. “The entire computer-vision industry can attach depth values at longer ranges to train machine learning.”

“When a human driver sees a dog, that may be efficient, but machines need to know what’s in the scene,” said Bishop. “When you generate an RGB image and have associated depth values across the field of view, computer-vision models become more robust. Our solid-state lidar provides more reliable uniform distance values at long range when synched with other sensors.”
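
One common way to associate depth values with an RGB image is to project each 3D point into the camera with a pinhole model and record its range at the pixel it lands on. The following is a minimal sketch of that idea, not Sense Photonics' software. It assumes the lidar points have already been transformed into the RGB camera's coordinate frame, and the function name and the intrinsic parameters (`fx`, `fy`, `cx`, `cy`) are hypothetical.

```python
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in the RGB camera frame, metres
Pixel = Tuple[int, int]             # (column u, row v)


def attach_depth(
    points: Iterable[Point],
    fx: float, fy: float,   # focal lengths in pixels (assumed known from calibration)
    cx: float, cy: float,   # principal point in pixels (assumed known from calibration)
    width: int, height: int,
) -> Dict[Pixel, float]:
    """Map each RGB pixel hit by a 3D point to the depth of the nearest such point."""
    depth: Dict[Pixel, float] = {}
    for x, y, z in points:
        if z <= 0.0:
            continue  # point is behind the camera and cannot appear in the image
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            # Keep the closest return when several points fall on one pixel.
            if (u, v) not in depth or z < depth[(u, v)]:
                depth[(u, v)] = z
    return depth


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 10.0), (1.0, 0.0, 10.0), (0.0, 0.0, 5.0), (0.0, 0.0, -1.0)]
    print(attach_depth(cloud, 500.0, 500.0, 320.0, 240.0, 640, 480))
```

The resulting pixel-to-depth map is the kind of per-pixel annotation that RGB-D training sets rely on. A production pipeline would also need lens distortion correction and time alignment between the two sensors.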

Lidar plus RGB data for depth perception. Source: Sense Photonics

“The software stack then gets more reliable for annotation, and if a distance-imaging chip was in every serious camera for security, monitoring a street, or in a car or robot, it could help annotation for machine learning,” she added. Better data would also help piece-picking and mobile manipulation robots.

“Sense Photonics’ software uses peer-to-peer time sync for sensor fusion and robotic motion planning,” Bishop said. “With rotating lidars, you need to write a lot of code to understand images, as the robot and sensor are moving at the same time. Companies have told us they want low latency and lower power from solid-state systems.”

Solid-state lidar applications

More accurate and rugged solid-state lidar can be useful for warehouse, logistics, and other robotics use cases, especially in truck yards, Bishop said. At MODEX, Sense Photonics demonstrated industrial applications, including security cameras, video annotation, forklift collision avoidance, and supplemental obstacle avoidance.

By combining simultaneous localization and mapping (SLAM) with lidar data, fleet management systems could know about bottlenecks and manage all assets in a warehouse, Bishop said. “They should be warehouse operations systems,” she said. “The big retailers and consultants don’t know the difference between old-school and new-school robotics. Customers don’t know whom to believe when it comes to capabilities.”

“With high-accuracy mode, our 3D cameras can see the cases on a mixed-case pallet 3 meters away with 5-millimeter accuracy,” she said. “Our HDR mode helps image different colors and reflectivity of the boxes on the pallet as well.”

Source: Sense Photonics

“Most mobile robotics people want a wide field of view at 15 to 20 meters,” Bishop said. “We’ll have a 95-by-75-degree [field of view], so you can do 180 degrees with two units. It and our Osprey product for automotive will be available soon.”
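
The two-unit arithmetic can be checked with a few lines of geometry. The sketch below assumes two identical sensors mounted side by side with overlapping horizontal fields of view. The function names are hypothetical, and the 95-degree figure and 15-meter range are taken from the quote above.

```python
import math


def combined_coverage_deg(unit_fov_deg: float, units: int, overlap_deg: float) -> float:
    """Total horizontal coverage of adjacent units that overlap pairwise by overlap_deg."""
    return units * unit_fov_deg - (units - 1) * overlap_deg


def overlap_for_target_deg(unit_fov_deg: float, units: int, target_deg: float) -> float:
    """Overlap between neighbouring units needed to cover exactly target_deg."""
    if units < 2:
        raise ValueError("overlap is only defined for two or more units")
    return (units * unit_fov_deg - target_deg) / (units - 1)


def swath_width_m(range_m: float, fov_deg: float) -> float:
    """Width of the scene covered at a given range by a single unit."""
    return 2.0 * range_m * math.tan(math.radians(fov_deg / 2.0))


if __name__ == "__main__":
    print(overlap_for_target_deg(95.0, 2, 180.0))   # 10.0
    print(combined_coverage_deg(95.0, 2, 10.0))     # 180.0
    print(round(swath_width_m(15.0, 95.0), 1))      # 32.7
```

Under these assumptions, two 95-degree units cover 180 degrees with 10 degrees of shared view, which leaves some margin for aligning the two images.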

“Sense offers three different fields of view for a 40-meter range outdoors,” she added. “The long range helps a lot with facilities that want to install 3D cameras and have a lot of areas to cover. The install cost of Sense One is more economical than installing a larger number of consumer-grade depth cameras.”

“All you need to do is install cameras to the wire, mount them, and get their security certificates and IP address. For 50 meters indoors, you need only one or two units.”

“Because of its usefulness in sunlight, one company wants to put Sense Photonics’ LiDAR in an agricultural project,” said Bishop. The Sense Solid-State Flash LiDAR is available for preorders now.

“Our intention is to build reasonably priced cameras for integration on consumer-grade advanced driver-assist systems, not expensive ones for experimental use,” she said. “Automotive manufacturers are figuring out which sensors they need and don’t need. They’re now optimizing for only the sensors they need and want to make them invisible.”
