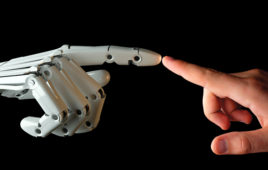

In the field of robotics, where is the line between remote control, software control and autonomous control? (No, I’m not going after the consciousness thing. It’s way too complicated.)

Part of the problem may have to do with our use of the word “intelligence”. We talk about the increasing “intelligence” of processors, and particularly about the cost of “intelligent” control dropping to the point where it is suddenly economical to pair a microcontroller with a motor in order to achieve new levels of performance in energy management or some other critical parameter. That, in turn, opens up new performance capabilities in robot design.

Increasingly, industrial robotics involves the use of vision systems to acquire information about the location and orientation of parts so that the robot system can interface smoothly with the “real world”. If any of you have been to an industrial trade show and witnessed the Delta robots making cookies, you know it is a very impressive sight to behold. Incredible throughput and accuracy. And that’s what it’s all about in industry: higher productivity and improved product quality.

But where is the line between remote control and automatic control? A remote manipulator for working in the nuclear industry, which was the big application that drove early robots, is a remote servo loop that operates a series of servo motors and controls and powers mechanical systems in order to do work that is dangerous to humans from a safe distance. The da Vinci medical robot is a phenomenally improved version of the same thing: a remote-controlled robot, guided by direct haptic inputs from a surgeon and with very sophisticated tactile feedback, whose end effectors operate a variety of surgical instruments and actually increase the precision and speed with which doctors may perform certain procedures.

Is this a robot? Sure!

When we watch welding and painting robots making cars, we are watching decades of technology development in action. There has been significant effort to improve the actuator hardware, and probably many man-years of software development to improve our description of the task and its safety and performance constraints, in order to create machines that are not only reliable but increasingly efficient at tasks where humans cannot compete on productivity. These are very sophisticated automatic applications, but certainly not autonomous. The boundaries of the application and the programming for it are narrowly defined. Again, it’s about repetition, speed and accuracy.

And, yes, we call these robots, too.

But increasingly, there is discussion about the next frontier of robotics. Where are the next big apps coming from? Most of the big robotics companies in Japan and Europe are talking about personal service robots. You can let your imagination run wild here. Anything is possible. Certainly the service robot for NASA is interesting because it, again, follows the concept of doing tasks where it is difficult for humans to operate.

Is a Jeep that can be programmed to find a path and drive from one place to another autonomously a robot? Yes, but we may be pushing the boundaries here just a bit. These applications fall into the realm of Artificial Intelligence, for which the programming and software languages were just being described for the first time about 30 years ago. And at this point we are forced into the debate about what intelligence is. In addition, are these systems capable of “learning”, and what is learning exactly? And more importantly, as all good science fiction movie watchers will ask, can a machine exceed its programming? (See? I didn’t even start on consciousness yet.)

These are all serious considerations for the future of robotics, which I will pick up further next week.
