
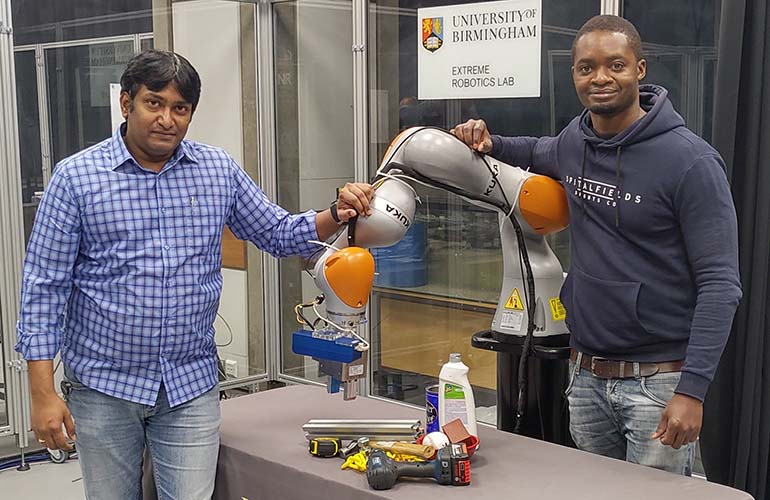

Dr. Naresh Marturi, senior research scientist in robotics (left), and Maxime Adjigble, robotics research engineer (right), were part of a team that developed a system to better handle nuclear waste.

There are more than 85,000 metric tons of spent nuclear fuel from commercial nuclear power plants, and 90 million gallons of waste from government weapons programs, in the U.S. today, according to the Government Accountability Office.

That number is rapidly growing. Every year, we add 2,000 metric tons of spent nuclear fuel. Handling and disposing of nuclear waste is a dangerous task that requires precision and accuracy. Researchers from the National Centre for Nuclear Robotics, led by the Extreme Robotics Lab at the University of Birmingham in the UK, are finding ways to help humans and robots work together to get the job done.

The researchers have developed a system built around a standard industrial robot, which uses a parallel-jaw gripper to handle objects and an Ensenso N35 3D camera to see the world around it. The system lets humans make the more complex decisions that AI isn’t equipped to make, while the robot determines how best to perform the tasks. The team uses three ways of sharing control between human and robot.

The first is semi-autonomy, where a human makes high-level decisions while the robot plans and executes them. The second is variable autonomy, where a human can switch between autonomous movements and direct, joystick-controlled movements. The third is shared control, where the human tele-operates some aspects of a task, like moving the robot arm towards an object, while the AI decides how to orient the gripper to best pick up that object.
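To make the difference between the last two modes concrete, here is a minimal Python sketch. It is purely illustrative and is not the lab’s software: the `Command` type, the `ControlMode` switch, and both function names are hypothetical, chosen only to show who supplies which part of a motion command in each mode.

```python
from dataclasses import dataclass
from enum import Enum


class ControlMode(Enum):
    """Hypothetical operator switch used for variable autonomy."""
    AUTONOMOUS = "autonomous"
    JOYSTICK = "joystick"


@dataclass(frozen=True)
class Command:
    """Hypothetical motion command for the arm and gripper."""
    translation: tuple  # (vx, vy, vz) arm motion
    orientation: tuple  # (roll, pitch, yaw) gripper orientation


def variable_autonomy(mode, planner_cmd, joystick_cmd):
    """Variable autonomy: the operator's switch decides whose command runs."""
    return planner_cmd if mode is ControlMode.AUTONOMOUS else joystick_cmd


def shared_control(joystick_cmd, planner_cmd):
    """Shared control: the human moves the arm, the planner orients the gripper."""
    return Command(translation=joystick_cmd.translation,
                   orientation=planner_cmd.orientation)


if __name__ == "__main__":
    human = Command(translation=(0.1, 0.0, 0.0), orientation=(0.0, 0.0, 0.0))
    planner = Command(translation=(0.0, 0.2, 0.0), orientation=(0.0, 1.57, 0.0))
    print(variable_autonomy(ControlMode.JOYSTICK, planner, human))
    print(shared_control(human, planner))
```

In the variable-autonomy function one party is in charge at a time, whereas in the shared-control function a single command is assembled from both the human’s input and the planner’s output.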

The robot is equipped with Ensenso’s 3D camera, which gives it spatial vision similar to human vision. Ensenso’s cameras work by having two cameras view objects from slightly different positions. They capture images that are similar in content but show differences in the position of objects.

Ensenso’s software combines these two images to create a point cloud model of the object. This method of viewing the world helps the robot to be more precise in its movements.
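The geometry behind this idea can be sketched in a few lines of Python. For an idealized, rectified pair of pinhole cameras, the depth of a point is inversely proportional to how far it shifts between the two images (its disparity). This is a textbook illustration, not Ensenso’s software; the function names and the focal length, baseline, and pixel values used in the example are made up.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth Z = f * B / d for a rectified stereo pair (pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def pixel_to_point(u, v, disparity_px, focal_px, baseline_m, cx, cy):
    """Back-project one left-image pixel (u, v) into a 3D point in metres.

    (cx, cy) is the principal point of the left camera, in pixels.
    """
    z = depth_from_disparity(focal_px, baseline_m, disparity_px)
    x = (u - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return (x, y, z)


if __name__ == "__main__":
    # Illustrative numbers only: 1000 px focal length, 10 cm baseline.
    point = pixel_to_point(u=740, v=480, disparity_px=50.0,
                           focal_px=1000.0, baseline_m=0.1,
                           cx=640.0, cy=480.0)
    print(point)
```

Repeating the back-projection for every pixel with a valid disparity yields a point cloud. Nearby objects shift more between the two views, so they have larger disparities and smaller depths.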

“The scene cloud is used by our system to automatically generate several stable gripping positions. Since the point clouds captured by the 3D camera are high-resolution and dense, it is possible to generate very precise gripping positions for each object in the scene,” said Dr. Naresh Marturi, senior research scientist at the National Centre for Nuclear Robotics. “Based on this, our ‘hypothesis ranking algorithm’ determines the next object to pick up, based on the robot’s current position.”
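The article does not describe how the ranking works internally. As a rough illustration of choosing the next object based on the robot’s current position, the Python sketch below simply sorts candidate grasps by straight-line distance from the gripper. That distance criterion, the `GraspCandidate` type, and the object names are all assumptions made for the example, not the team’s actual algorithm.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class GraspCandidate:
    """Hypothetical grasp hypothesis: which object, and where to grip it."""
    object_id: str
    position: tuple  # (x, y, z) in metres, in the robot's base frame


def rank_candidates(candidates, gripper_position):
    """Order candidates from nearest to farthest from the gripper.

    Assumption: distance is the only ranking criterion. A real system
    would likely also weigh grasp stability, reachability, and collisions.
    """
    return sorted(candidates,
                  key=lambda c: math.dist(c.position, gripper_position))


if __name__ == "__main__":
    scene = [
        GraspCandidate("pipe", (1.0, 0.2, 0.3)),
        GraspCandidate("can", (0.2, 0.1, 0.3)),
        GraspCandidate("block", (0.6, 0.0, 0.3)),
    ]
    ranked = rank_candidates(scene, gripper_position=(0.0, 0.0, 0.3))
    print([c.object_id for c in ranked])
```

Because the ranking depends on the gripper’s position, it would be recomputed after each pick, since the robot then starts from a new place.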

Researchers at the lab are currently developing an extension of the system that would be compatible with a multi-fingered hand instead of a jaw gripper. They’re also working on fully autonomous gripping methods, where the robot is controlled by an AI.
