By Wade Trappe | Professor of electrical and computer engineering at Rutgers University • IEEE Fellow, member of the IEEE Signal Processing Society

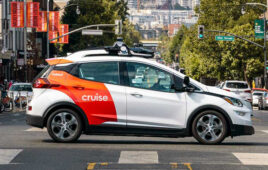

We spend a considerable amount of time driving: to work and home, for recreation and travel. In a completely autonomous world, we’ll be able to rent a vehicle pre-programmed to take us to a specified destination. Drivers will be able to disengage and read the newspaper while the car carries out the commute, seamlessly coordinating with other traffic to ensure the safety of its passengers.

This promise of freedom is very enticing, but society shouldn’t rush headlong into adopting automated vehicles. Transportation systems are complex, with many interacting pieces, and recent news stories have emphasized the challenges facing self-driving cars. Tragic Tesla crashes in China and the U.S. highlight the life-and-death consequences of the malfunctions or miscalculations that can occur with autonomous vehicles. Meanwhile, other stories have given us insight into what can go wrong should these systems and their data come into the crosshairs of cybercriminals, and a recent study found that people are excited about the possibility of “bullying” self-driving cars.

So how can these dangerous situations be prevented? Officially, Tesla’s Autopilot was meant to assist, not replace, the driver. We must slow our rush to “remove the human” from the equation: humans are still essential to driving and should remain so until the technology matures. This does not mean we should slow innovation; rather, we should work harder to deliver even more innovation that can make its way into vehicles. Here are four promising ways signal processing and data analytics can help address the challenges surrounding automated vehicles.

Properly assess and utilize data for safety decisions

Cars currently contain roughly 100 sensors, and future automobiles will likely be deployed with significantly more. These include accelerometers (for impact detection and motion measurement), pressure sensors (for air intake control, fuel consumption monitoring and tire conditions), temperature sensors (to monitor and control engine conditions, fuel temperature and passenger compartment temperature), and phase and speed sensors (camshaft/crankshaft phase sensors for motor control, and gear shaft speed sensors for transmission control). Angular rate sensors monitor the roll, pitch and yaw of a vehicle, which informs dynamic control systems, automatic distance control and navigation systems. Angle and position sensors monitor the position of gear levers, the steering wheel angle and mirror positioning. Radar, lidar and camera sensors enable newer applications such as blind spot monitoring, lane departure warning and automated driving.

These sensors provide an abundance of data that can serve as corroborating evidence: to detect and compensate for malfunctions, to recognize when a decision based on a single sensor type alone is about to go wrong, and to correct false data injected by those trying to hack our vehicles. When properly utilized, this wealth of data is the avenue to safety and robustness.
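As a rough illustration of the corroboration idea, the sketch below compares redundant estimates of the same quantity and flags any that disagree with the consensus. The sensor names, the median rule and the tolerance are illustrative assumptions, not a description of any production vehicle system.

```python
from statistics import median


def flag_inconsistent(readings, tolerance):
    """Return the ids of sensors whose reading is farther than `tolerance`
    from the median of all redundant readings of the same quantity.

    Assumes a majority of the sensors are healthy; the median then stays
    close to the true value even if one reading is faulty or spoofed."""
    consensus = median(readings.values())
    return {sid for sid, value in readings.items()
            if abs(value - consensus) > tolerance}


# Hypothetical example: three independent estimates of vehicle speed in m/s
# (wheel odometry, GPS and radar ego-motion), one of which has been tampered with.
speeds = {"wheel": 27.1, "gps": 26.8, "radar": 41.0}
print(flag_inconsistent(speeds, tolerance=2.0))  # {'radar'}
```

The median is used rather than the mean so that a single wildly wrong reading cannot drag the consensus toward itself; with only two sensors, a disagreement can be detected but not attributed, which is one argument for the redundancy described above.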

Merge multiple types of imaging sensors for fast object recognition

It’s evident that the Tesla crash videos recorded by Autopilot were not captured under ideal lighting conditions. Background objects blended into the vehicles that needed to be recognized, making it difficult for any computer to interpret the scene correctly. The problem was amplified by the short time available to “lock on,” given the vehicle’s speed and the imminent crash. Multiple sensor types used in conjunction could have helped: radar or lidar would not have been susceptible to the same difficulties the camera-based system likely encountered.

Tesla has since re-evaluated its Autopilot strategy, including the possibility of using radar in place of the camera. Two things are clear: the choice of radar is meant to avoid the environmental hurdles that arise with vision-based systems, and Tesla has collected a large amount of radar data that serves as the basis of its new Autopilot system.

Though probably an improvement, switching to a single sensor type is unlikely to solve all the problems that will arise in automating vehicles. While radar can cope with lighting-based challenges, numerous studies suggest lidar systems are superior in terms of tracking accuracy. Lidar, in turn, suffers degradation in fog, while cameras can recognize finer details of objects (such as license plate information). Cameras also support accurate assessment of visibility distance (notably in fog), which could be used to inform the driver that vehicular assistance services are unavailable or operating at degraded quality because fog is obscuring road lanes and other vehicles. Data fusion and the extraction of hidden correlations between sensor types are at the heart of modern signal processing. Merging radar, lidar and visual systems into fast and robust object recognition and tracking algorithms is an exciting opportunity where signal processing engineers can contribute.
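One of the simplest forms of the data fusion mentioned above is inverse-variance weighting of independent estimates of the same quantity. The sketch below assumes unbiased sensors with known, independent Gaussian errors; the range values and variances are hypothetical, and real trackers typically use Kalman-filter-style methods that extend this idea over time.

```python
def fuse(estimates):
    """Inverse-variance weighted fusion of independent, unbiased estimates.

    `estimates` is a list of (value, variance) pairs. Each sensor is weighted
    by 1/variance, so more precise sensors count for more. Returns the fused
    value and its variance, which is never larger than the best input's."""
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical range-to-obstacle estimates in metres: (value, variance).
clear = [(52.0, 4.0), (50.0, 1.0)]   # radar, lidar in clear air
foggy = [(52.0, 4.0), (50.0, 16.0)]  # same, with lidar degraded by fog
print(fuse(clear))  # (50.4, 0.8)
print(fuse(foggy))  # (51.6, 3.2)
```

In clear air the fused range leans toward the more precise lidar; once fog inflates the lidar variance, the same rule automatically shifts weight to radar. This is the kind of condition-aware weighting that a camera-based visibility estimate could help drive.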

Share data between vehicles to correct miscalculations or other errors

When considering future vehicular applications, we should recognize that other sensor types will be available and can provide valuable knowledge, such as weather conditions, road friction coefficients or road slopes. Road slope information is useful for coordinating braking among several vehicles, since slope affects how quickly a vehicle can accelerate or decelerate.
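To make the slope point concrete, here is a worked sketch using a textbook point-mass braking model, in which the maximum deceleration on a grade of angle θ is g(μ cos θ + sin θ). The friction coefficient and speed are assumed values, and the model ignores reaction time, aerodynamic drag and load transfer, so it only illustrates the trend.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def braking_distance(speed, mu, slope_deg):
    """Distance in metres to stop from `speed` (m/s) on a grade of `slope_deg`
    degrees (positive uphill, negative downhill) with tire-road friction
    coefficient `mu`.

    Point-mass model: maximum deceleration is g*(mu*cos(theta) + sin(theta)).
    Ignores reaction time, aerodynamic drag and load transfer."""
    theta = math.radians(slope_deg)
    decel = G * (mu * math.cos(theta) + math.sin(theta))
    if decel <= 0:
        raise ValueError("friction alone cannot stop the vehicle on this slope")
    return speed ** 2 / (2 * decel)


# Hypothetical case: 25 m/s (90 km/h) on dry asphalt, mu assumed to be 0.7.
for grade in (-6, 0, 6):
    print(grade, round(braking_distance(25.0, 0.7, grade), 1))
```

Under these assumptions the stopping distance is about 54 m on a 6° downhill, 46 m on the flat and 40 m on a 6° uphill. A vehicle on the downhill stretch of a group therefore needs a larger gap to brake safely, which is why shared slope information matters when braking is coordinated.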

Data sharing between vehicles, along with cloud-based computing services, opens up many other possibilities for improving vehicle safety. Data shared between vehicles will allow the signal processing algorithms running on each vehicle to learn about the conditions that nearby vehicles are experiencing.

Further, data measured by the multitude of sensors, whether within a single vehicle or across several, can be used to correct malfunctioning or poorly calibrated sensors. Currently, vehicular sensors are recalibrated by bringing a vehicle to a certified garage to update or replace the sensor. The vehicular setting, however, is inherently distributed: numerous vehicles frequently make measurements that are correlated across many dimensions. This makes it possible to report these measurements to cloud servers that perform large-scale data analytics to accurately identify the corrections needed.
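A minimal sketch of such cloud-side analytics follows, under strong simplifying assumptions: vehicles reporting from the same "cell" (for example, one road segment in one time window) observe the same true quantity, and each sensor's error is a constant additive offset. The function name, report format and data are hypothetical; a real service would also need time alignment, drift models and safeguards against malicious reports.

```python
from collections import defaultdict
from statistics import median


def estimate_biases(reports):
    """Estimate a constant offset for each vehicle's sensor.

    `reports` is a list of (vehicle_id, cell_id, value) tuples. The consensus
    for a cell is the median of all reports in it; a vehicle's bias is the
    median of its deviations from those consensus values."""
    by_cell = defaultdict(list)
    for _, cell, value in reports:
        by_cell[cell].append(value)
    consensus = {cell: median(values) for cell, values in by_cell.items()}

    deviations = defaultdict(list)
    for vehicle, cell, value in reports:
        deviations[vehicle].append(value - consensus[cell])
    return {vehicle: median(devs) for vehicle, devs in deviations.items()}


# Hypothetical ambient-temperature reports (degrees C) from three vehicles
# passing through two cells; v3's sensor reads roughly 2 degrees high.
reports = [
    ("v1", "A", 20.0), ("v2", "A", 20.2), ("v3", "A", 22.1),
    ("v1", "B", 18.0), ("v2", "B", 17.9), ("v3", "B", 20.0),
]
biases = estimate_biases(reports)
print({v: round(b, 2) for v, b in biases.items()})
```

Here v3 comes out with an estimated bias of about +1.95, while v1 and v2 stay within a tenth of a degree of zero; subtracting the estimate from v3's raw readings would act as a remote recalibration without a trip to the garage.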

Understand human driving behavior through signal processing advancements

Going beyond the technical aspects, what is often forgotten is that transportation is also a complex social fabric through which we interact with each other. This merging of the “cyberphysical” with the social makes automating vehicles an extremely hard problem. While driving, we observe cues from other road users that suggest we should drive more cautiously (e.g., a pedestrian looking at their smartphone while walking toward an intersection) or even avoid certain driving scenarios (e.g., an overzealous driver swerving through lanes).

While it’s unreasonable to expect self-driving cars to make the same social observations that we make as humans, we can expect technology to help us be as aware and informed as possible. The signal processing community has already made advances in estimating driver distraction using in-vehicle sensors and cueing the driver to focus on the road. However, there are many other opportunities for signal processing engineers to analyze human behavior data associated with driving, which will be essential for improving driver and pedestrian safety.

The future of vehicular systems is data- and sensor-driven. Vehicles will become increasingly networked and outfitted with sensors, sharing their data with a variety of in-vehicle and cloud-based computing services. The societal benefits of improved vehicular systems range from the energy efficiency of swarm driving to the potential to save many lives should the technology mature. While this future is exciting, engineers, researchers and technologists must act quickly to develop the new signal and information processing innovations required to make future vehicular systems safe.

Wade Trappe is an IEEE Fellow, member of the IEEE Signal Processing Society, and Professor of electrical and computer engineering at Rutgers University. Dr. Trappe received his B.A. in mathematics from The University of Texas at Austin and his Ph.D. in applied mathematics and scientific computing from the University of Maryland.
