Shelley, Stanford’s autonomous Audi TTS, performs at Thunderhill Raceway Park. (Credit: Kurt Hickman)

Researchers at Stanford University have developed a new way of controlling autonomous cars that integrates prior driving experiences – a system that will help the cars perform more safely in extreme and unknown circumstances. Tested at the limits of friction on a racetrack using Niki, Stanford’s autonomous Volkswagen GTI, and Shelley, Stanford’s autonomous Audi TTS, the system performed about as well as an existing autonomous control system and an experienced racecar driver.

“Our work is motivated by safety, and we want autonomous vehicles to work in many scenarios, from normal driving on high-friction asphalt to fast, low-friction driving in ice and snow,” said Nathan Spielberg, a graduate student in mechanical engineering at Stanford and lead author of the paper about this research, published March 27 in Science Robotics. “We want our algorithms to be as good as the best skilled drivers—and, hopefully, better.”

While current autonomous cars might rely on in-the-moment evaluations of their environment, the control system these researchers designed incorporates data from recent maneuvers and past driving experiences – including trips Niki took around an icy test track near the Arctic Circle. Its ability to learn from the past could prove particularly powerful, given the abundance of autonomous car data researchers are producing in the process of developing these vehicles.

Physics and learning with a neural network

Control systems for autonomous cars need information about the available road-tire friction. This information dictates how hard the car can brake, accelerate and steer while staying on the road in critical emergency scenarios. If engineers want to safely push an autonomous car to its limits, such as having it plan an emergency maneuver on ice, they have to provide it with details like the road-tire friction in advance. This is hard to do in the real world, where friction varies and is often difficult to predict.
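A common simplified way to express this limit is the friction circle: the combined longitudinal and lateral acceleration of the car cannot exceed mu * g, where mu is the road-tire friction coefficient. The article does not say which tire model the Stanford controllers use, so the following is only a generic point-mass sketch. The function names are hypothetical and the friction values (about 1.0 for dry asphalt, about 0.1 for ice) are assumed, textbook-style figures. It shows why the same curve allows far less speed on ice.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def within_friction_circle(a_long, a_lat, mu):
    """True if the combined braking/acceleration and cornering demand
    (both in m/s^2) fits inside a friction circle of radius mu * g."""
    return math.hypot(a_long, a_lat) <= mu * G


def max_cornering_speed(mu, radius):
    """Highest steady speed (m/s) on a curve of the given radius (m) when all
    available friction is used for cornering: v = sqrt(mu * g * R)."""
    return math.sqrt(mu * G * radius)


if __name__ == "__main__":
    # Assumed friction values: roughly 1.0 on dry asphalt, 0.1 on ice.
    for surface, mu in [("dry asphalt", 1.0), ("ice", 0.1)]:
        print(f"{surface}: {max_cornering_speed(mu, 50.0):.1f} m/s on a 50 m radius")
```

Running it prints 22.1 m/s for dry asphalt and 7.0 m/s for ice, which is why a controller that assumes the wrong friction value can plan a maneuver the tires cannot deliver.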

To develop a more flexible, responsive control system, the researchers built a neural network that combines data from past driving at Thunderhill Raceway in Willows, California, and at a winter test facility with foundational knowledge provided by 200,000 physics-based trajectories.
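The article does not describe how the 200,000 physics-based trajectories were generated or what the network's inputs were. As a rough illustration only, the sketch below builds training pairs from a hypothetical toy model in which friction caps lateral acceleration. The model, the function names and all numeric ranges are assumptions. Friction varies from trajectory to trajectory but is left out of the inputs, echoing the point that the network lacked explicit friction information, and logged measurements can be appended in the same format.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2


def simulate_trajectory(mu, speed, n_steps, rng):
    """Toy physics model (hypothetical): the tires can deliver at most
    mu * g of lateral acceleration, and yaw rate follows r = a_y / v."""
    steps = []
    for _ in range(n_steps):
        a_cmd = rng.uniform(-12.0, 12.0)        # requested lateral accel, m/s^2
        a_y = max(-mu * G, min(mu * G, a_cmd))  # saturate at the friction limit
        steps.append((a_cmd, a_y / speed))      # (request, resulting yaw rate in rad/s)
    return steps


def make_windows(steps, speed, history):
    """Turn one trajectory into (features, target) pairs.

    Features: the last `history` (request, yaw rate) pairs, the next request
    and the speed. The friction coefficient mu is deliberately left out, so a
    model must infer the available grip from recent behaviour."""
    pairs = []
    for t in range(history, len(steps)):
        past = [x for step in steps[t - history:t] for x in step]
        a_next, r_next = steps[t]
        pairs.append((past + [a_next, speed], r_next))
    return pairs


def build_dataset(n_trajectories, n_steps=50, history=5, seed=0, logged=()):
    """Mix simulated samples with any logged (features, target) pairs."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_trajectories):
        mu = rng.uniform(0.1, 1.0)       # assumed range, roughly ice to dry asphalt
        speed = rng.uniform(10.0, 40.0)  # m/s
        steps = simulate_trajectory(mu, speed, n_steps, rng)
        data.extend(make_windows(steps, speed, history))
    data.extend(logged)  # measured data must use the same feature layout
    return data
```

For example, `build_dataset(3)` returns 3 × 45 = 135 pairs, each with 12 features. A neural network trained on such pairs would then be fit in a separate step, which this sketch does not cover.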

At Thunderhill Raceway, the neural network controller was implemented on Niki, the autonomous Volkswagen GTI, and tested at the limits of handling (the ability of a vehicle to maneuver a track or road without skidding out of control).

“With the techniques available today, you often have to choose between data-driven methods and approaches grounded in fundamental physics,” said J. Christian Gerdes, professor of mechanical engineering and senior author of the paper. “We think the path forward is to blend these approaches in order to harness their individual strengths. Physics can provide insight into structuring and validating neural network models that, in turn, can leverage massive amounts of data.”

The group ran comparison tests for their new system at Thunderhill Raceway. First, Shelley lapped the course under the control of the physics-based autonomous system, which was preloaded with set information about the course and conditions. Over 10 consecutive trials on the same course, Shelley and a skilled amateur driver produced comparable lap times. Then the researchers loaded Niki with the new neural network system. The car performed similarly with the learned and physics-based systems, even though the neural network lacked explicit information about road friction.

In simulated tests, the neural network system outperformed the physics-based system in both high-friction and low-friction scenarios. It did particularly well in scenarios that mixed those two conditions.

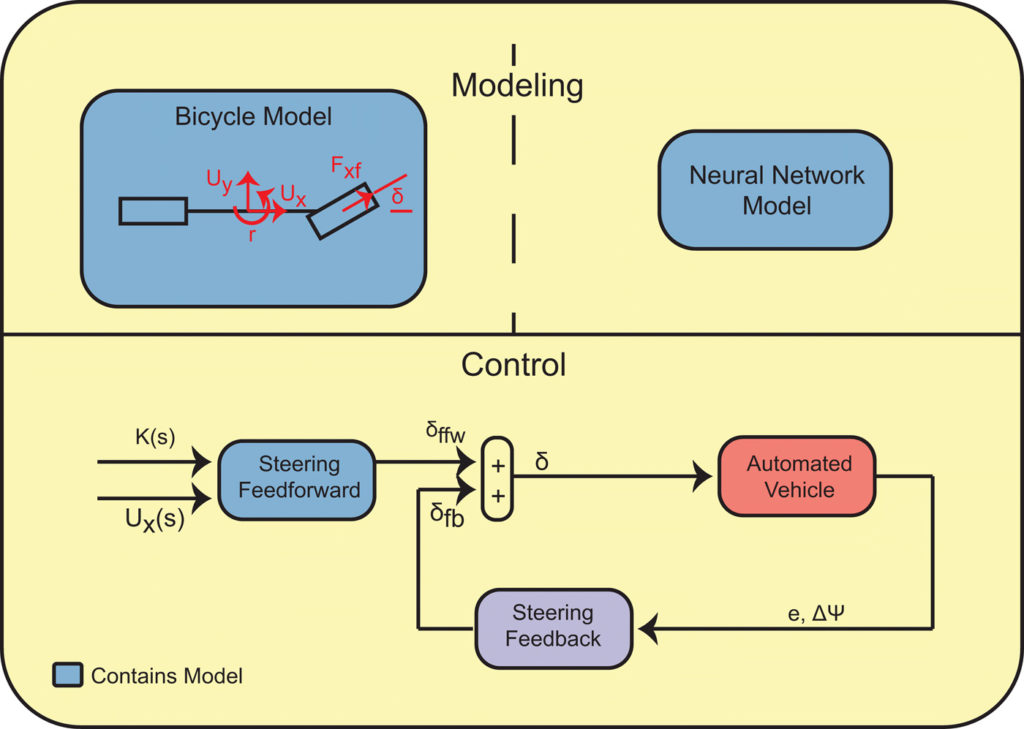

Simple feedforward-feedback control structure used for path tracking on an automated vehicle. (Credit: Stanford University)
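One common form of such a structure, shown here for illustration only, adds a feedforward steering angle computed from the path curvature to a feedback correction based on tracking error. The sketch assumes a kinematic-plus-understeer-gradient feedforward term and a lookahead feedback term; the class, function names and parameter values are hypothetical and are not taken from the figure or the paper.

```python
from dataclasses import dataclass


@dataclass
class SteeringParams:
    wheelbase: float = 2.5           # m (hypothetical)
    understeer_grad: float = 0.0035  # rad per m/s^2 of lateral accel (hypothetical)
    k_p: float = 0.05                # rad per m of projected error (hypothetical)
    lookahead: float = 15.0          # m (hypothetical)


def feedforward_steer(curvature, speed, p):
    """Steady-state steering angle (rad) for the path curvature (1/m) at this
    speed (m/s): kinematic term L * k plus understeer term K * v^2 * k."""
    return (p.wheelbase + p.understeer_grad * speed ** 2) * curvature


def feedback_steer(lateral_error, heading_error, p):
    """Lookahead feedback on the projected error e + x_la * dpsi.
    Sign convention: positive error = left of path, positive steer = left."""
    return -p.k_p * (lateral_error + p.lookahead * heading_error)


def steering_command(curvature, speed, lateral_error, heading_error, p):
    """Total steering command: feedforward plus feedback."""
    return feedforward_steer(curvature, speed, p) + feedback_steer(
        lateral_error, heading_error, p
    )
```

With the car on a straight path (zero curvature) but 0.5 m to the left, `steering_command(0.0, 20.0, 0.5, 0.0, SteeringParams())` returns about -0.025 rad, a small correction back to the right. In this split, the feedforward term supplies the steering a vehicle model predicts the path needs, and the feedback term only has to correct the remaining error.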

An abundance of data

The results were encouraging, but the researchers stress that their neural network system does not perform well in conditions outside those it has experienced. They say that as autonomous cars generate more data to train the network, the cars should be able to handle a wider range of conditions.

“With so many self-driving cars on the roads and in development, there is an abundance of data being generated from all kinds of driving scenarios,” Spielberg said. “We wanted to build a neural network because there should be some way to make use of that data. If we can develop vehicles that have seen thousands of times more interactions than we have, we can hopefully make them safer.”

Editor’s Note: This article was republished from Stanford University.
