NVIDIA Corp.’s research teams have been working for several years to apply graphics processing unit (GPU) technology to accelerate reinforcement learning. The Santa Clara, Calif.-based company last week announced the preview release of Isaac Gym, its new physics simulation environment for artificial intelligence and robotics research.

Reinforcement learning (RL) is one of the most promising research areas in machine learning, and it has demonstrated great potential for solving complex problems, said NVIDIA. RL-based systems have achieved superhuman performance in challenging tasks, ranging from classic strategy games such as Go and chess to real-time computer games like StarCraft and DOTA.

Reinforcement learning approaches also hold promise for robotics applications, such as solving a Rubik’s Cube or learning locomotion by imitating animals. NVIDIA claimed that training for RL is now more accessible because tasks that once required thousands of CPU (central processing unit) cores can now be trained on a single GPU with Isaac Gym.

An RL supercomputer with Isaac Gym, NVIDIA GPUs

Until now, most robotics researchers were forced to use clusters of CPU cores for the physically accurate simulations needed to train RL algorithms. In one of the better-known projects, the OpenAI team used almost 30,000 CPU cores (920 computers with 32 cores each) to train its robot to solve a Rubik’s Cube.

In a similar task, Learning Dexterous In-Hand Manipulation, OpenAI used a cluster of 384 systems with 6,144 CPU cores and eight Volta V100 GPUs. It required close to 30 hours of training to achieve its best results. This in-hand cube object orientation is a challenging task for dexterous manipulation, with complex physics and dynamics, many contacts, and a high-dimensional continuous control space.

Isaac Gym includes an example of this cube manipulation task so that researchers can recreate the OpenAI experiment. The example supports training both recurrent and feed-forward neural networks, as well as domain randomization of physics properties, which helps with simulation-to-reality transfer. With Isaac Gym, researchers can achieve the same level of success as OpenAI’s supercomputer on a single A100 GPU in about 10 hours, said NVIDIA.

End-to-end GPU RL

Isaac Gym achieves these results by leveraging NVIDIA’s PhysX GPU-accelerated simulation engine, allowing it to gather the experience data required for robotics RL.

In addition to fast physics simulations, Isaac Gym also enables observation and reward calculations to take place on the GPU, thereby avoiding significant performance bottlenecks, claimed NVIDIA. In particular, costly data transfers between the GPU and the CPU are eliminated.

When implemented this way, Isaac Gym enables a complete end-to-end GPU RL pipeline, said the company.

Isaac Gym provides API

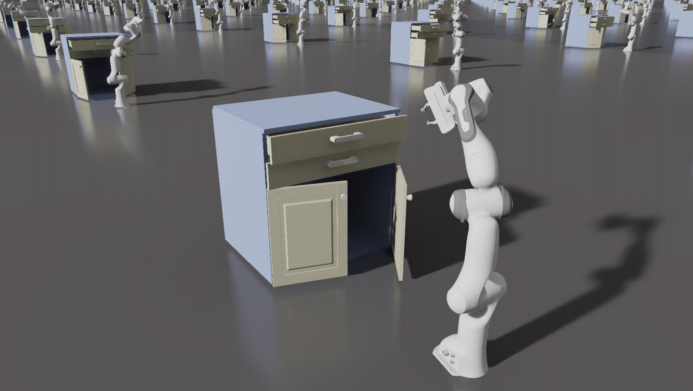

Isaac Gym provides a basic application programming interface (API) for creating and populating a scene with robots and objects, and it supports loading data from the URDF and MJCF file formats. Each environment can be duplicated as many times as needed, and the copies are simulated simultaneously without interacting with one another.

Isaac Gym provides a PyTorch tensor-based API to access the results of physics simulation work. This allows RL observation and reward calculations to be built using the PyTorch JIT runtime system, which dynamically compiles the Python code that does these calculations into CUDA code that runs on the GPU.
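As a rough illustration of the idea, a reward function can be written in ordinary Python and decorated with `torch.jit.script`, PyTorch's TorchScript compiler. This is only a sketch: the function name, the reward formula, the tensor shapes, and the environment count are assumptions made for the example and are not taken from Isaac Gym. The code falls back to the CPU when no CUDA device is present.

```python
import torch


@torch.jit.script
def compute_reward(rot_err: torch.Tensor,
                   actions: torch.Tensor,
                   action_penalty_scale: float) -> torch.Tensor:
    # One reward per environment: penalize orientation error and large actions.
    action_cost = torch.sum(actions * actions, dim=-1)
    return -rot_err - action_penalty_scale * action_cost


# Use the GPU when one is available; the same code also runs on the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"
num_envs = 4096  # hypothetical number of parallel environments

rot_err = torch.rand(num_envs, device=device)       # made-up orientation errors
actions = torch.zeros(num_envs, 20, device=device)  # made-up action tensor
rewards = compute_reward(rot_err, actions, 0.01)    # stays on `device`
```

Because the inputs and the output are all tensors on the same device, a function like this never needs to move per-environment data to the host.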

Observation tensors can be used as inputs to a policy inference network, and the resulting action tensors can be fed directly back into the physics system. Rollouts of observation, reward, and action buffers can stay on the GPU for the entire learning process, eliminating the need to copy data back to the CPU, said NVIDIA.
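The loop described above might look roughly like the following sketch. The simulator here is a hypothetical stand-in function (`fake_physics_step`), not the Isaac Gym API, and the policy is a single linear layer chosen only to keep the example short. The point is that the rollout buffers are preallocated on the same device as the policy and are filled without any host transfers.

```python
import torch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
num_envs, horizon, obs_dim, act_dim = 1024, 16, 8, 2  # illustrative sizes

# Stand-in for a policy network; a real policy would be larger.
policy = torch.nn.Linear(obs_dim, act_dim).to(device)

# Preallocate rollout storage on the same device as the simulation tensors.
obs_buf = torch.zeros(horizon, num_envs, obs_dim, device=device)
act_buf = torch.zeros(horizon, num_envs, act_dim, device=device)
rew_buf = torch.zeros(horizon, num_envs, device=device)


def fake_physics_step(obs, actions):
    """Hypothetical simulator stand-in: returns next observations and rewards."""
    next_obs = obs + 0.01 * torch.randn_like(obs)
    next_obs[:, :actions.shape[1]] += 0.1 * actions
    reward = -next_obs.pow(2).sum(dim=-1)
    return next_obs, reward


obs = torch.randn(num_envs, obs_dim, device=device)
with torch.no_grad():  # data collection only, no gradients needed
    for t in range(horizon):
        actions = policy(obs)  # policy inference on the device
        obs_buf[t] = obs
        act_buf[t] = actions
        obs, rew_buf[t] = fake_physics_step(obs, actions)
```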

NVIDIA said this setup permits tens of thousands of simultaneous environments on a single GPU, enabling researchers to easily run experiments that previously required an entire data center locally on their desktops.

Source: NVIDIA

Isaac Gym also includes a basic Proximal Policy Optimization (PPO) implementation and a straightforward RL task system, but users may substitute alternative task systems or RL algorithms as desired. While the included examples use PyTorch, users should also be able to integrate with TensorFlow-based RL systems with some further customization.
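For readers unfamiliar with PPO, its core is a clipped surrogate objective that limits how far a policy update can move from the policy that collected the data. The sketch below computes that loss in plain Python for a small batch. It is not Isaac Gym's implementation; the function name and the default clipping range of 0.2 are assumptions for illustration.

```python
import math


def ppo_clip_loss(new_log_probs, old_log_probs, advantages, clip_eps=0.2):
    """Mean clipped surrogate loss (to be minimized) over a batch of samples."""
    total = 0.0
    for new_lp, old_lp, adv in zip(new_log_probs, old_log_probs, advantages):
        ratio = math.exp(new_lp - old_lp)  # pi_new(a|s) / pi_old(a|s)
        clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
        # Take the more pessimistic of the unclipped and clipped objectives.
        total += min(ratio * adv, clipped_ratio * adv)
    return -total / len(advantages)


# A probability ratio of 2.0 with a positive advantage is clipped to 1.2.
loss = ppo_clip_loss([math.log(2.0)], [0.0], [1.0])
```

In a tensor-based pipeline the same arithmetic would be applied to whole batches at once rather than in a Python loop.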

NVIDIA listed the following additional features of Isaac Gym:

- Support for a variety of environment sensors – position, velocity, force, torque, etc.

- Runtime domain randomization of physics parameters

- Jacobian/inverse kinematics support
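The domain randomization item in the list above can be pictured as giving each simulated environment its own physics parameters, drawn from configured ranges. The following is a minimal sketch in plain Python; the parameter names and ranges are hypothetical and are not taken from Isaac Gym.

```python
import random

# Hypothetical parameter ranges; real values depend on the robot and task.
PARAM_RANGES = {
    "friction": (0.5, 1.5),
    "object_mass_scale": (0.8, 1.2),
    "joint_damping_scale": (0.7, 1.3),
}


def sample_physics_params(rng):
    """Draw one set of physics parameters uniformly from the configured ranges."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}


def randomize_environments(num_envs, seed=0):
    """Give every simulated environment its own independently sampled parameters."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    return [sample_physics_params(rng) for _ in range(num_envs)]


env_params = randomize_environments(num_envs=4)
```

Randomizing at runtime would mean calling a function like `sample_physics_params` again during training, for example when an environment resets, so the policy keeps seeing varied dynamics.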

The company said its research team has been applying Isaac Gym to a wide variety of projects, which are described on its blog.

Getting started with NVIDIA Isaac Gym

NVIDIA made its Isaac software developer’s kit (SDK) available last year. It recommended that researchers or academics interested in reinforcement learning for robotics applications download and try Isaac Gym.

The core functionality of Isaac Gym will be made available as part of the NVIDIA Omniverse Platform and NVIDIA’s Isaac Sim, a robotics simulation platform built on Omniverse. Until then, NVIDIA said it is making this standalone preview release available to researchers and academics to show the possibilities of end-to-end GPU-based RL and help accelerate their work.
