Our recent robotics trends webinar included three great speakers. Here’s the presentation from Gary McMurray. He is a Principal Research Engineer and Division Chief at the Georgia Tech Research Institute Food Processing Technology Division. McMurray’s research has focused on the development of robotic technologies and solutions for the manufacturing and agribusiness communities. To watch the webinar on demand, simply visit: http://www.designworldonline.com/innovative-trends-in-robotics/

I work at Georgia Tech and I’m going to be talking about some of the robotics work we do. Principally, I decided to focus on vision-guided robotics because this has been a topic of great interest in the research world for several decades, but I think it’s finally starting to become more practical and hopefully will be getting into the real world shortly.

The first thing I wanted to show was this video on the left. This is traditionally how robotics works in the industrial world, where we have hard stops, we have a very structured environment and the robot is able to function because things are very precisely placed. On the right is just the mock up.

It’s not a real robot, for those of you who are thinking of buying one, but this is what we would like a robot to do: be able to work in an unstructured environment. To be able to work in our house, to be able to pick things up, refill things, move around and accomplish all the different tasks that we do. In this case, it’s cleaning a house, with lots of kids’ toys and everything. That’s a really, really hard problem for a robot.

Really, what we’re trying to emphasize is the shift from these very simple pick and place operations to having the robots work in these unstructured environments. To be able to do this, we really need to have this robust perception or this ability to be able to see very clearly where things are, recognize what things are and then be able to interact with the objects.

There are really two different approaches that we have to this. The first approach that I’m going to talk about is what we call more of a Model Based Approach, and by this I mean we assume that we have a CAD model of the object that we’re picking up. The way this works is that you first of all take an image.

In this case, we’re taking an image and we’re going to pick up that book which is sitting on the table, the OpenCV book. You would take a picture. Then you would identify some of the key points on the target. You can then look at it from multiple views, and this allows you to begin to estimate the position and orientation of the object. Once you have this, you can begin to match the key points that you’ve identified across the different images that you take. This is what allows you to build that global estimate of the position.
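The matching step can be pictured with a small sketch. The talk does not say which matcher is used, so this assumes a common choice: nearest-neighbour matching of key point descriptors with a ratio test. The function name `match_keypoints`, the `ratio` value and the three-element descriptors are all hypothetical; real descriptors are much longer.

```python
import math

def match_keypoints(desc_a, desc_b, ratio=0.75):
    """Match descriptors from view A to view B with a nearest-neighbour ratio test.

    Returns (index_in_a, index_in_b) pairs. A match is kept only when the best
    candidate is clearly closer than the second best, which drops ambiguous
    key points instead of guessing.
    """
    matches = []
    for i, da in enumerate(desc_a):
        # Distance from this descriptor to every descriptor in the other view.
        ranked = sorted((math.dist(da, db), j) for j, db in enumerate(desc_b))
        if len(ranked) < 2:
            continue
        (best, j), (second, _) = ranked[0], ranked[1]
        if best < ratio * second:
            matches.append((i, j))
    return matches

# Hypothetical descriptors for three key points seen in two views.
view_a = [(0.10, 0.90, 0.30), (0.80, 0.20, 0.50), (0.45, 0.55, 0.40)]
view_b = [(0.82, 0.19, 0.52), (0.11, 0.88, 0.31), (0.10, 0.10, 0.90)]

matches = match_keypoints(view_a, view_b)
print(matches)  # the third key point in view A is ambiguous and is left unmatched
```

Rejecting ambiguous key points matters here because a single wrong correspondence can noticeably skew the position estimate built from the matches.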

Once you have this idea of where the object is located, you can compare that to the CAD model. Once you start to compare it to the CAD model, you have the exact position of not only the key points that you identified but all of the points on the object, okay, so you know exactly where those points are located. If you’re interested in maybe manipulating just the edge of the object, you now know exactly where that edge of the object is.
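To make that idea concrete, here is a minimal sketch under strong assumptions: the problem is flattened to 2D, the matched key points are already paired with their CAD counterparts, and the fit is a plain least-squares rigid alignment. A real system would estimate a full 3D pose, and the talk does not say which solver is used. The names `fit_pose_2d` and `model_to_scene` and all coordinates are hypothetical.

```python
import math

def fit_pose_2d(model_pts, scene_pts):
    """Least-squares rotation and translation taking model_pts onto scene_pts."""
    n = len(model_pts)
    mcx = sum(x for x, _ in model_pts) / n
    mcy = sum(y for _, y in model_pts) / n
    scx = sum(x for x, _ in scene_pts) / n
    scy = sum(y for _, y in scene_pts) / n
    dot = cross = 0.0
    for (mx, my), (sx, sy) in zip(model_pts, scene_pts):
        ax, ay = mx - mcx, my - mcy      # model point relative to its centroid
        bx, by = sx - scx, sy - scy      # scene point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)       # best-fit rotation angle
    c, s = math.cos(theta), math.sin(theta)
    tx = scx - (c * mcx - s * mcy)       # translation that aligns the centroids
    ty = scy - (s * mcx + c * mcy)
    return theta, tx, ty

def model_to_scene(pt, pose):
    """Map any CAD-model point into the scene using the fitted pose."""
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    x, y = pt
    return (c * x - s * y + tx, s * x + c * y + ty)

# Three matched key points (hypothetical book corners, in mm).
model_keypoints = [(0.0, 0.0), (200.0, 0.0), (200.0, 150.0)]
scene_keypoints = [(300.0, 100.0), (300.0, 300.0), (150.0, 300.0)]

pose = fit_pose_2d(model_keypoints, scene_keypoints)
# A fourth corner that was never detected is still located through the model.
hidden_corner = model_to_scene((0.0, 150.0), pose)
print(round(math.degrees(pose[0]), 3), hidden_corner)
```

The last two lines are the point of the exercise: once the pose is known, any point in the CAD model, including an edge or corner that was never matched, can be placed in the scene.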

It also allows you to do inspection tasks, quality control tasks and things like this at the same time. Because you have that CAD model, you can go back to your original image, begin to identify the edges and begin to calculate errors, and this allows you to build up the full model of the position. This now allows you to manipulate it.
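As an illustration of the error calculation, the sketch below compares measured edge points against where the CAD model says they should be. It assumes a 2D pose given as `(theta, tx, ty)` and point pairs that already correspond; the function name `inspect_edge`, the tolerance and all numbers are hypothetical.

```python
import math

def inspect_edge(model_pts, measured_pts, pose, tolerance):
    """Compare measured edge points with the positions the CAD model predicts.

    Returns the per-point deviation, the RMS deviation and the indices of
    points that are further from the model than the tolerance.
    """
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    errors = []
    for (mx, my), (px, py) in zip(model_pts, measured_pts):
        ex = c * mx - s * my + tx        # expected position from the model
        ey = s * mx + c * my + ty
        errors.append(math.hypot(px - ex, py - ey))
    rms = math.sqrt(sum(e * e for e in errors) / len(errors))
    failed = [i for i, e in enumerate(errors) if e > tolerance]
    return errors, rms, failed

pose = (0.0, 10.0, 20.0)                 # no rotation, shifted by (10, 20) mm
model_edge = [(0.0, 0.0), (100.0, 0.0), (100.0, 50.0)]
measured_edge = [(10.0, 20.0), (110.3, 20.4), (110.0, 72.0)]

errors, rms, failed = inspect_edge(model_edge, measured_edge, pose, tolerance=1.0)
print(failed)  # indices of edge points that deviate by more than 1 mm
```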

I’ll show you this image as well, because this shows it can actually work in tracking transparent objects too. It’s not just these traditional hard, dry, fixed objects that you would think of in a traditional manufacturing setting. We’re also beginning to work on these transparent objects, which are really much, much harder to see and track. In this case, you can actually see us tracking the location of a wine glass in almost real time as the person moves it around.

Again, I’m just trying to show that these things can happen robustly and in a somewhat real environment. How would you use this type of technology? I’m going to show just two videos on some work that we’ve done with a couple of customers. On the right hand side, it’s working in what we call a fixtureless environment, where it’s actually simulating a drilling operation.

Traditionally, when you do a drilling operation you have pretty big jigs and fixtures. We force parts to be in very precise locations, then the robot would come in or a person would come in manually and drill the holes. Here we’re actually doing it without all that. This is strictly a vision-based system. Because we can recognize the object, we can match it to the CAD model, and so we can very precisely control where we do the drilling.

The left hand side is actually doing an assembly operation for a car door, where it’s taking a component and overlaying it on the door. Again, what we’re trying to show here is that it’s really a fairly complicated environment. Looking at that door, there are thousands of different features that you can track, but we’re still able to do these things in pretty much real time.

This type of flexibility is starting to come out and we think it will have a really big impact on manufacturing, because you can start to move away from a lot of the capital investment you need in infrastructure to go and do these various tasks.

Another approach that we have to this is what we call Uncalibrated Visual Servoing. It looks somewhat complicated here. In this case, we have a robot that we actually do not have a very precise model for, and also our vision system is not calibrated. What that means is you do not know precisely where the cameras are in the workspace. As a matter of fact, they can actually be moving. They can be located on the robot. They can even be located on a different robot that’s moving, so they can have their own separate function.

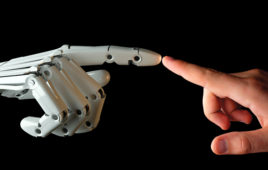

The point of this is we’re actually trying to mimic very closely the human hand/eye coordination, and that’s a really, really hard thing to do. It is unlike the previous example, where we had the CAD model and worked out exactly, in Cartesian space, that the book is located at very specific XYZ coordinates and rotation angles.

If I were to ask you to pick up a cup or something located in front of you, I don’t tell you the exact coordinates of the cup, you simply move your hand and you think, “Move left, move right, move further away. Move up, down. Okay, grab it,” and move on. We’re really trying to mimic more what the human does.
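The "move left, move right" idea can be sketched in a few lines. The talk does not name the algorithm, so this assumes one widely used formulation of uncalibrated visual servoing: the controller keeps a rough estimate of how robot moves change what the camera sees (an image Jacobian) and corrects that estimate after every move with a Broyden rank-one update. The function `hidden_camera` is a hypothetical stand-in for the real robot and camera, and the 2D setup, gain and all numbers are illustrative only.

```python
import math

def hidden_camera(q):
    """Stand-in for the real robot plus camera: robot command -> pixel feature.

    The controller never reads these numbers; it only sees the returned pixels.
    """
    x, y = q
    return (320.0 + 1.93 * x - 0.52 * y, 240.0 + 0.52 * x + 1.93 * y)

def solve_2x2(J, e):
    """Return dq such that J @ dq = e (Cramer's rule)."""
    (a, b), (c, d) = J
    det = a * d - b * c
    return ((d * e[0] - b * e[1]) / det, (a * e[1] - c * e[0]) / det)

def broyden_update(J, dq, dy):
    """Rank-one correction so the new J maps the last move dq to the observed dy."""
    pred = (J[0][0] * dq[0] + J[0][1] * dq[1],
            J[1][0] * dq[0] + J[1][1] * dq[1])
    norm2 = dq[0] ** 2 + dq[1] ** 2
    return [[J[r][k] + (dy[r] - pred[r]) * dq[k] / norm2 for k in range(2)]
            for r in range(2)]

def servo(target, q=(0.0, 0.0), gain=0.5, tol=1e-3, max_moves=100):
    """Drive the observed pixel feature to the target without any calibration."""
    J = [[2.0, 0.0], [0.0, 2.0]]         # rough initial guess, deliberately wrong
    y = hidden_camera(q)
    moves = 0
    while moves < max_moves:
        err = (target[0] - y[0], target[1] - y[1])
        if math.hypot(err[0], err[1]) < tol:
            break                        # close enough in the image: stop
        full = solve_2x2(J, err)
        dq = (gain * full[0], gain * full[1])   # take a cautious partial step
        q = (q[0] + dq[0], q[1] + dq[1])
        y_new = hidden_camera(q)
        # Learn from what the camera actually saw after the move.
        J = broyden_update(J, dq, (y_new[0] - y[0], y_new[1] - y[1]))
        y = y_new
        moves += 1
    return q, y, moves

target = (400.0, 300.0)
final_q, final_y, moves = servo(target)
final_error = math.hypot(target[0] - final_y[0], target[1] - final_y[1])
print(moves, final_error)
```

Note that the controller never computes where the target is in robot coordinates. It only looks at the remaining error in the image and keeps adjusting, which is the same "move left, move right, okay, grab it" loop described above.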

I have a couple of videos here. The two videos on the left, I admit, are extremely crude and it’s really just using a toy robot, but it’s actually demonstrating a really key concept. On the left hand side, the top video that I’m going to play first is a robot with a camera mounted on the robot and it’s trying to do a very simple task of threading a needle, taking a piece of thread to a needle.

Now as we as humans, we know how to do this. In other words, no human would take the needle and the thread, hold their arms out as far away from their body as possible and then try to thread that. No, what the human does is you usually put your elbows on the table.

On the bottom video, we’re actually looking at it from the outside, and what you see is every time the robot moves, the robot is vibrating like crazy. This is not a precise robot at all. As a matter of fact, its positioning error is far greater than the diameter of the eye of the needle, but with this type of control, we’re actually going to do something which is better than what the robot could do by itself, so it’s really an important ability.

Now I’m going to show a video here on the right of something that looks a little bit better. This is some agricultural work that we do and in this case, we’re trying to grasp a leaf. You still see the pattern on the leaf, because we’re not worried about the image processing at this point. The robot searches for the leaf and then once it finds the leaf, it goes into this Visual Servoing algorithm, where it very smoothly and effortlessly moves to the leaf, grasps it and then puts it into a bin for storage.

What we’re trying to show with this is that, while the example on the left is a very simple one, on the right hand side you see the robot moving very nicely, very smoothly, and all of this is without any prior knowledge. There is no knowledge of where the leaf is. I have no knowledge of exactly where the camera’s located. If the camera gets bumped or moved, it’s not a problem; the algorithm is able to adjust. I think that that’s a pretty interesting contribution.

This ability of being able to guide robots using vision is something which is really coming along very nicely. Right now, about 95% of the industrial robots that are sold have absolutely no vision associated with them.

That’s perfectly understandable considering the complexity and difficulty of automating the current tasks, but this new technology, one approach based on the CAD model and one based purely upon doing this hand/eye coordination, I think really is going to start revolutionizing some of the manufacturing. It will reduce the capital investment required to automate these tasks and it really will make things much easier to implement.

It’s a savings on the upfront cost, but there’s also going to be a savings on the software development side, because these algorithms will not require a PhD in computer science or robotics to run. They will be able to run much more out of the box and require very little tuning, which will be very interesting going forward.
