Many of the world’s great technologies realize their full potential only 50-100 years after invention. Consider the printing press. During the first century after the Gutenberg press was invented, books were printed in impressive quantities but lacked their current level of utility. Why? Simply because the pages lacked consistent numbers.

Only after printers slowly developed ways to reference and index the information in books could the already-invented press achieve its latent technological potential. The innovation latency here was about 100 years. By comparison, the move from the global internet to web crawlers and PageRank took closer to 10 years.

Two more examples: the steam engine’s best use was not pumping water with low-pressure vertical cylinders but, 100 years later, pulling trains with high-pressure horizontal cylinders. The liquid-fueled rocket engine was ready for a crew to trust on Apollo 7, but only now, nearly 60 years later, is reusing an engine 10 times transforming access to space. In both cases, technologists identified what was missing and what was holding the invention back, and then carried it the rest of the way.

The dictionary defines ‘latent’ as existing but not yet developed or manifest. Today’s best example of this latency is industrial automation, an admittedly less glamorous but essential part of the modern world. The contrast between modern software for automation and modern software for everything else suggests that we are slogging through the critical phase of innovation latency.

The stagnant days of factory automation

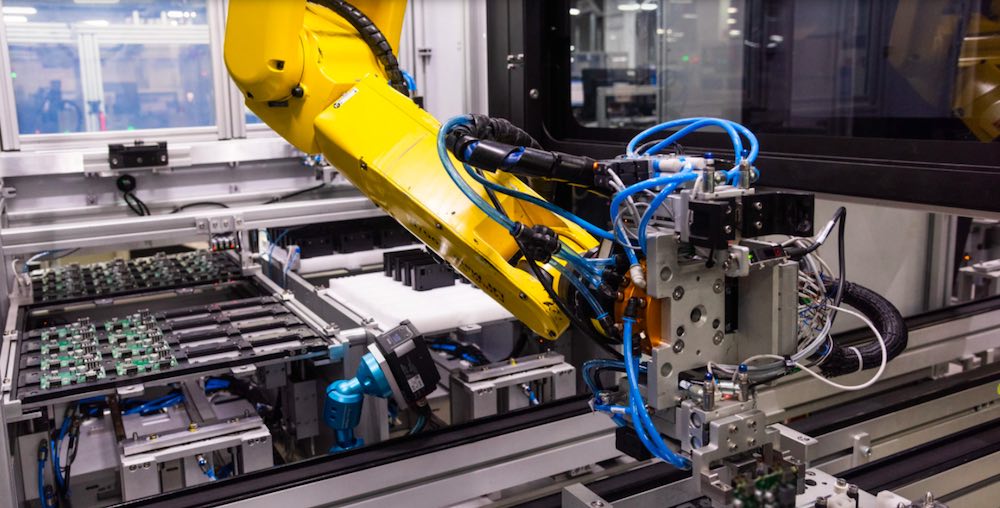

Smartphones, vaccine chillers, airplanes, and everything in between all require a great deal of assembly, done by people and robotic systems. Modern robot arms with six electrically actuated joints and logic controllers have been working in factories since the mid-1970s. This invention gave manufacturing engineers the power of repeatable, arbitrary motion: the ability to move anything in any way with all six degrees of freedom. After 50 years of progress, the price of such arms has decreased dramatically, and they run with minimal maintenance for tens of millions of cycles.

This combination of low purchase cost and longevity means that usage has expanded, but a manufacturing engineer today is largely in the same spot as one 50 years ago: able to move things very repeatably. Cost to configure is now the key metric, and repeatable motion alone is far from sufficient for the majority of assembly tasks needed today.

Why? What exactly is missing? What are the page numbers that the Gutenberg press of robotics is still waiting for? My colleagues and I see two areas where innovation is most needed: first, localized feedback from the process; second, efficient communication of intent.

Latency #1: Local feedback

Local feedback means reactive systems that use force, touch, vision, and other sensory input to perceive the actual environment and map it to a simulated one. For mobile robots, Boston Dynamics has long led the world in controls, even though its robots interact mostly with the ground. Bringing Spot-like, real-time reactivity to the varied terrain of the factory remains the largest opportunity in the industry.

We see steady progress in machine vision, but the advanced methods used in other domains, such as autonomous driving, are very slow to diffuse into the factory. The work of generalizing those methods has barely started: cars can detect bicycles at 100 meters in light fog, while most factory vision systems see only clean geometric edges under high-contrast lighting.

Factories do rely on some vision and depth cameras now, but ultimately force and touch feedback will be more valuable. People put things together primarily using these senses, and so will automation, but we’ll need to move the sensing closer to the process. For example, you can get some force signal from the motor joint currents in any arm, but if you move physically closer to the process (closer to the part being handled) with a load cell on the wrist or a sensor in a gripper jaw, you are also much closer to knowing what’s happening. Progress in tactile sensing has plateaued, even though every end effector on the planet, from those on simple box movers to surgical robots, would benefit from an appropriate synthetic thumb.
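To make the idea of local feedback concrete, here is a minimal Python sketch of a force-guarded move: the tool steps down until a sensed force crosses a threshold, then stops. Everything in it is hypothetical and simulated (the linear contact model, the 40 N friction figure, the function names); it is not any robot vendor's API. It compares a direct wrist load-cell reading with an indirect estimate from joint currents, in which unmodelled friction masks small contact forces.

```python
from typing import Callable, Optional


def simulated_wrist_force(z_mm: float, surface_mm: float = 10.0,
                          stiffness_n_per_mm: float = 50.0) -> float:
    """Hypothetical load cell at the wrist: zero force above the part
    surface, a linear spring force once the tool presses into it."""
    penetration = surface_mm - z_mm
    return stiffness_n_per_mm * penetration if penetration > 0 else 0.0


def simulated_joint_current_force(z_mm: float, friction_n: float = 40.0) -> float:
    """Hypothetical indirect estimate from motor currents: unmodelled
    joint friction hides the first friction_n newtons of contact force."""
    return max(0.0, simulated_wrist_force(z_mm) - friction_n)


def guarded_move(read_force: Callable[[float], float], z_start: float,
                 z_min: float, step: float, threshold_n: float) -> Optional[float]:
    """Step the tool down until the sensed force reaches threshold_n.

    Returns the height (mm) at which contact was detected, or None if
    z_min is reached without contact.
    """
    z = z_start
    while z >= z_min:
        if read_force(z) >= threshold_n:
            return z  # stop here: contact detected
        z -= step
    return None


if __name__ == "__main__":
    print("wrist load cell:", guarded_move(simulated_wrist_force, 20.0, 0.0, 0.5, 5.0))
    print("joint currents: ", guarded_move(simulated_joint_current_force, 20.0, 0.0, 0.5, 5.0))
```

In this toy model the wrist sensor reports contact at 9.5 mm, while the joint-current estimate trips only at 9.0 mm, after the part has been pressed with twice the force. Real controllers filter noisy signals and run much faster loops, but the pattern is the same: the closer the sensor sits to the process, the sooner the system knows what is happening.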

Latency #2: Communication of intent

Communication of intent means that any senior technician should be able to interact confidently with the factory environment. In most factories today, you won’t get much done without a core set of specialists present at the same time, including, at a minimum, a controls engineer, a systems engineer, a process engineer, and a mechanical engineer. This is because each piece of software is tied to the specific hardware it was written to run on, with almost no abstraction.

In every machine shop in the world, sharp spinning metal is plunging straight into a solid block of slightly softer metal. If you have done some machining yourself, you may recall that first day when you stood with slightly fogged glasses in front of the spindle and dispelled your disbelief by slowly turning a chattering knob. Your gut instinct that day was right: machining barely works. The process window in which you neither dull the mill nor warp the part is very small. What was once a careful craft became a stable routine because of the way a machinist can now communicate with the entire shop.

Machining is now done with CAM (computer-aided manufacturing), a happy synergy in which the machinist is shown the big picture and chooses the choreography while the software takes care of thousands of lines of G-code details. Why are we not here with robots? Why are people still routinely editing G-code-like files by hand? We routinely wield a carbide tip at 10,000 RPM in 6-DoF, but when it comes to the choreography of an assembly, we are out of luck. Why is the latency so prominent in automation but not in machining? The rise of cobots may point to one answer.
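As a rough illustration of what CAM takes off the machinist's plate, the Python sketch below expands one high-level intent ("face this rectangle to a given depth") into G-code moves. It is a toy, not any real CAM package: the function name and parameters are hypothetical, it uses only common G-code words (G0, G1, G21, G90, F), and a production toolpath would add tool-radius compensation, lead-ins, and the dialect of a specific controller.

```python
def facing_toolpath(width_mm: float, length_mm: float, stepover_mm: float,
                    depth_mm: float, feed_mm_min: float) -> list[str]:
    """Hypothetical CAM step: turn 'face this rectangle' into G-code lines.

    Zig-zag passes run along X; the tool steps over in +Y between passes.
    """
    lines = [
        "G21",               # units: millimetres
        "G90",               # absolute coordinates
        "G0 Z5.000",         # rapid to a safe height
        "G0 X0.000 Y0.000",  # rapid to the start corner
        f"G1 Z{-depth_mm:.3f} F{feed_mm_min:.0f}",  # feed down to cutting depth
    ]
    y = 0.0
    pass_index = 0
    eps = 1e-9  # tolerance for accumulated floating-point error in y
    while y <= length_mm + eps:
        # Alternate the cutting direction on each pass (zig-zag).
        x_end = width_mm if pass_index % 2 == 0 else 0.0
        lines.append(f"G1 X{x_end:.3f} Y{y:.3f}")
        y += stepover_mm
        pass_index += 1
        if y <= length_mm + eps:
            lines.append(f"G1 Y{y:.3f}")  # step over at the end of the pass
    lines.append("G0 Z5.000")  # retract when done
    return lines


if __name__ == "__main__":
    print("\n".join(facing_toolpath(50.0, 20.0, 5.0, 0.5, 600)))
```

For a 50 × 20 mm face with a 5 mm stepover, five parameters become 15 lines of G-code; the machinist edits the intent, not the lines.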

Cobots gained importance not just because they are friendlier when they bump into you but because they are friendlier when they need to be reprogrammed. Leaders like Universal Robots, and most major brands, have been experimenting with new ways for people to communicate intent, for example, by physically guiding the arm to positions instead of jogging it. As the speed, stiffness, and accuracy of cobots continue to increase, the domain of ‘communication friendliness’ also grows. An integrated platform extends this communication friendliness to any assembly system.

Assembly is not as linear as a (factory) line

Manufacturing lines become lines, a linear flow, not strictly because of the build order but because there is no maintainable way to handle the variability that is a natural part of any assembly. In a typical deployment, PLC logic written in structured text is compiled into an instruction list, while in parallel the moves of a digital twin robot are compiled into something like G-code. Change anything and you’ll need to recompile. There is no line-level data structure, and the tools have come to define the craft.

If the invention of industrial robotics is to realize its full potential, the software architectures and algorithms used in other domains must be mapped to the factory. This requires a commitment to long-term innovation that many in the industry, working within structurally low margins, cannot afford. Technology companies newly focused on industrial automation can complement the efforts of both OEMs and contract manufacturers (CMs) to push through the latency and move the automation frontier for the benefit of all.

About the author

Eric Johnson is the director of innovation at Bright Machines where he is focused on developing technologies for software-defined automation. With two decades of experience as a mechanical engineer, he’s worked on a range of challenges, including world-first products at Google and Mojo Vision. Johnson’s interest in using modern manufacturing technology to transform an industry began when he co-founded Skyline Solar, and it continues to drive his work today.
