Coke vs. Pepsi. Nike vs. Adidas. Great product innovation rarely comes without debate, even more so when the products or companies in question are closely aligned. As the discussion about the future of autonomous vehicle sensors evolves, the debate over competing technologies is growing more intense.

A recent stand-out came during Tesla’s Autonomy Day in April, where we heard broad claims about the industry. Among them was a key discussion point: LiDAR vs. radar.

How LiDAR works

Automotive news asks daily what LiDAR and radar are and, more importantly, what they do and what they mean for autonomous driving. Both are sensors, but they are very different technologies.

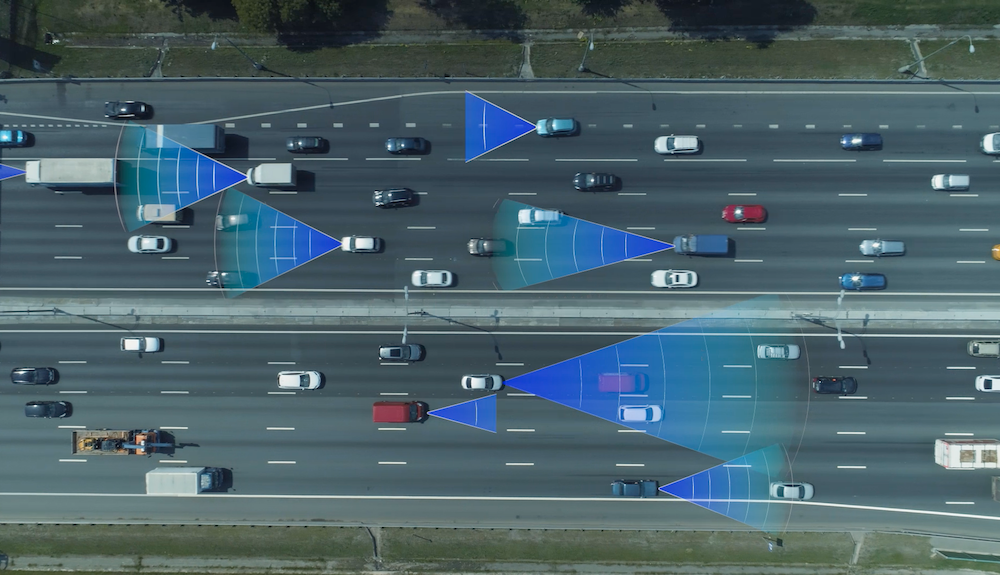

Currently, most autonomous vehicle sensor suites use two or three types of sensors: camera, radar and, in some (more expensive) cases, LiDAR. Several technologies are used because each has strengths and weaknesses, and the combinations complement one another. Used independently, no sensor is completely reliable.

A single autonomous vehicle can include upwards of a dozen cameras. Cameras have great resolution and can see details clearly, but they do not cope well with bad weather, and dependable operation in all conditions is vital for a majority of drivers and vehicles. The clarity that cameras offer is something both LiDAR and radar lack, but cameras alone are still not reliable enough.

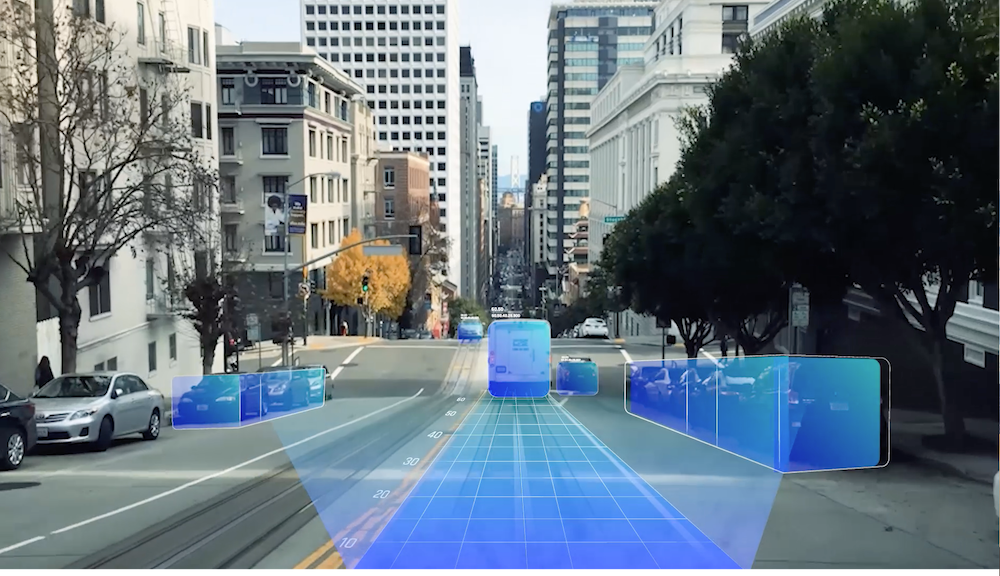

LiDAR emits rapid laser pulses that bounce back from the obstacles they encounter. The sensor measures how long each pulse takes to return and uses that time to determine the distance between itself and the obstacles ahead. Like a camera, LiDAR is limited by weather conditions, and its price has already proven to be an obstacle to creating an affordable mass-market product. LiDAR systems on the market cost from around $1,000 to upwards of $75,000 for the technology alone.
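
The time-of-flight calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the function name and the 400-nanosecond example value are hypothetical, and the sketch assumes the pulse travels at the vacuum speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def lidar_distance_m(round_trip_time_s: float) -> float:
    """Estimate the distance to an obstacle from a pulse's round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the obstacle and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# Hypothetical example: an echo that returns 400 nanoseconds after emission.
print(f"{lidar_distance_m(400e-9):.2f} m")  # prints "59.96 m"
```

The timescales involved are tiny: an obstacle roughly 60 meters away produces an echo in well under a microsecond, which hints at why the timing electronics matter so much.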


How radar works

The main differentiator of radar is that it uses radio waves instead of a laser to sense objects. This gives radar the ability to measure the velocities of surrounding objects directly, a critical advantage in the automotive environment. LiDAR systems would need to rely on very complex analysis to achieve the same outcome. In addition, radio waves lose less power than light waves as they travel through the air, meaning radar can work over longer distances. Radar has also been used for years in military applications, including airplanes and battleships.
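
Radar's direct velocity measurement comes from the Doppler effect: a moving object shifts the frequency of the reflected wave in proportion to its speed toward or away from the sensor. The sketch below shows that relationship under stated assumptions. The function name is hypothetical, the 77 GHz carrier is only an assumed default (a band commonly used by automotive radar), and the sign convention (positive shift means approaching) is a choice made for this example.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def radial_velocity_m_per_s(doppler_shift_hz: float,
                            carrier_hz: float = 77e9) -> float:
    """Radial velocity of a target from the Doppler shift of its echo.

    Assumed convention: a positive shift means the target is approaching.
    """
    if carrier_hz <= 0:
        raise ValueError("carrier frequency must be positive")
    # The wave is shifted once on the way out and once on the way back,
    # hence the factor of 2 in the denominator.
    return doppler_shift_hz * SPEED_OF_LIGHT_M_PER_S / (2.0 * carrier_hz)


# Hypothetical example: a 5 kHz shift measured at the assumed 77 GHz carrier.
print(f"{radial_velocity_m_per_s(5_000.0):.2f} m/s")
```

Only the component of motion along the line of sight shows up this way; an object crossing directly in front of the sensor produces little or no shift.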

Radar maintains functionality across all weather and lighting conditions. However, the technology has traditionally been limited by low resolution, a disadvantage that made radar susceptible to false alarms and unable to identify stationary objects. Until now, that is. In recent years, the technology has evolved to offer high-resolution capabilities.

Arbe has overcome these resolution limitations by developing a radar with ultra-high-resolution capabilities that senses the environment in four dimensions:

- Distance

- Horizontal positioning (azimuth)

- Vertical positioning (elevation)

- Velocity

This feature could reposition radar from a supportive role to the backbone of the sensor suite in autonomous vehicles.
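
One way to picture a four-dimensional detection is as a small record holding distance, the two angles and velocity, which can then be converted into a position in space. The sketch below is purely illustrative: the class, field names and axis convention (x forward, y left, z up) are assumptions made for this example and do not describe Arbe's data format.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RadarDetection:
    """Hypothetical 4D detection: distance, two angles and radial velocity."""
    range_m: float
    azimuth_rad: float      # horizontal angle, 0 = straight ahead
    elevation_rad: float    # vertical angle, 0 = level with the sensor
    radial_velocity_m_per_s: float

    def to_cartesian(self) -> tuple[float, float, float]:
        """Convert the spherical measurement to (x, y, z) in meters."""
        # Project the range onto the horizontal plane first.
        horizontal = self.range_m * math.cos(self.elevation_rad)
        x = horizontal * math.cos(self.azimuth_rad)      # forward
        y = horizontal * math.sin(self.azimuth_rad)      # left
        z = self.range_m * math.sin(self.elevation_rad)  # up
        return x, y, z


# Hypothetical example: an object 50 m away, slightly left and above.
detection = RadarDetection(50.0, math.radians(10), math.radians(2), -4.0)
print(detection.to_cartesian())
```

Without the elevation angle, a bridge overhead and a stalled car beneath it would share the same distance and horizontal angle, which is one reason the vertical dimension matters.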

Targeting market needs

As the autonomous vehicle industry continues to evolve, and conversations continue to develop, sensor companies are paving the way for the revolution of vehicle autonomy and helping to redefine road safety. Self-driving cars are no longer just a vision for the future; they are already shaping the automotive industry and could soon arrive on our roadways.

To make those visions reality, however, we have to be realistic. Through a combination of high-resolution radars and cameras, autonomous driving can be achieved cost-effectively, offering a safe and reliable solution.

About the Author

Kobi Marenko is the Co-founder and CEO of Arbe, a leading company in the radar revolution that will make autonomous driving safe and affordable.

Arbe’s Phoenix radar demonstrates ultra-high-resolution 4D imaging radar. Phoenix tracks and separates objects in azimuth, elevation and velocity, applying post-processing and SLAM simultaneously.
