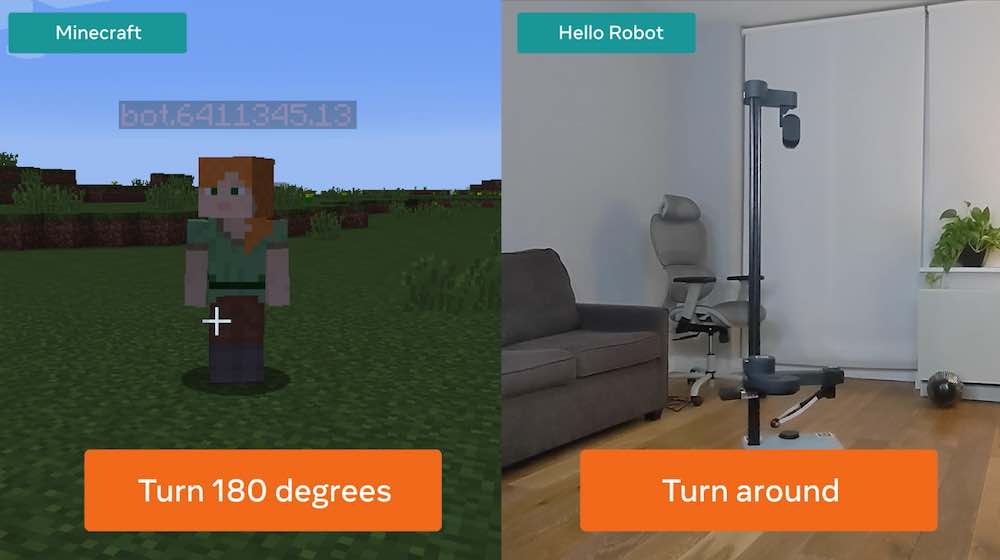

Droidlet lets researchers build robots that accomplish tasks in the real world (right) or simulated environments. | Credit: Facebook

To help researchers build more intelligent robots, Facebook has created and open-sourced a robotics development platform called Droidlet. Facebook researchers wrote in a blog post today that Droidlet is both a modular, heterogeneous embodied agent architecture and a platform for building embodied agents, sitting at the intersection of natural language processing, computer vision, and robotics.

Facebook claims Droidlet simplifies integrating a range of machine learning algorithms into robots to facilitate rapid prototyping. Droidlet users can test different computer vision algorithms with their robot or replace one natural language understanding model with another. Facebook said Droidlet enables researchers to build agents that accomplish tasks either in the real world or in simulated environments like Minecraft or Habitat.
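To illustrate what swapping one vision algorithm for another can look like in a modular design, here is a minimal Python sketch. It is not Droidlet's API: `Detector`, `BrightnessDetector`, `ConstantDetector`, and `Robot` are hypothetical names invented for this example, and the "models" are toy stand-ins.

```python
from __future__ import annotations

from typing import Protocol


class Detector(Protocol):
    """Hypothetical interface: any vision model that labels an image."""

    def detect(self, image: list[list[int]]) -> list[str]: ...


class BrightnessDetector:
    """Toy stand-in for one vision model: labels by mean pixel value."""

    def detect(self, image: list[list[int]]) -> list[str]:
        pixel_count = sum(len(row) for row in image)
        mean = sum(sum(row) for row in image) / pixel_count
        return ["bright_object"] if mean > 127 else ["dark_object"]


class ConstantDetector:
    """Toy stand-in for a second vision model: always the same label."""

    def detect(self, image: list[list[int]]) -> list[str]:
        return ["unknown_object"]


class Robot:
    """Hypothetical agent that depends only on the Detector interface."""

    def __init__(self, detector: Detector) -> None:
        self.detector = detector  # the only place the model is chosen

    def look(self, image: list[list[int]]) -> list[str]:
        return self.detector.detect(image)


image = [[200, 220], [210, 190]]
print(Robot(BrightnessDetector()).look(image))
print(Robot(ConstantDetector()).look(image))
```

Because `Robot` only calls `detect`, trying a different model means changing one constructor argument rather than rewriting the agent.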

“There is much more work to do – both in AI and in hardware engineering – before we will have robots that are even close to what we imagine in books, movies, and TV shows,” Facebook researchers wrote. “But with Droidlet, robotics researchers can now take advantage of the significant recent progress across the field of AI and build machines that can effectively respond to complex spoken commands like ‘pick up the blue tube next to the fuzzy chair that Bob is sitting in.’”

Rather than treating an agent as a monolith, Facebook said it considers a robot to be a collection of components, some heuristic and some learned. The company said this heterogeneous design makes scaling tractable because each component can be trained on large datasets when such data is available for it. The components can be trained with static data or with dynamic data.

Facebook said the high-level agent design consists of these components:

- A memory system acting as a nexus of information for all agent modules

- A set of perceptual modules (such as object detection or pose estimation) that process information from the outside world and store it in memory

- A set of lower-level tasks, such as “move three feet forward” and “place item in hand at given coordinates”

- A controller that decides which tasks to execute based on the state of the memory system
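The four parts listed above can be sketched as a single agent step in Python. This is an illustrative outline under stated assumptions, not Droidlet's actual code: `Memory`, `perceive_objects`, `move_forward`, `pick_up`, `controller`, and `step` are hypothetical names, and tasks simply return a description of the action instead of driving hardware.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """Shared store that every module reads from and writes to."""

    facts: dict = field(default_factory=dict)


def perceive_objects(observation: dict, memory: Memory) -> None:
    """Perceptual module: record what was seen in memory."""
    memory.facts["visible_objects"] = observation.get("objects", [])


def move_forward(memory: Memory) -> str:
    """Lower-level task: a fixed motion primitive."""
    return "move three feet forward"


def pick_up(memory: Memory) -> str:
    """Lower-level task: act on the first object recorded in memory."""
    target = memory.facts["visible_objects"][0]
    return f"pick up {target}"


def controller(memory: Memory):
    """Controller: choose a task from the current state of memory."""
    return pick_up if memory.facts.get("visible_objects") else move_forward


def step(observation: dict, memory: Memory, perception_modules: list) -> str:
    """One agent step: perceive, store in memory, then choose and run a task."""
    for module in perception_modules:
        module(observation, memory)
    task = controller(memory)
    return task(memory)


memory = Memory()
print(step({"objects": []}, memory, [perceive_objects]))
print(step({"objects": ["blue tube"]}, memory, [perceive_objects]))
```

In this sketch the modules never call each other directly. Perception writes to memory and the controller reads from it, which is the sense in which memory acts as a nexus.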

Droidlet comes with an interactive dashboard that includes debugging and visualization tools, as well as an interface for correcting agent errors on the fly or for crowdsourced annotation. Facebook added that the Droidlet platform will become more powerful over time as it adds more tasks, sensory modalities, and hardware setups, and as other researchers and hobbyists build and contribute their own models.

“The path is long to building robots with capabilities that approach those of people, but we believe that by sharing our research work with the AI community, all of us will get there faster.”

You can read more about Droidlet here or check out the platform’s GitHub page.
