It has been over fifty years since Shakey the robot started autonomously navigating around its environment to the tune of Take Five. Yet to this day, getting machines to move around without hitting obstacles remains a major area of research and development, from the major tech companies developing autonomous vehicles to hobbyists trying to drive their creations around their homes.

Through various client projects, PickNik has identified eight key areas that can affect the overall difficulty of autonomous navigation:

- Mode of mobility

- Agility

- Robot shape

- Planning space

- Obstacles

- Localization

- Number of agents

- Speed

Mode of mobility: walk and/or roll

Our cars don’t fall over when we turn them off, but I fall over if I fall asleep standing up. This is one of many reasons why wheels are generally preferred over legs as the mode of locomotion in robotics. It is much simpler to command two motors to rotate at a particular velocity than it is to work through the complex kinematics of choosing where to step next while also maintaining balance. That’s not to say that there haven’t been incredible advancements in legged systems in the past few years, but legs do make the problem harder. On the flip side, it’s much easier for me to go up stairs than it is for a Roomba.

Agility: go where you wanna go

The best athletes can move in any direction almost instantaneously. They can dodge an opponent, do a quick side step and turn around nearly effortlessly. For robots, autonomous navigation is easiest when there aren’t restrictions on which direction they can move at any particular time (aka holonomic robots). Willow Garage’s PR2 was nearly holonomic: it could move in any direction, but it first had to rotate its casters internally.

More common, however, are differential drive robots, with two powered wheels, allowing the robot to move forward, backward and turn in place. Such a robot is not holonomic because it cannot move directly sideways. The restricted movement model slows down some maneuvers, but still gives enough flexibility to handle many situations. Car-like steering systems introduce an additional layer of complexity because cars can’t turn in place, which is why parallel parking is such a pain and why three point turns were invented. Legs, on the other hand, can provide a lot of additional agility, although that freedom to move also comes with a lot more ways to fall over.
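To make the differential drive constraint concrete, here is a minimal sketch of the standard kinematic model. The function names and parameters (`wheel_separation`, `wheel_radius`) are illustrative assumptions, not taken from any particular robot or library. Note that the command has only a forward speed and a turn rate; there is no input for sideways motion, which is exactly why the robot is not holonomic.

```python
import math


def diff_drive_wheel_speeds(linear, angular, wheel_separation, wheel_radius):
    """Convert a body velocity command into (left, right) wheel speeds in rad/s.

    linear: forward speed in m/s.
    angular: turn rate in rad/s, counterclockwise positive.
    """
    v_left = linear - angular * wheel_separation / 2.0
    v_right = linear + angular * wheel_separation / 2.0
    return v_left / wheel_radius, v_right / wheel_radius


def integrate_pose(x, y, theta, linear, angular, dt):
    """Advance a planar pose by one small time step (simple Euler integration).

    The robot can only translate along its current heading, never sideways.
    """
    x += linear * math.cos(theta) * dt
    y += linear * math.sin(theta) * dt
    theta += angular * dt
    return x, y, theta
```

Turning in place is the case `linear = 0`: the two wheels spin at equal speed in opposite directions. A car-like model would additionally tie the turn rate to the forward speed, which removes that option.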

Robot shape: the shape of you

The size and shape of the robot also affect how hard autonomous navigation is. Circular robots are common because they can rotate in place and be guaranteed not to hit anything. The same cannot be said for other shapes. A square robot directly next to an obstacle can’t turn. There can also be situations where robots can fit through doorways when at one orientation, but not fit through when turned another way.

One helpful rule of thumb for autonomous navigation in environments designed for humans is that most doors in the United States are designed to be ADA compliant, which means a clear opening of at least 32 inches (81 cm). A robot wider than that can run into problems. ADA compliance also means wheeled robots won’t be stymied by stairs blocking their navigation at every turn.
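The shape effects described above can be sketched with a little geometry. This is an illustrative example only: it assumes a rectangular footprint that rotates about its center and a thin doorway, and the function names are hypothetical. The default door width of 0.81 m is the 32 inch figure mentioned above.

```python
import math


def rotation_clearance_radius(length, width):
    """Radius of the circle swept by a rectangle rotating about its center."""
    return math.hypot(length, width) / 2.0


def can_rotate_in_place(length, width, distance_to_obstacle):
    """True if the nearest obstacle, measured from the robot's center,
    lies outside the circle swept by the footprint's corners."""
    return distance_to_obstacle > rotation_clearance_radius(length, width)


def projected_width(length, width, heading):
    """Extent of the footprint measured across the doorway.

    heading is in radians; 0 means the robot's long axis points
    straight through the door.
    """
    return abs(length * math.sin(heading)) + abs(width * math.cos(heading))


def fits_through_door(length, width, heading, door_width=0.81):
    """True if the footprint, held at this heading, is narrower than the door."""
    return projected_width(length, width, heading) < door_width
```

For a hypothetical 1.0 m by 0.6 m robot, `fits_through_door(1.0, 0.6, 0.0)` is true while `fits_through_door(1.0, 0.6, math.pi / 2)` is false. A circular robot avoids both checks because its swept radius and projected width never change with heading.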

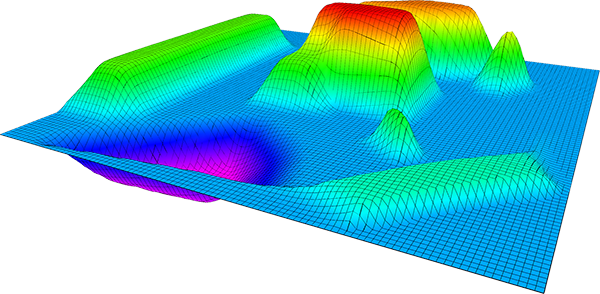

Autonomous navigation planning space: flatland

Most robots operate on the two dimensional plane, where the position can be described using an x and a y coordinate and an orientation. This is sufficient for many interior places like warehouses or schools, and lends itself to very efficient representations of the environment. However, the world is not flat. A flat representation cannot represent all the places wheeled robots can drive, like a multistory parking garage.

Furthermore, not all robots operate indoors on a flat floor. Operating outside often requires knowing elevation as well; the shortest path may go over a mountain while the easiest path goes around it. Certain applications, namely aerial drones, also need a third dimension for a z coordinate, and need to track the robot’s orientation with a more complex representation, like roll, pitch, and yaw.
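As a rough illustration of the difference between the two planning spaces, the sketch below defines a planar pose and a full 3D pose. The class names and the `flatten` helper are hypothetical and not tied to any specific framework. Flattening a 3D pose throws away height, roll, and pitch, which is why two levels of a parking garage become indistinguishable in a flat representation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: position (x, y) in meters and heading theta in radians."""
    x: float
    y: float
    theta: float


@dataclass(frozen=True)
class Pose3D:
    """Full pose: position (x, y, z) plus roll, pitch, yaw in radians."""
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float

    def flatten(self) -> Pose2D:
        """Project onto the ground plane, discarding z, roll, and pitch."""
        return Pose2D(self.x, self.y, self.yaw)


# Two parking spots directly above one another (3 m apart in height).
level_one = Pose3D(5.0, 2.0, 0.0, 0.0, 0.0, 1.57)
level_two = Pose3D(5.0, 2.0, 3.0, 0.0, 0.0, 1.57)
```

Here `level_one` and `level_two` are different poses, yet `level_one.flatten() == level_two.flatten()`. The planar form has three values to plan over instead of six, which is where its efficiency comes from.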

Obstacles: avoidance mechanisms for autonomous navigation

The easiest way to navigate is in a featureless plane, which is why people often learn to drive in empty parking lots. However, most mobile robots need to avoid the obstacles around them, if for no other reason than roboticists hate getting their shins bumped all the time.

This is relatively simple if the robot knows its entire environment beforehand, but the introduction of dynamic obstacles means the robot will need a sensor suite to detect whatever obstacles are thrown at it. The standard for many years was to use a planar laser scanner, but that only worked to avoid obstacles that were at the exact height of the laser. The real world, as it turns out, has more than two dimensions. Thus, robots that relied on laser scanners could avoid table legs, but would often hit the table top, or run over small obstacles like toes.
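The blind spot of a planar laser scanner can be shown with a toy model. This sketch assumes every obstacle is directly in the robot's path and is described only by the vertical range it occupies; the heights and names below are made up for illustration. The laser sees an obstacle only if the scan plane passes through it, while the robot's body hits anything that overlaps its own height.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    name: str
    z_min: float  # bottom of the obstacle, meters above the floor
    z_max: float  # top of the obstacle, meters above the floor


def seen_by_planar_laser(obstacle, laser_height):
    """A planar scanner only detects what crosses its single scan plane."""
    return obstacle.z_min <= laser_height <= obstacle.z_max


def collides_with_body(obstacle, robot_height):
    """The robot body occupies everything from the floor up to robot_height."""
    return obstacle.z_min < robot_height and obstacle.z_max > 0.0


def missed_hazards(obstacles, laser_height, robot_height):
    """Names of obstacles the robot would hit but its laser cannot see."""
    return [
        o.name
        for o in obstacles
        if collides_with_body(o, robot_height)
        and not seen_by_planar_laser(o, laser_height)
    ]


scene = [
    Obstacle("table leg", 0.0, 0.70),
    Obstacle("table top", 0.70, 0.75),
    Obstacle("toes", 0.0, 0.05),
]
missed = missed_hazards(scene, laser_height=0.30, robot_height=1.0)
```

With the laser mounted at 0.30 m on a 1.0 m tall robot, the table leg is detected, but the table top and the toes both end up in `missed`.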

Some applications use standard RGB cameras, but since 2010 and the introduction of the Microsoft Kinect, three-dimensional sensors have become fairly standard for robots. They recognize many more obstacles with high accuracy, but come with their own calibration complexities and limitations. Sensing an obstacle is also only the first step, after which the robot may need to recognize it, predict where it’s going and plan around it.

Localization: a maze of twisty little passages, all alike

While having no obstacles around the robot makes it easier to not hit anything, it makes it harder to know precisely where you are. Even adding GPS will only give your position within a few meters. Instead, most robotic systems rely on a system of localization based on existing static obstacles like walls. This can be easier when a map of the environment is known beforehand; otherwise, you have to do simultaneous localization and mapping, which is a complex field unto itself.

However, even when you know the map fully, localization can still be difficult, depending on the accuracy of the sensors and how distinctive the environment is. A robot that must navigate through an environment full of visually similar aisles, for example, can have trouble telling one aisle from another.

Number of agents: multiplayer autonomous navigation

Autonomous navigation of one robot can be hard enough. You could also make a system of multiple robots navigating around where each robot pretends it is the only robot. However, there are many benefits to getting a fleet of robots to communicate with each other and coordinate their motion.

Where it gets really fun is when the other agents that the robot must navigate around are people. The difficulty of that can vary, depending on whether the people are trained to be around robots, or they’re unsuspecting customers who’ve never seen a robot before.

Speed: of the essence

The last component to touch on that can make autonomous navigation significantly harder is speed. The difficulty is a function not only of robot speed (Shakey goes a bit slower than Tesla’s “Autopilot”), but also of the computation available. It’s one thing if you’re trying to drive an autonomous vehicle with the full force of a Fortune 500 cloud infrastructure behind you; it’s another if you’re trying to run the whole thing on an Arduino board.

Moving at greater speeds without adequate processing power can lead to one of the least desirable outcomes in navigation: collisions. In the name of safety, it is often better to execute slower moves that you can guarantee will not collide with obstacles than to move faster on a trajectory that might collide with something.
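One simple way to reason about this trade-off is a stopping-distance bound. The sketch below is an assumption-laden model, not a safety standard: it supposes the robot keeps moving at constant speed for `reaction_time` seconds while it senses and computes, then brakes at a constant `max_decel`. All names are illustrative. The robot is safe if that total distance stays within how far it can sense.

```python
import math


def stopping_distance(speed, reaction_time, max_decel):
    """Distance covered while reacting, plus distance covered while braking."""
    return speed * reaction_time + speed**2 / (2.0 * max_decel)


def max_safe_speed(sensing_range, reaction_time, max_decel):
    """Largest speed whose stopping distance still fits in the sensing range.

    Solves v * t + v**2 / (2 * a) = d for v, taking the positive root.
    """
    a_t = max_decel * reaction_time
    return -a_t + math.sqrt(a_t**2 + 2.0 * max_decel * sensing_range)


# Same robot and sensor, but two different amounts of onboard compute.
fast_computer = max_safe_speed(sensing_range=4.0, reaction_time=0.1, max_decel=1.0)
slow_computer = max_safe_speed(sensing_range=4.0, reaction_time=1.0, max_decel=1.0)
```

Under these assumptions, a longer `reaction_time` (slower processing) always yields a lower safe speed, so `slow_computer` is smaller than `fast_computer` even though the hardware is otherwise identical.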

The eight degrees listed above represent some broad ways in which robot navigation can be difficult. As always, the devil is in the details. The specific context in which your robot operates will present unique challenges that will require custom domain-specific solutions. At PickNik, we’ve expanded our navigation capabilities to provide services that fit your needs.

About the Author

David Lu, PhD, is a senior navigation roboticist at PickNik. He received his PhD in Computer Science at Washington University in St. Louis focusing on contextualized robot navigation, and a Computer Science B.S. at University of Rochester.

Lu has been a leading member of the ROS community for over a decade, including maintaining the core ROS navigation stack and co-chairing ROSCon 2019. He was a key developer of the navigation capabilities of Locus Robotics’ fleets of warehouse robots and Bossa Nova’s shelf-scanning robots, and has also collaborated with Willow Garage, Walt Disney Imagineering R&D, SWRI and NASA.
