
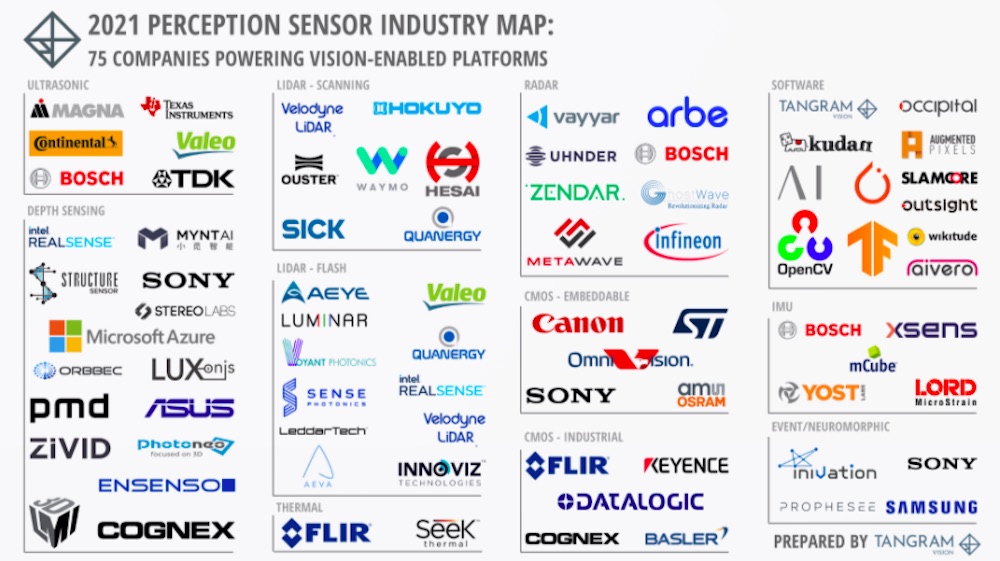

Perception sensor industry market map. Credit: Tangram Vision

Over the past decade, the perception industry has exploded with new companies, new technologies, and the deployment of millions of sensors in industries ranging from the traditional (automobiles) to the cutting-edge (space travel).

While incumbents have locked up majority market shares in some segments, other segments have seen the rise of dominant new players that have become suppliers to some of the world’s largest companies.

Yet it’s clear that it’s still early days for perception. New sensing modalities and innovative software platforms are changing what perception makes possible, opening up opportunities for even more new companies to challenge incumbents and other startups alike.

Tangram Vision’s intention with this industry map is to paint an easily digestible picture of where the industry stands today. It shows the primary players in the key perception sensing modalities, as well as the software companies that provide the infrastructure and libraries that are most commonly used to build perception-powered products.

Ultrasonic

Much like radar, ultrasonic sensors send out a signal and time how long it takes for the signal to return after reflecting off a nearby surface. Unlike radar, however, ultrasonic sensors use narrowly focused sound waves (typically between 30 kHz and 240 kHz) rather than radio waves. They are mainly used for high-fidelity, short-range sensing and are often found in automotive applications such as advanced driver assistance systems (ADAS) and parking aids.
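The ranging principle described above reduces to simple arithmetic: the pulse travels to the surface and back, so the distance is half the round-trip time multiplied by the speed of sound. The sketch below assumes dry air and uses the common linear approximation for the speed of sound as a function of temperature (about 343 m/s at 20 °C). Echo detection, noise, and crosstalk between sensors are not modeled, and the function names are illustrative rather than taken from any vendor's API.

```python
def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s) at temp_c degrees Celsius.

    Uses the common linear approximation 331.3 + 0.606 * T, which is
    reasonable near room temperature (an assumption for this sketch).
    """
    return 331.3 + 0.606 * temp_c


def echo_to_distance(round_trip_s: float, temp_c: float = 20.0) -> float:
    """Convert an ultrasonic echo's round-trip time (s) to a one-way distance (m).

    The pulse travels out to the surface and back, so the distance to the
    surface is half of (speed of sound x round-trip time).
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return speed_of_sound(temp_c) * round_trip_s / 2.0


if __name__ == "__main__":
    # A 10 ms echo at 20 °C corresponds to roughly 1.72 m.
    print(f"{echo_to_distance(0.010):.3f} m")
```

Temperature matters here: at 0 °C the same 10 ms echo maps to about 1.66 m, which is why some ultrasonic systems compensate for air temperature.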

The ultrasonic sensing landscape is dominated by incumbents from the automotive world. The companies listed in the industry map are:

Depth

Depth sensors are used to capture 2.5D and 3D imagery. There are many different modalities within depth sensing, including structured light, time-of-flight, stereo, and active stereo sensors. In addition, depth sensing can be found across a range of applications from consumer to industrial.
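A depth image is "2.5D" because it stores one distance per pixel from a single viewpoint; turning it into true 3D points requires the camera's intrinsic parameters. The sketch below assumes an ideal pinhole camera with no lens distortion and depth reported as distance along the optical axis, in metres. The parameter names (fx, fy, cx, cy) follow common convention, and the code is a generic illustration, not any listed vendor's SDK.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point3 = Tuple[float, float, float]


@dataclass(frozen=True)
class PinholeIntrinsics:
    fx: float  # focal length along x, in pixels
    fy: float  # focal length along y, in pixels
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def deproject(u: float, v: float, depth_m: float, k: PinholeIntrinsics) -> Point3:
    """Back-project pixel (u, v) with z-depth depth_m into camera coordinates (m)."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def depth_image_to_points(depth: List[List[float]], k: PinholeIntrinsics) -> List[Point3]:
    """Turn a 2.5D depth image (rows of depths in metres) into a list of 3D points.

    Pixels with depth <= 0 are treated as "no measurement" and skipped;
    this convention is an assumption of the sketch.
    """
    points: List[Point3] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z > 0:
                points.append(deproject(u, v, z, k))
    return points
```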

The depth sensing landscape includes large incumbent players, but has also seen a significant amount of startup activity over the past decade. The companies represented on the industry map are:

Consumer

- Intel RealSense

- Structure Sensor by Occipital

- Mynt

- StereoLabs

- Sony (formerly SoftKinetic)

- Microsoft Azure Kinect

- Orbbec

- Luxonis

- pmd

- ASUS

Industrial

LiDAR

LiDAR (light detection and ranging) works similarly to ultrasonic and radar sensing: it sends out a signal and times how long it takes for the signal to reflect off a nearby object or surface and return to the sensor. Unlike sound-based ultrasonic and radio-wave-based radar, however, LiDAR uses light. Perhaps no other perception segment has seen as much new company formation and startup activity as LiDAR over the past decade. The advent of the autonomous vehicle and drone markets drove rapid market and technology development, turning some startups into incumbents (Velodyne and Ouster, for instance).

In the industry map, we consider the two primary modalities of LiDAR: scanning and flash. Scanning LiDAR units utilize a laser emitter and receiver spinning rapidly to capture a 360° view of what is around the sensor. Newer developments in scanning LiDAR also use MEMS mirrors or solid-state beam steering to achieve similar results with less mechanical complexity. Flash LiDAR units use a fixed laser emitter and receiver with a wide field of view to capture large amounts of data with no need for mechanical parts.
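Because LiDAR times light rather than sound, its timing electronics must resolve fractions of a nanosecond. For a scanning unit, each return also comes with the beam's angles at the moment it fired, which together with the range place the point in 3D. The sketch below assumes ideal timing and a simple spherical-to-Cartesian convention (x forward, y left, z up); real sensors define their own frames and apply per-beam calibration, which is not modeled here.

```python
import math
from typing import Tuple

SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def tof_to_range(round_trip_s: float) -> float:
    """Round-trip time of a laser pulse (s) -> one-way range (m)."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def polar_to_cartesian(range_m: float, azimuth_deg: float,
                       elevation_deg: float) -> Tuple[float, float, float]:
    """Convert one return into sensor-frame x, y, z (m).

    Assumed frame: x forward, y left, z up; azimuth measured counter-clockwise
    from +x in the horizontal plane, elevation measured up from that plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the x-y plane
    return (horizontal * math.cos(az),
            horizontal * math.sin(az),
            range_m * math.sin(el))


if __name__ == "__main__":
    # A return after about 66.7 ns is roughly 10 m away.
    r = tof_to_range(66.7e-9)
    print(f"range: {r:.2f} m")
    # Each nanosecond of timing error is about 15 cm of range error.
    print(f"1 ns -> {tof_to_range(1e-9) * 100:.1f} cm")
    print(polar_to_cartesian(r, azimuth_deg=90.0, elevation_deg=0.0))
```

A spinning unit repeats this conversion for every return as the azimuth sweeps through 360°, which is how a full point cloud is assembled.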

The rapid growth of the LiDAR market tracks the ever-growing interest in autonomous vehicles and mobile robots. However, slower-than-expected development of the AV and robot markets has rippled into the LiDAR industry, resulting in many startup failures and extensive M&A activity. The survivors of this first wave of failures and consolidation are becoming the dominant incumbents in scanning LiDAR. A new wave of flash LiDAR companies is emerging as well, many focused on ADAS applications in pursuit of large automotive contracts. In response, many scanning LiDAR incumbents are launching their own flash LiDAR units.

Scanning

Flash

- AEye

- Luminar

- Velodyne Lidar

- Voyant Photonics

- Quanergy

- Sense Photonics

- Valeo

- Intel RealSense

- LeddarTech

- Aeva

- Innoviz

Thermal

Perception systems often rely heavily on visual data; however, visual data isn’t always available (for instance, in fog or in unlit environments). Thermal cameras can provide sensory information when visual sources fail.

Radar

Like thermal imaging, radar can provide sensory data when visual sources fail. Consider an autonomous vehicle that relies on LiDAR and cameras in dense fog: because the fog occludes visual references, that AV won’t be able to operate. With radar, however, it could. Radar has been deployed effectively in automotive settings since Daimler-Benz first launched its Distronic adaptive cruise control system in 1999.

CMOS

The most ubiquitous perception sensing modality is the CMOS camera. CMOS cameras use the same imaging technology found in mobile phones, digital cameras, webcams, and most other camera-equipped devices. They come in a huge diversity of configurations, spanning resolution, dynamic range, color filtering, field of view, and more.

In perception applications, CMOS cameras can be used on their own, with each other, or with other sensing modalities to enable advanced capabilities. For instance, a single CMOS camera can be paired with a machine learning library to perform classification tasks for a bin-picking robot. Two CMOS cameras can be used in a stereo configuration to provide depth sensing for a robot, or even for an adaptive cruise control system on an AV. CMOS cameras are also commonly paired with IMUs to enable visual-inertial navigation for robotic platforms. As a result, perception systems almost always include CMOS cameras.
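A stereo pair recovers depth by triangulation: a feature that appears shifted by d pixels between two rectified images lies at depth Z = f·B/d, where f is the focal length in pixels and B is the baseline between the two cameras. The sketch below assumes already-rectified images and a disparity that has already been matched (finding that correspondence is the hard part in practice and is not shown). The focal length and baseline in the example are illustrative values, not those of any specific camera.

```python
def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair.

    disparity_px: horizontal pixel offset of the same feature between the
                  left and right images.
    focal_px:     focal length in pixels (assumed equal for both cameras).
    baseline_m:   distance between the two camera centres in metres.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means 'at infinity')")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty(depth_m: float, focal_px: float, baseline_m: float,
                      disparity_err_px: float = 1.0) -> float:
    """First-order depth error from a disparity error: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


if __name__ == "__main__":
    f, b = 700.0, 0.12  # illustrative intrinsics, not from any specific camera
    for d in (70.0, 35.0, 7.0):
        z = disparity_to_depth(d, f, b)
        print(f"disparity {d:5.1f} px -> depth {z:5.2f} m, "
              f"+/- {depth_uncertainty(z, f, b):.3f} m")
```

With these numbers, halving the disparity doubles the depth while the error estimate quadruples, which is why stereo accuracy falls off quickly with range and why longer baselines are used for longer-range applications.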

Depending on system design, CMOS cameras can be directly embedded into a device, or externally attached. The former is common for mobile robots and autonomous vehicles, while the latter is frequently found with machine vision applications for industrial robotics.

While there are hundreds of CMOS component and module vendors, we have chosen to include the most significant participants in the perception sensing world.

Embeddable

Industrial

IMU

IMUs (inertial measurement units), another non-visual sensing modality, sense motion: accelerometers measure linear acceleration and gyroscopes measure angular rate. Taken alone, these measurements only describe relative movement, and position estimates derived from them drift over time as integration errors accumulate. In concert with a visual sensor like a CMOS camera or depth sensor, however, a visual-inertial sensor array can provide highly accurate location and movement data.
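To see why an IMU on its own drifts, consider dead reckoning along a single axis: position comes from integrating acceleration twice, so even a small constant sensor bias grows into a position error proportional to the square of elapsed time (roughly ½·b·t²). The sketch below is a deliberately simplified 1-D model that ignores gravity, orientation, and noise; the 0.01 m/s² bias is a hypothetical value, not a specification of any particular IMU.

```python
from typing import Iterable, List


def integrate_accel(accels: Iterable[float], dt: float,
                    v0: float = 0.0, p0: float = 0.0) -> List[float]:
    """Dead-reckon 1-D position by integrating acceleration twice.

    Uses semi-implicit Euler: update velocity first, then position.
    Gravity removal and orientation tracking are ignored (1-D sketch).
    """
    v, p = v0, p0
    positions = []
    for a in accels:
        v += a * dt
        p += v * dt
        positions.append(p)
    return positions


if __name__ == "__main__":
    rate_hz = 100
    duration_s = 60
    bias = 0.01  # m/s^2, hypothetical constant accelerometer bias
    # The sensor is actually stationary and should read 0; it reads the bias instead.
    readings = [bias] * (rate_hz * duration_s)
    drift = integrate_accel(readings, dt=1.0 / rate_hz)[-1]
    print(f"apparent displacement after {duration_s} s: {drift:.1f} m")
```

In this toy case the stationary sensor appears to move about 18 m in one minute. Visual-inertial systems counter this kind of drift by repeatedly correcting the inertial estimate with camera observations.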

Like CMOS cameras, IMUs can be supplied as embeddable modules, or as external units.

Event/Neuromorphic

For the past decade, the perception world has been abuzz with interest in neuromorphic cameras (also called “event cameras”). These cameras work on an entirely different principle from other cameras. Instead of capturing and sending an entire frame’s worth of data, they send data pixel by pixel, and only for pixels where a change has occurred. As a result, they can operate at much higher speeds and transmit much smaller amounts of data. However, they are still expensive and difficult to source at scale. To date, there has been only one consumer application of event cameras, and it was not a success. Time will tell whether these experimental cameras escape the lab and become a regular part of real-world perception systems.
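The pixel-by-pixel behavior described above is commonly modeled as follows: each pixel remembers the log intensity at which it last fired and emits an event (x, y, timestamp, polarity) when the current log intensity moves past a contrast threshold. Real event sensors do this asynchronously in per-pixel circuitry; the sketch below emulates it from ordinary frames, which is a simplification, and the threshold and eps values are illustrative assumptions.

```python
import math
from typing import List, NamedTuple


class Event(NamedTuple):
    x: int
    y: int
    t: float
    polarity: int  # +1 = got brighter, -1 = got darker


def log_frame(frame: List[List[float]], eps: float = 1e-3) -> List[List[float]]:
    """Per-pixel log intensity; eps avoids log(0) on black pixels."""
    return [[math.log(i + eps) for i in row] for row in frame]


def emit_events(ref_log: List[List[float]], frame: List[List[float]], t: float,
                threshold: float = 0.2, eps: float = 1e-3) -> List[Event]:
    """Emit events where log intensity moved by >= threshold since the last event.

    ref_log holds each pixel's log intensity at its last event and is updated
    in place, only for pixels that fire. Unchanged pixels produce no output.
    """
    events: List[Event] = []
    for y, row in enumerate(frame):
        for x, intensity in enumerate(row):
            log_i = math.log(intensity + eps)
            delta = log_i - ref_log[y][x]
            if abs(delta) >= threshold:
                events.append(Event(x, y, t, 1 if delta > 0 else -1))
                ref_log[y][x] = log_i
    return events


if __name__ == "__main__":
    ref = log_frame([[100, 100], [100, 100]])
    # Only the two pixels that changed produce events; the other two stay silent.
    print(emit_events(ref, [[100, 200], [50, 100]], t=0.001))
```

This illustrates where the bandwidth savings come from: a static scene generates no output at all, and only changing pixels cost anything to transmit.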

Software

A perception system is more than just sensors; it’s also the software required to do something with that sensor data. Sensor software can operate at lower levels in the stack – for instance, Tangram Vision’s software ensures that all sensors are operating optimally and transmitting reliable data. It can also operate at the application level – an example would be using SLAMcore’s SLAM SDK to power a navigation system for a warehouse robot.

Within the perception software ecosystem, there has been heated competition among SLAM providers over the last decade, encouraged by increased investment in both robotics and XR technologies such as virtual reality and augmented reality. Consolidation has followed, with many teams acquired by the FAANG companies as they plan for future consumer products like AVs and VR headsets.

- Tangram Vision

- Occipital

- Kudan

- Augmented Pixels

- Arcturus Industries

- PyTorch

- TensorFlow

- SLAMcore

- Outsight

- OpenCV

- Wikitude

- Aivero

Conclusion

The perception industry is only at the beginning of its growth curve. Recent advancements in sensor modalities, embedded computing, and artificial intelligence have unlocked new capabilities, and ever more platforms will require perception sensing and software to operate.

As has been the case for more than a decade, continued consolidation and new company formation will be a constant feature of this market. Here at Tangram Vision, we plan to revisit and update this market map once or twice a year to keep pace with the changes.

Editor’s Note: This article was republished with permission from Tangram Vision.

About the Author

Adam Rodnitzky is the COO & Co-Founder of Tangram Vision. The San Francisco-based company specializes in helping robotics companies integrate sensors quickly and maximize uptime.

Prior to launching Tangram Vision, Rodnitzky was a mentor at StartX, a seed-stage accelerator focused on commercializing startups connected with Stanford University, and VP of marketing and GM of Occipital.
